Publications

Objectifying Social Presence: Evaluating Multimodal Degraders in ECAs Using the Heard Text Recall Paradigm

Embodied conversational agents (ECAs) are key social interaction partners in various virtual reality (VR) applications, with their perceived social presence significantly influencing the quality and effectiveness of user-ECA interactions. This paper investigates the potential of the Heard Text Recall (HTR) paradigm as an indirect objective proxy for evaluating social presence, which is traditionally assessed through subjective questionnaires. To this end, we use the HTR task, which was primarily designed to assess memory performance in listening tasks, in a dual-task paradigm to assess cognitive spare capacity and correlate the latter with subjectively rated social presence. As a prerequisite for this investigation, we introduce various co-verbal gesture modification techniques and assess their impact on the perceived naturalness of the presenting ECA, a crucial factor in fostering social presence. The main study then explores the applicability of HTR as a proxy for social presence by examining its effectiveness under different multimodal degraders of ECA behavior, including degraded co-verbal gestures, omitted lip synchronization, and the use of synthetic voices. The findings suggest that while HTR shows potential as an objective measure of social presence, its effectiveness is primarily evident in response to substantial changes in ECA behavior. The study also highlights the negative effects of synthetic voices and inadequate lip synchronization on perceived social presence, emphasizing the need for careful consideration of these elements in ECA design.


@ARTICLE{Ehret2026,

author={Ehret, Jonathan and Schüppen, Jonas and Mohanathasan, Chinthusa and Ermert, Cosima A. and Fels, Janina and Schlittmeier, Sabine J. and Kuhlen, Torsten W. and Bönsch, Andrea},

journal={IEEE Transactions on Visualization and Computer Graphics},

title={Objectifying Social Presence: Evaluating Multimodal Degraders in ECAs Using the Heard Text Recall Paradigm},

year={2026},

volume={32},

number={2},

pages={2312-2325},

doi={10.1109/TVCG.2025.3636079}

}

How Far is Too Far? The Trade-Off between Selection Distance and Accuracy during Teleportation in Immersive Virtual Reality

Target-selection-based teleportation is one of the most widely used and researched travel techniques in immersive virtual environments, requiring the user to specify a target location with a selection ray before being transported there. This work explores the influence of the maximum reach of the parabolic selection ray, modeled by different emission velocities of the projectile motion equation, and compares the resulting teleportation performance to a straight ray as the baseline. In a user study with 60 participants, we asked them to teleport as far as possible while remaining within accuracy constraints, to understand how the theoretical implications of the projectile motion equation apply to a realistic VR use case. We found that a projectile emission velocity of 14 m/s (resulting in a maximum reach of 21.52 m) offered the best trade-off between selection distance and accuracy, while the straight ray performed worse. Our results demonstrate the need to carefully set and report the projectile emission velocity in future work, as it directly influenced user-selected distance, selection errors, and controller height during selection.
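The abstract does not spell out the ray model, so the following is only a sketch under stated assumptions: a ballistic ray launched from the controller at height h above a flat floor, with gravitational acceleration g. Both h and g are assumptions, not parameters taken from the paper, and the function names are hypothetical. For a launch speed v, the reach over all launch angles is maximal at R_max = (v/g) * sqrt(v^2 + 2gh). With the assumed values g = 9.81 m/s^2 and h = 1.6 m, an emission velocity of 14 m/s gives R_max of about 21.52 m, which happens to match the reported figure; the study's actual parameters are not given here.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2 (assumed value)


def reach_at_angle(v: float, angle_rad: float, h: float, g: float = G) -> float:
    """Horizontal distance at which a projectile launched from height h
    with speed v and elevation angle angle_rad hits the floor plane y = 0."""
    vx = v * math.cos(angle_rad)
    vy = v * math.sin(angle_rad)
    # Positive root of h + vy*t - g*t^2/2 = 0 is the time of flight.
    t = (vy + math.sqrt(vy * vy + 2.0 * g * h)) / g
    return vx * t


def max_reach(v: float, h: float, g: float = G) -> float:
    """Closed-form maximum reach over all launch angles for launch height h."""
    return (v / g) * math.sqrt(v * v + 2.0 * g * h)


def optimal_angle(v: float, h: float, g: float = G) -> float:
    """Launch angle (radians) that attains max_reach; 45 degrees when h == 0."""
    return math.atan(v / math.sqrt(v * v + 2.0 * g * h))


if __name__ == "__main__":
    # Hypothetical controller height of 1.6 m for all velocities.
    for v in (10.0, 14.0, 18.0):
        angle_deg = math.degrees(optimal_angle(v, h=1.6))
        print(f"v = {v:4.1f} m/s -> max reach {max_reach(v, h=1.6):.2f} m at {angle_deg:.1f} deg")
```

Because the maximum reach grows roughly with v^2/g, small changes in emission velocity shift the reachable distance considerably, which is one way to see why the velocity should be reported.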


@ARTICLE{Rupp2026b,

author={Rupp, Daniel and Weissker, Tim and Wölwer, Matthias and Kuhlen, Torsten W. and Zielasko, Daniel},

journal={IEEE Transactions on Visualization and Computer Graphics},

title={How Far is Too Far? The Trade-Off Between Selection Distance and Accuracy During Teleportation in Immersive Virtual Reality},

year={2026},

volume={32},

number={2},

pages={1864-1878},

doi={10.1109/TVCG.2025.3632345}

}

SATOR: Seamless 3D Teleportation to both Ground and Mid-Air Targets

Traditional target-selection-based teleportation depends on the intersection of a (curved) ray with the scene's geometry, which restricts navigation to points on the ground and thus limits users' navigational freedom. While previous techniques permit mid-air target selection, they are poorly suited to transitioning between air and ground navigation, leading to inaccurate or lengthy interaction sequences. In this paper, we introduce SATOR, a new 3D teleportation technique designed to enable efficient and accurate navigation to both ground and mid-air targets by combining and enhancing previous approaches. Informed by the literature, we implemented four different parametrizations of our technique and compared them to a previously published technique that also enables both ground and mid-air target selection. Our user study with 30 participants indicates that SATOR is more efficient, accurate, and easier to use than the baseline. As a result, SATOR helps users get an overview of the environment, observe features at different heights, or maneuver quickly around larger obstacles.


@ARTICLE{Rupp2026,

author={Rupp, Daniel and Wölwer, Matthias and Kuhlen, Torsten W. and Zielasko, Daniel and Weissker, Tim},

journal={IEEE Transactions on Visualization and Computer Graphics},

title={{SATOR: Seamless 3D Teleportation to both Ground and Mid-Air Targets}},

year={2026},

volume={},

number={},

pages={1-10},

keywords={Virtual Reality;3D User Interfaces;3D Navigation;Head-Mounted Display;Teleportation;Flying;Mid-Air Navigation},

doi={10.1109/TVCG.2026.3679894}}

This Lecture Makes Me Sick: On Confounding Factors Influencing the Simulator Sickness Questionnaire (SSQ)

The Simulator Sickness Questionnaire (SSQ) has become a standard tool for quantifying the severity and distribution of discomfort symptoms in virtual reality (VR) research. Despite its straightforward administration, the use of the SSQ also comes with significant challenges, including response subjectivity, strict threshold values based on a military reference population, and confounding factors influencing the results. To demonstrate the adverse interplay of these issues, we asked three cohorts of students to fill in an SSQ after they had attended a 90-minute lecture of our teaching program. Although the students were not exposed to any VR experience, the resulting SSQ scores were indistinguishable from those reported in VR studies and extended far beyond the originally defined threshold of a “bad simulator”, with 88.1% of Total Severity (TS) scores exceeding it and 25.4% even exceeding thrice this value. We compare our results to alternative scoring systems of the SSQ proposed in the literature and suggest implications for future experimental designs involving the quantification of sickness symptoms. In summary, our results call for caution when interpreting SSQ results in the context of a VR study; participants might just have attended a lecture prior to the experiment.
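To make the TS scores and the threshold concrete, here is a sketch of the conventional SSQ scoring: 16 symptoms rated 0 to 3 are summed into Nausea, Oculomotor, and Disorientation clusters (five symptoms count toward two clusters), and the raw sums are weighted into subscale and Total Severity scores. The “bad simulator” cutoff of TS > 20 is an assumption taken from the commonly cited categorization and is exposed as a parameter. The helper names are hypothetical, and the alternative scoring systems discussed in the paper are not implemented here.

```python
from typing import Dict, Sequence

# 0-based indices of the 16 SSQ symptoms, each rated 0 (none) to 3 (severe):
# 0 general discomfort, 1 fatigue, 2 headache, 3 eyestrain, 4 difficulty focusing,
# 5 increased salivation, 6 sweating, 7 nausea, 8 difficulty concentrating,
# 9 fullness of head, 10 blurred vision, 11 dizzy (eyes open),
# 12 dizzy (eyes closed), 13 vertigo, 14 stomach awareness, 15 burping.
NAUSEA = (0, 5, 6, 7, 8, 14, 15)
OCULOMOTOR = (0, 1, 2, 3, 4, 8, 10)
DISORIENTATION = (4, 7, 9, 10, 11, 12, 13)

BAD_SIMULATOR_TS = 20.0  # assumed cutoff from the commonly cited categorization


def ssq_scores(ratings: Sequence[int]) -> Dict[str, float]:
    """Weighted subscale scores (N, O, D) and Total Severity (TS) for one questionnaire."""
    if len(ratings) != 16 or any(r not in (0, 1, 2, 3) for r in ratings):
        raise ValueError("expected 16 ratings in the range 0..3")
    n_raw = sum(ratings[i] for i in NAUSEA)
    o_raw = sum(ratings[i] for i in OCULOMOTOR)
    d_raw = sum(ratings[i] for i in DISORIENTATION)
    return {
        "N": 9.54 * n_raw,
        "O": 7.58 * o_raw,
        "D": 13.92 * d_raw,
        "TS": 3.74 * (n_raw + o_raw + d_raw),
    }


def share_above(ts_scores: Sequence[float], threshold: float = BAD_SIMULATOR_TS) -> float:
    """Fraction of TS scores strictly greater than the threshold."""
    if not ts_scores:
        raise ValueError("need at least one score")
    return sum(ts > threshold for ts in ts_scores) / len(ts_scores)
```

Calling `share_above(scores)` and `share_above(scores, threshold=3 * BAD_SIMULATOR_TS)` yields the two kinds of percentages the abstract reports. Note how coarse the scale is: rating a single item that loads on two clusters as “slight” (1) already gives TS = 3.74 × 2 = 7.48.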

@ARTICLE{Weissker2026a,

author={Weissker, Tim},

journal={IEEE Transactions on Visualization and Computer Graphics},

title={{This Lecture Makes Me Sick: On Confounding Factors Influencing the Simulator Sickness Questionnaire (SSQ)}},

year={2026},

volume={},

number={},

pages={1-7},

keywords={Virtual Reality;Simulator Sickness;Discomfort;Cybersickness;Nausea},

doi={10.1109/TVCG.2026.3679089}}

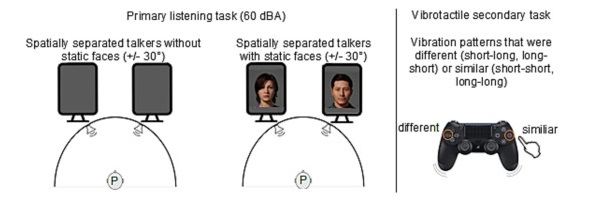

Beyond Words: The Impact of Static and Animated Faces as Visual Cues on Memory Performance and Listening Effort during Two-Talker Conversations

Listening to a conversation between two talkers and recalling the information is a common goal in verbal communication. However, cognitive-psychological experiments on short-term memory performance often rely on rather simple stimulus material, such as unrelated word lists or isolated sentences. The present study instead used running speech, in the form of two-talker conversations, to investigate whether talker-related visual cues enhance short-term memory performance and reduce listening effort in listening settings without background noise. In two equivalent dual-task experiments, participants listened to interrelated sentences spoken by two alternating talkers from two spatial positions, with talker-related visual cues presented as either static faces (Experiment 1, n = 30) or animated faces with lip sync (Experiment 2, n = 28). After each conversation, participants answered content-related questions as a measure of short-term memory (via the Heard Text Recall task). In parallel to listening, they performed a vibrotactile pattern recognition task to assess listening effort. Visual cue conditions (static or animated faces) were compared within-subject to a baseline condition without faces. To account for inter-individual variability, we measured individual working memory capacity, processing speed, and attentional functions and included them as cognitive covariates. After controlling for these covariates, results indicated that neither static nor animated faces improved short-term memory performance for conversational content. However, static faces reduced listening effort, whereas animated faces increased it, as indicated by reaction times (RTs) in the secondary task. Participants' subjective ratings mirrored these behavioral results. Furthermore, working memory capacity was associated with short-term memory performance, and processing speed was associated with listening effort, the latter reflected in performance on the vibrotactile secondary task.
In conclusion, this study demonstrates that visual cues influence listening effort and that individual differences in working memory and processing speed help explain variability in task performance, even in optimal listening conditions.

@article{MOHANATHASAN2026106295,

title = {Beyond words: The impact of static and animated faces as visual cues on memory performance and listening effort during two-talker conversations},

journal = {Acta Psychologica},

volume = {263},

pages = {106295},

year = {2026},

issn = {0001-6918},

doi = {10.1016/j.actpsy.2026.106295},

url = {https://www.sciencedirect.com/science/article/pii/S0001691826000946},

author = {Chinthusa Mohanathasan and Plamenna B. Koleva and Jonathan Ehret and Andrea Bönsch and Janina Fels and Torsten W. Kuhlen and Sabine J. Schlittmeier}

}

SPLOCIS - Extending SPLOMs to a Scatterplot Cube with Interactable Shadows for Immersive Analysis in Virtual Reality

In data analysis, scatterplots serve as an initial tool for exploring the relationships between two or three attributes. While scatterplot matrices (SPLOMs) display every attribute combination through numerous 2D scatterplots to give a concise overview of a multivariate dataset, this approach is not directly suitable for 3D scatterplots due to visual clutter. Since research has shown that immersive virtual environments can enhance data analysis compared to traditional 2D desktop setups, especially for spatial analysis tasks, we propose an interactive system, called SPLOCIS, that uses virtual reality to let users interactively filter and select 3D scatterplots from all possible attribute combinations. Our user study, combining qualitative and quantitative results, demonstrates that SPLOCIS is a novel and stimulating approach to working with multivariate data in immersive environments. It enables users to solve classic data exploration tasks efficiently and accurately without imposing an unexpectedly high task load. Moreover, our findings point to promising directions for further development.
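The clutter argument is combinatorial: for n attributes, a SPLOM needs n(n-1)/2 distinct 2D scatterplots (ignoring its mirrored cells and diagonal), while covering every 3D combination needs C(n, 3) = n(n-1)(n-2)/6 scatterplots. The short sketch below enumerates both; the helper names are hypothetical and not part of SPLOCIS. With 10 attributes, that is 45 pairs versus 120 triples.

```python
from itertools import combinations
from math import comb
from typing import List, Sequence, Tuple


def attribute_pairs(attrs: Sequence[str]) -> List[Tuple[str, ...]]:
    """All unordered attribute pairs, i.e. the distinct 2D scatterplots of a SPLOM."""
    return list(combinations(attrs, 2))


def attribute_triples(attrs: Sequence[str]) -> List[Tuple[str, ...]]:
    """All unordered attribute triples, i.e. every possible 3D scatterplot."""
    return list(combinations(attrs, 3))


def count_views(n: int) -> Tuple[int, int]:
    """Number of distinct 2D and 3D scatterplots for n attributes."""
    return comb(n, 2), comb(n, 3)


if __name__ == "__main__":
    for n in (4, 6, 8, 10, 12):
        pairs, triples = count_views(n)
        print(f"{n:2d} attributes: {pairs:3d} 2D plots, {triples:3d} 3D plots")
```

The number of 2D views grows quadratically but the number of 3D views grows cubically, so a naive "3D SPLOM" quickly becomes unmanageable, which motivates interactive filtering and selection instead.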

@INPROCEEDINGS{11457517,

author={Derksen, Melanie and Dieke, Viktoria and Kuhlen, Torsten and Botsch, Mario and Weissker, Tim},

booktitle={2026 IEEE Conference on Virtual Reality and 3D User Interfaces (VR)},

title={SPLOCIS – Extending SPLOMs to a Scatterplot Cube with Interactable Shadows for Immersive Analysis in Virtual Reality},

year={2026},

volume={},

number={},

pages={55-65},

keywords={Virtual reality;3D user interfaces;Head-mounted display;Immersive analytics;Scatterplot;Scatterplot matrix},

doi={10.1109/VR67842.2026.00029}}

Fostering Engagement through a Latency-Optimized LLM-based Dialogue System for Multimodal ECA Responses

Interactions with Embodied Conversational Agents (ECAs) are an integral part of many social Virtual Reality (VR) applications, increasing the need for free-form, context-sensitive conversations characterized by latency-optimized and multimodal ECA responses. Our methodology consists of three interdependent steps. First, we present a holistic framework driven by a Large Language Model (LLM), which integrates existing technologies into a modular and extendable system that is developer-friendly and suitable for diverse use cases. Building on this foundation, our second step comprises streaming-based optimizations that effectively reduce measured response latency, thereby facilitating real-time conversations. Finally, we conduct a comparative analysis between our latency-optimized LLM-driven ECA and a conventional button-based Wizard-of-Oz (WoZ) system to evaluate differences in user engagement. Our insights reveal that users perceive our LLM-driven ECA as significantly more natural, competent, and trustworthy than its WoZ counterpart, despite objective measures indicating slightly higher latency. These findings underscore the potential of LLMs to enhance engagement with ECAs in VR environments.
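The abstract does not detail the streaming-based optimizations. One common way to cut response latency, assumed here for illustration only, is to forward the LLM's token stream to speech synthesis sentence by sentence instead of waiting for the complete reply. The hypothetical generator below groups incoming tokens into complete sentences; it is a sketch of that general idea, not the paper's implementation.

```python
import re
from typing import Iterable, Iterator

# A sentence boundary: '.', '!' or '?' followed by whitespace.
_SENTENCE_END = re.compile(r"(?<=[.!?])\s+")


def stream_sentences(tokens: Iterable[str]) -> Iterator[str]:
    """Yield complete sentences as soon as they appear in a token stream.

    A sentence is emitted once the whitespace after its final punctuation
    arrives; the remainder is flushed when the stream ends. Abbreviations
    such as "Dr." are not handled and would cause an early split.
    """
    buffer = ""
    for token in tokens:
        buffer += token
        parts = _SENTENCE_END.split(buffer)
        # Everything except the last part is a finished sentence.
        for sentence in parts[:-1]:
            if sentence.strip():
                yield sentence.strip()
        # The last part may still be growing; keep it buffered.
        buffer = parts[-1]
    if buffer.strip():
        yield buffer.strip()


if __name__ == "__main__":
    fake_llm_tokens = ["Hello", " there.", " How", " are", " you?", " Fine"]
    for sentence in stream_sentences(fake_llm_tokens):
        print("send to TTS:", sentence)
```

In such a pipeline, each yielded sentence could be passed to a text-to-speech engine (and to lip-sync and gesture generation) while the LLM is still producing the rest of the reply, so the agent can start speaking after the first sentence rather than the last token.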


@INPROCEEDINGS{Kuehlem2026,

author={Kühlem, Konstantin W. and Ehret, Jonathan and Kuhlen, Torsten W. and Bönsch, Andrea},

booktitle={2026 IEEE International Conference on Artificial Intelligence and eXtended and Virtual Reality (AIxVR)},

title={{Fostering Engagement Through a Latency-Optimized LLM-Based Dialogue System for Multimodal ECA Responses}},

year={2026},

volume={},

number={},

pages={85-97},

keywords={Large language models;Buildings;Virtual reality;Oral communication;Real-time systems;Optimization;large language model;embodied conversational agents;latency;multi-modal responses;virtual reality},

doi={10.1109/AIxVR67263.2026.00019}}
