Welcome

at RWTH Aachen University!

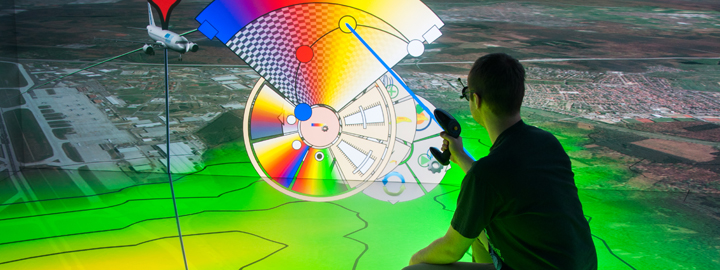

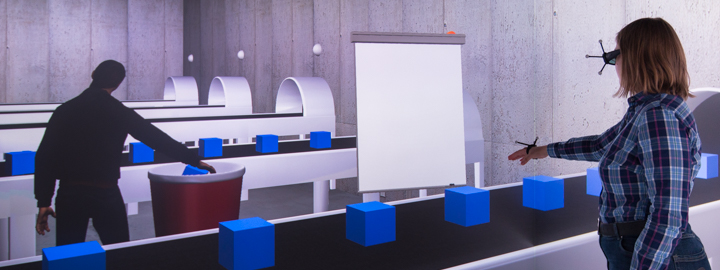

The Virtual Reality and Immersive Visualization Group started in 1998 as a service team in the RWTH IT Center. Since 2015, we have been a research group (Lehr- und Forschungsgebiet) at i12 within the Computer Science Department. The Group is also a member of the Visual Computing Institute and continues to be an integral part of the RWTH IT Center.

In a unique combination of research, teaching, services, and infrastructure, we provide Virtual Reality technologies and the underlying methodology as a powerful tool for scientific-technological applications.

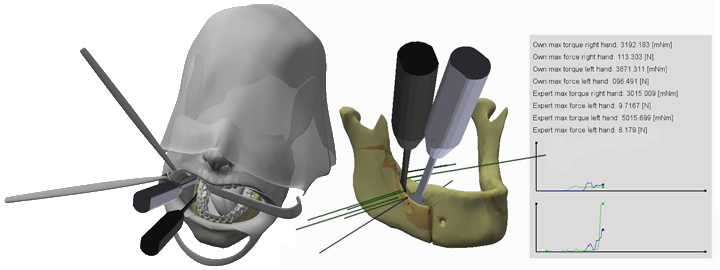

In terms of basic research, we develop advanced methods and algorithms for multimodal 3D user interfaces and explorative analyses in virtual environments. Furthermore, we focus on application-driven, interdisciplinary research in collaboration with RWTH Aachen institutes, Forschungszentrum Jülich, research institutions worldwide, and partners from business and industry, covering fields like simulation science, production technology, neuroscience, and medicine.

To this end, we are members of / associated with the following institutes and facilities:


Our offices are located in the RWTH IT Center, where we operate one of the largest Virtual Reality labs worldwide. The aixCAVE, a 30 sqm visualization chamber that makes it possible to interactively explore virtual worlds, is open for use by any RWTH Aachen research group.

News

• Martin Bellgardt receives doctoral degree from RWTH Aachen University

Today, our former colleague Martin Bellgardt successfully passed his Ph.D. defense and received a doctoral degree from RWTH Aachen University for his thesis on "Increasing Immersion in Machine Learning Pipelines for Mechanical Engineering". Congratulations!

April 30, 2025

• Student researcher opening in the area of Social VR.

Jan. 15, 2025

• Active Participation at 2024 IEEE VIS Conference (VIS 2024)

At this year's IEEE VIS Conference, several contributions from our visualization group were presented. Dr. Tim Gerrits chaired the 2024 SciVis Contest and presented two accepted papers: the short paper "DaVE - A Curated Database of Visualization Examples" by Jens Koenen, Marvin Petersen, Christoph Garth, and Dr. Tim Gerrits, and the contribution to the Workshop on Uncertainty, "Exploring Uncertainty Visualization for Degenerate Tensors in 3D Symmetric Second-Order Tensor Field Ensembles" by Tadea Schmitz and Dr. Tim Gerrits, which received the best paper award. Congratulations!

Oct. 22, 2024

• Honorable Mention

A Best Paper Honorable Mention Award of VRST 2024 was given to Sevinc Eroglu for her paper entitled “Choose Your Reference Frame Right: An Immersive Authoring Technique for Creating Reactive Behavior”.

Oct. 11, 2024

• Tim Gerrits as invited Keynote Speaker at the ParaView User Days in Lyon

ParaView, developed by Kitware, is one of the most-used open-source visualization and analysis tools in research and industry. For the second edition of the ParaView User Days, Dr. Tim Gerrits was invited to share his insights into developing and providing visualization within academic communities.

Sept. 26, 2024

• Invited Talk at Visual Computing for Biology and Medicine

This year's Eurographics Symposium on Visual Computing for Biology and Medicine (VCBM) in Magdeburg included a VCBM Fachgruppen Meeting with an invited presentation by Dr. Tim Gerrits on "Harnessing High Performance Infrastructure for Scientific Visualization of Medical Data".

Sept. 20, 2024

Recent Publications

Towards Comprehensible and Expressive Teleportation Techniques in Immersive Virtual Environments

2025 IEEE Conference on Virtual Reality and 3D User Interfaces Abstracts and Workshops (VRW)

Teleportation, a popular navigation technique in virtual environments, is favored for its efficiency and reduction of cybersickness but presents challenges such as reduced spatial awareness and limited navigational freedom compared to continuous techniques. I would like to focus on three aspects that advance our understanding of teleportation in both the spatial and the temporal domain: 1) assessing different parametrizations of common mathematical models used to specify the target location of the teleportation, and their influence on teleportation distance and accuracy; 2) extending teleportation capabilities to improve navigational freedom, comprehensibility, and accuracy; 3) adapting teleportation to the time domain, mitigating temporal disorientation. The results will enhance the expressivity of existing teleportation interfaces and provide validated alternatives to their steering-based counterparts.


Exploring Gaze Dynamics: Initial Findings on the Role of Listening Bystanders in Conversational Interactions

2025 IEEE Conference on Virtual Reality and 3D User Interfaces Abstracts and Workshops (VRW) - VHCIE

This work-in-progress paper investigates how virtual listening bystanders influence participants’ gaze behavior and their perception of turn-taking during scripted conversations with embodied conversational agents (ECAs). 25 participants interacted with five ECAs – two speakers and three bystanders – across three conditions: no bystanders, bystanders exhibiting random gazing behavior, and social bystanders engaging in mutual gaze and backchanneling. Participants either observed the conversation or actively participated as speakers by reciting prompted sentences. The results indicated that bystanders reduced the participants’ attention to speakers, hindering their ability to anticipate turn changes and resulting in longer delays in shifting their gaze to the new speaker after an ECA yielded the turn. Random gazing bystanders were particularly noted for obscuring conversational flow. These findings underscore the challenges of designing effective and natural conversational environments, highlighting the need for careful consideration of ECA behaviors to enhance user engagement.


Front Matter: 9th Edition of IEEE VR Workshop: Virtual Humans and Crowds in Immersive Environments (VHCIE)

2025 IEEE Conference on Virtual Reality and 3D User Interfaces Abstracts and Workshops (VRW) - VHCIE

The VHCIE workshop aims to explore and advance the creation of believable virtual humans and crowds within immersive virtual environments (IVEs). With the emergence of various tools, algorithms, and systems, it is now possible to design realistic virtual characters - known as virtual agents (VAs) - that can populate expansive environments with thousands of individuals. These sophisticated crowd simulations facilitate dynamic interactions among the VAs themselves and between VAs and virtual reality (VR) users. The VHCIE workshop seeks to highlight the diverse range of VR applications for these advancements, including virtual tour guides, platforms for professional training, studies on human behavior, and even recreations of live events like concerts. By fostering discussions around these themes, VHCIE aims to inspire innovative approaches and collaborative efforts that push the boundaries of what is possible in social IVEs while also providing an open place for networking and exchanging ideas among participants.
