Profile

Dr. Yuen Cheong Law Wan

Publications

Experiences on Validation of Multi-Component System Simulations for Medical Training Applications

In the simulation of multi-component systems, we often encounter a lack of ground-truth data. This makes the validation of our simulation methods and models a difficult task. In this work, we present a guideline for designing validation methodologies that can be applied to multi-component simulations that lack ground-truth data. Additionally, we present an example applied to an ultrasound image simulation for medical training and give an overview of the considerations made and the results for each of the validation methods. With these guidelines, we expect to obtain more comparable and reproducible validation results from which other similar work can benefit.

@InProceedings{eurorv3.20161113,

author = {Law, Yuen C. and Weyers, Benjamin and Kuhlen, Torsten W.},

title = {{Experiences on Validation of Multi-Component System Simulations for Medical Training Applications}},

booktitle = {EuroVis Workshop on Reproducibility, Verification, and Validation in Visualization (EuroRV3)},

year = {2016},

editor = {Kai Lawonn and Mario Hlawitschka and Paul Rosenthal},

publisher = {The Eurographics Association},

doi = {10.2312/eurorv3.20161113},

isbn = {978-3-03868-017-8},

pages = {29--33}

}

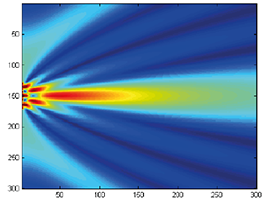

Simulation-based Ultrasound Training Supported by Annotations, Haptics and Linked Multimodal Views

When learning ultrasound (US) imaging, trainees must learn how to recognize structures, interpret textures and shapes, and simultaneously register the 2D ultrasound images to their 3D anatomical mental models. Alleviating the cognitive load imposed by these tasks should free cognitive resources and thereby improve the learning process. We argue that the cognitive load required to mentally rotate the models to match the images is too large and therefore negatively impacts the learning process. We present a 3D visualization tool that allows the user to naturally move a 2D slice and navigate around a 3D anatomical model. The slice is displayed in place to facilitate the registration of the 2D slice in its 3D context. Two duplicates are also shown externally to the model: the first is a simple rendered image showing the outlines of the structures, and the second is a simulated ultrasound image. Haptic cues are also provided to help users maneuver around the 3D model in the virtual space. With the additional display of annotations and information about the most important structures, the tool is expected to complement the available didactic material used in the training of ultrasound procedures.

BlowClick: A Non-Verbal Vocal Input Metaphor for Clicking

In contrast to the widespread use of 6-DOF pointing devices, freehand user interfaces in Immersive Virtual Environments (IVE) are non-intrusive. However, for gesture interfaces, the definition of trigger signals is challenging. Mechanical devices, dedicated trigger gestures, and speech recognition are commonly used options, but each comes with its own drawbacks. In this paper, we present an alternative approach that allows events to be triggered precisely and with low latency using microphone input. In contrast to speech recognition, the user only blows into the microphone. The audio signature of such blow events can be recognized quickly and precisely. The results of a user study show that the proposed method allows users to successfully complete a standard selection task and performs better than expected against a standard interaction device, the Flystick.

Cirque des Bouteilles: The Art of Blowing on Bottles

Making music by blowing on bottles is fun but challenging. We introduce a novel 3D user interface to play songs on virtual bottles. For this purpose, the user blows into a microphone, and the stream of air is recreated in the virtual environment and redirected to the virtual bottles she is pointing to with her fingers. This is easy to learn and subsequently opens up opportunities for quickly switching between bottles and playing groups of them together to form complex melodies. Furthermore, our interface enables the customization of the virtual environment by moving bottles or changing their type or filling level.

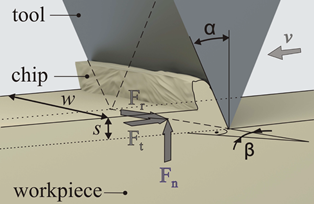

Preliminary Bone Sawing Model for a Virtual Reality-Based Training Simulator of Bilateral Sagittal Split Osteotomy

Successful bone sawing requires a high level of skill and experience, which could be gained through the use of Virtual Reality-based simulators. A key aspect of these medical simulators is realistic force feedback. The aim of this paper is to model the bone sawing process in order to develop a valid training simulator for the bilateral sagittal split osteotomy, the most frequently applied corrective surgery for malposition of the mandible. Bone samples from a human cadaveric mandible were tested using a purpose-designed experimental system. Image processing and statistical analysis were used for the selection of four models for the bone sawing process. The results revealed a polynomial dependency between the material removal rate and the applied force. Differences between the three segments of the osteotomy line and between the cortical and cancellous bone were highlighted.

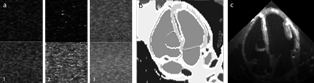

Software Phantom with Realistic Speckle Modeling for Validation of Image Analysis Methods in Echocardiography

Computer-assisted processing and interpretation of medical ultrasound images is one of the most challenging tasks within image analysis. Physical phenomena in ultrasonographic images, e.g., the characteristic speckle noise and shadowing effects, make the majority of standard image analysis methods suboptimal. Furthermore, validation of adapted computer vision methods proves difficult due to missing ground-truth information. There is no widely accepted software phantom in the community, and existing software phantoms are not flexible enough to support the use of specific speckle models for different tissue types, e.g., muscle and fat tissue. In this work, we propose an anatomical software phantom with a realistic speckle pattern simulation to fill this gap and provide a flexible tool for validation purposes in medical ultrasound image analysis. We discuss the generation of speckle patterns and perform statistical analysis of the simulated textures to obtain quantitative measures of the realism and accuracy of the resulting textures.

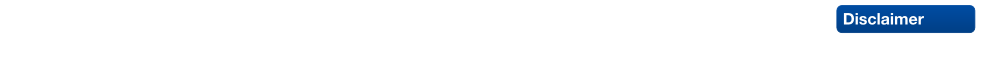

Geometrical-Acoustics-based Ultrasound Image Simulation

Brightness modulation (B-Mode) ultrasound (US) images are used to visualize internal body structures during diagnostic and invasive procedures, such as needle insertion for regional anesthesia. Because of patient availability and the health risks during invasive procedures, training is often limited; thus, medical training simulators become a viable solution to the problem. Simulation of ultrasound images for medical training requires not only an acceptable level of realism but also interactive rendering times in order to be effective. To address these challenges, we present a generative method for simulating B-Mode ultrasound images using surface representations of the body structures and geometrical acoustics to model sound propagation and its interaction within soft tissue. Furthermore, physical models for backscattered, reflected, and transmitted energies, as well as for the beam profile, are used in order to improve realism. Through the proposed methodology, we are able to simulate, in real time, plausible view- and depth-dependent visual artifacts that are characteristic of B-Mode US images, achieving both realism and interactivity.