Profile

Dipl.-Inform. Thomas Knott

Publications

Accurate and adaptive contact modeling for multi-rate multi-point haptic rendering of static and deformable environments

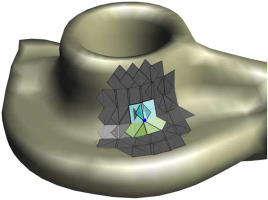

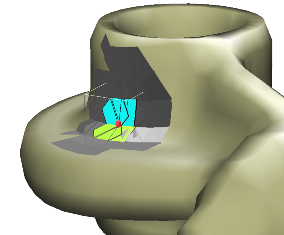

Common approaches for the haptic rendering of complex scenarios employ multi-rate simulation schemes. Here, the collision queries or the simulation of a complex deformable object are often performed asynchronously at a lower frequency, while some kind of intermediate contact representation is used to simulate interactions at the haptic rate. However, this can produce artifacts in the haptic rendering when the contact situation quickly changes and the intermediate representation is not able to reflect the changes due to the lower update rate.

We address this problem utilizing a novel contact model. It facilitates the creation of contact representations that are accurate for a large range of motions and multiple simulation time-steps. We handle problematic geometrically convex contact regions using a local convex decomposition and special constraints for convex areas. We combine our accurate contact model with an implicit temporal integration scheme to create an intermediate mechanical contact representation, which reflects the dynamic behavior of the simulated objects. To maintain a haptic real time simulation, the size of the region modeled by the contact representation is automatically adapted to the complexity of the geometry in contact. Moreover, we propose a new iterative solving scheme for the involved constrained dynamics problems. We increase the robustness of our method using techniques from trust region-based optimization. Our approach can be combined with standard methods for the modeling of deformable objects or constraint-based approaches for the modeling of, for instance, friction or joints. We demonstrate its benefits with respect to the simulation accuracy and the quality of the rendered haptic forces in several scenarios with one or more haptic proxies.
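
The multi-rate scheme and the stale-representation artifact described above can be illustrated with a minimal sketch. This is not the contact model proposed in the paper: it assumes a single contact plane as the intermediate representation, a simple penalty force, and a surface that rises steadily between slow updates. The names `ContactPlane`, `slow_update`, `haptic_force` and `simulate`, the stiffness value and the 10:1 rate ratio are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ContactPlane:
    """Hypothetical intermediate contact representation: the plane n.x = d."""
    normal: tuple   # unit normal pointing out of the obstacle
    offset: float   # plane offset d along the normal


def slow_update(surface_height):
    """Stand-in for the slow collision query: returns a horizontal plane."""
    return ContactPlane(normal=(0.0, 1.0), offset=surface_height)


def haptic_force(plane, pos, stiffness=500.0):
    """Penalty force from the cached plane, evaluated at the haptic rate."""
    depth = plane.offset - (plane.normal[0] * pos[0] + plane.normal[1] * pos[1])
    if depth <= 0.0:
        return (0.0, 0.0)  # device is outside the obstacle
    return (stiffness * depth * plane.normal[0],
            stiffness * depth * plane.normal[1])


def simulate(steps=30, ratio=10):
    """Run `steps` haptic steps; the plane is refreshed only every `ratio` steps."""
    forces = []
    plane = slow_update(0.0)
    pos = (0.0, -0.002)  # device held 2 mm below the initial surface
    for i in range(steps):
        true_height = 0.001 * i  # the real surface keeps moving upwards
        if i % ratio == 0:
            plane = slow_update(true_height)  # low-rate update
        forces.append(haptic_force(plane, pos)[1])  # high-rate evaluation
    return forces


if __name__ == "__main__":
    f = simulate()
    # The force is constant between updates and jumps when the plane refreshes.
    print(f[9], f[10])
```

Between two slow updates the cached plane no longer matches the moving surface, so the rendered force stays constant and then jumps at the next refresh. Artifacts of this kind are what a more accurate intermediate representation is meant to reduce.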

@Article{Knott201668,

Title = {Accurate and adaptive contact modeling for multi-rate multi-point haptic rendering of static and deformable environments},

Author = {Thomas C. Knott and Torsten W. Kuhlen},

Journal = {Computers \& Graphics},

Year = {2016},

Pages = {68--80},

Volume = {57},

Doi = {http://dx.doi.org/10.1016/j.cag.2016.03.007},

ISSN = {0097-8493},

Keywords = {Haptic rendering},

Url = {http://www.sciencedirect.com/science/article/pii/S0097849316300206}

}

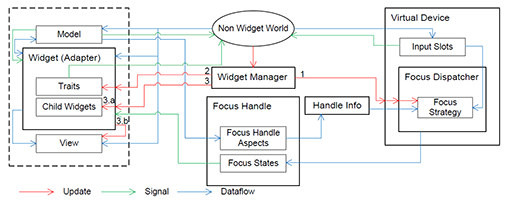

Vista Widgets: A Framework for Designing 3D User Interfaces from Reusable Interaction Building Blocks

Virtual Reality (VR) has been an active field of research for several decades, with 3D interaction and 3D User Interfaces (UIs) as important sub-disciplines. However, the development of 3D interaction techniques, and in particular combining several of them to construct complex and usable 3D UIs, remains challenging, especially in a VR context. In addition, there is currently only limited reusable software for implementing such techniques in comparison to traditional 2D UIs. To overcome this issue, we present ViSTA Widgets, a software framework for creating 3D UIs for immersive virtual environments. It extends the ViSTA VR framework by providing functionality to create multi-device, multi-focus-strategy interaction building blocks and means to easily combine them into complex 3D UIs. This is realized by introducing a device abstraction layer along with sophisticated focus management and functionality to create novel 3D interaction techniques and 3D widgets. We present the framework and illustrate its effectiveness with code and application examples accompanied by performance evaluations.

@InProceedings{Gebhardt2016,

Title = {{Vista Widgets: A Framework for Designing 3D User Interfaces from Reusable Interaction Building Blocks}},

Author = {Gebhardt, Sascha and Petersen-Krau, Till and Pick, Sebastian and Rausch, Dominik and Nowke, Christian and Knott, Thomas and Schmitz, Patric and Zielasko, Daniel and Hentschel, Bernd and Kuhlen, Torsten W.},

Booktitle = {Proceedings of the 22nd ACM Conference on Virtual Reality Software and Technology},

Year = {2016},

Address = {New York, NY, USA},

Pages = {251--260},

Publisher = {ACM},

Series = {VRST '16},

Acmid = {2993382},

Doi = {10.1145/2993369.2993382},

ISBN = {978-1-4503-4491-3},

Keywords = {3D interaction, 3D user interfaces, framework, multi-device, virtual reality},

Location = {Munich, Germany},

Numpages = {10},

Url = {http://doi.acm.org/10.1145/2993369.2993382}

}

Accurate Contact Modeling for Multi-rate Single-point Haptic Rendering of Static and Deformable Environments

Common approaches for the haptic rendering of complex scenarios employ multi-rate simulation schemes. Here, the collision queries or the simulation of a complex deformable object are often performed asynchronously at a lower frequency, while some kind of intermediate contact representation is used to simulate interactions at the haptic rate. However, this can produce artifacts in the haptic rendering when the contact situation quickly changes and the intermediate representation is not able to reflect the changes due to the lower update rate. We address this problem utilizing a novel contact model. It facilitates the creation of contact representations that are accurate for a large range of motions and multiple simulation time-steps. We handle problematic convex contact regions using a local convex decomposition and special constraints for convex areas. We combine our accurate contact model with an implicit temporal integration scheme to create an intermediate mechanical contact representation, which reflects the dynamic behavior of the simulated objects. Moreover, we propose a new iterative solving scheme for the involved constrained dynamics problems. We increase the robustness of our method using techniques from trust region-based optimization. Our approach can be combined with standard methods for the modeling of deformable objects or constraint-based approaches for the modeling of, for instance, friction or joints. We demonstrate its benefits with respect to the simulation accuracy and the quality of the rendered haptic forces in multiple scenarios.

Best Paper Award!

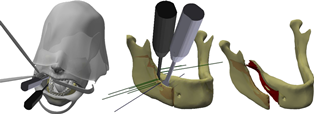

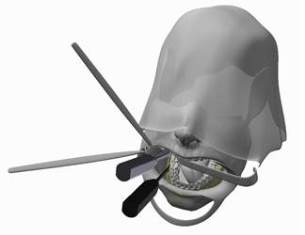

Bimanual Haptic Simulation of Bone Fracturing for the Training of the Bilateral Sagittal Split Osteotomy

In this work, we present a haptic training simulator for a maxillofacial procedure comprising the controlled breaking of the lower mandible. To our knowledge, the haptic simulation of fracture is seldom addressed, especially when a realistic breaking behavior is required. Our system combines bimanual haptic interaction with a simulation of the bone based on well-founded methods from fracture mechanics. The system resolves the conflict between simulation complexity and haptic real-time constraints by employing a dedicated multi-rate simulation and a special solving strategy for the occurring mechanical equations. Furthermore, we present remeshing-free methods for collision detection and visualization which are tailored for an efficient treatment of the topological changes induced by the fracture. The methods have been successfully implemented and tested in a simulator prototype using real pathological data and a semi-immersive VR-system with two haptic devices. We evaluated the computational efficiency of our methods and show that a stable and responsive haptic simulation of the fracturing has been achieved.

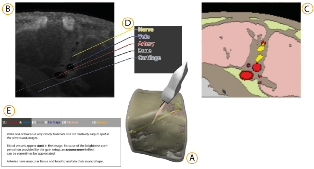

Simulation-based Ultrasound Training Supported by Annotations, Haptics and Linked Multimodal Views

When learning ultrasound (US) imaging, trainees must learn how to recognize structures, interpret textures and shapes, and simultaneously register the 2D ultrasound images to their 3D anatomical mental models. Alleviating the cognitive load imposed by these tasks should free the cognitive resources and thereby improve the learning process. We argue that the amount of cognitive load that is required to mentally rotate the models to match the images to them is too large and therefore negatively impacts the learning process. We present a 3D visualization tool that allows the user to naturally move a 2D slice and navigate around a 3D anatomical model. The slice is displayed in-place to facilitate the registration of the 2D slice in its 3D context. Two duplicates are also shown externally to the model; the first is a simple rendered image showing the outlines of the structures and the second is a simulated ultrasound image. Haptic cues are also provided to the users to help them maneuver around the 3D model in the virtual space. With the additional display of annotations and information of the most important structures, the tool is expected to complement the available didactic material used in the training of ultrasound procedures.

Cirque des Bouteilles: The Art of Blowing on Bottles

Making music by blowing on bottles is fun but challenging. We introduce a novel 3D user interface to play songs on virtual bottles. For this purpose the user blows into a microphone and the stream of air is recreated in the virtual environment and redirected to virtual bottles she is pointing to with her fingers. This is easy to learn and subsequently opens up opportunities for quickly switching between bottles and playing groups of them together to form complex melodies. Furthermore, our interface enables the customization of the virtual environment, by means of moving bottles, changing their type or filling level.

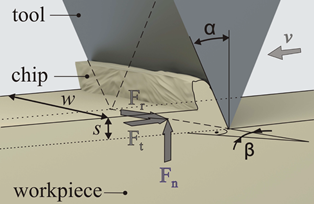

Preliminary Bone Sawing Model for a Virtual Reality-Based Training Simulator of Bilateral Sagittal Split Osteotomy

Successful bone sawing requires a high level of skill and experience, which could be gained by the use of Virtual Reality-based simulators. A key aspect of these medical simulators is realistic force feedback. The aim of this paper is to model the bone sawing process in order to develop a valid training simulator for the bilateral sagittal split osteotomy, the most often applied corrective surgery in case of a malposition of the mandible. Bone samples from a human cadaveric mandible were tested using a designed experimental system. Image processing and statistical analysis were used for the selection of four models for the bone sawing process. The results revealed a polynomial dependency between the material removal rate and the applied force. Differences between the three segments of the osteotomy line and between the cortical and cancellous bone were highlighted.
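
To make the reported polynomial dependency concrete, the following sketch fits a polynomial to force and removal-rate samples by least squares. The abstract states neither the polynomial degree nor any measured values, so the quadratic form and the sample numbers below are invented purely for illustration. The helper `fit_polynomial` is a hypothetical name and not part of the published work.

```python
def fit_polynomial(xs, ys, degree):
    """Least-squares polynomial fit via the normal equations.

    Returns coefficients c with y ~ c[0] + c[1]*x + ... + c[degree]*x**degree.
    """
    n = degree + 1
    # Normal equations A c = b with A[i][j] = sum(x^(i+j)), b[i] = sum(y * x^i).
    A = [[sum(x ** (i + j) for x in xs) for j in range(n)] for i in range(n)]
    b = [sum(y * x ** i for x, y in zip(xs, ys)) for i in range(n)]
    # Gaussian elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        b[col], b[pivot] = b[pivot], b[col]
        for row in range(col + 1, n):
            factor = A[row][col] / A[col][col]
            for k in range(col, n):
                A[row][k] -= factor * A[col][k]
            b[row] -= factor * b[col]
    # Back substitution.
    coeffs = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = sum(A[i][j] * coeffs[j] for j in range(i + 1, n))
        coeffs[i] = (b[i] - s) / A[i][i]
    return coeffs


# Synthetic samples (NOT measured data): applied force vs. material removal rate.
applied_force = [1.0, 2.0, 3.0, 4.0, 5.0]
removal_rate = [0.5 + 0.2 * f + 0.1 * f * f for f in applied_force]

coeffs = fit_polynomial(applied_force, removal_rate, degree=2)

if __name__ == "__main__":
    print(coeffs)
```

With real measurements, one such fit per osteotomy segment or bone type would be a natural way to expose the differences mentioned above, although the abstract does not say how the four candidate models were parameterized.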

Geometrically Limited Constraints for Physics-based Haptic Rendering

In this paper, a single-point haptic rendering technique is proposed which uses a constraint-based physics simulation approach. Geometries are sampled using point shell points, each associated with a small disk, that jointly result in a closed surface for the whole shell. The geometric information is incorporated into the constraint-based simulation using newly introduced geometrically limited contact constraints, which are active only in a restricted region corresponding to the disks in contact. The usage of disk constraints not only creates closed surfaces, which is important for single-point rendering, but also tackles the problem of over-constrained contact situations in convex geometric setups. Furthermore, an iterative solving scheme for dynamic problems that takes the proposed constraint type into account is presented. Finally, an evaluation of the simulation approach shows the advantages compared to standard contact constraints regarding the quality of the rendered forces.
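
One possible geometric reading of a "geometrically limited" contact constraint is sketched below: a plane-type non-penetration constraint that is evaluated only while the haptic point lies within the disk's radius. This is an assumption made for illustration, not the formulation from the paper. The names `Disk`, `plane_violation` and `limited_violation` are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Disk:
    """Hypothetical point shell sample: a small oriented disk."""
    center: tuple
    normal: tuple   # unit normal pointing out of the object
    radius: float


def _sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def plane_violation(disk, p):
    """Standard contact constraint: penetration below the unbounded plane."""
    return max(0.0, -_dot(_sub(p, disk.center), disk.normal))


def limited_violation(disk, p):
    """Same constraint, but active only inside the disk's restricted region."""
    rel = _sub(p, disk.center)
    signed = _dot(rel, disk.normal)
    # Component of rel that lies in the disk's plane.
    tangential = tuple(r - signed * n for r, n in zip(rel, disk.normal))
    if math.sqrt(_dot(tangential, tangential)) > disk.radius:
        return 0.0  # outside the disk: the constraint is inactive
    return max(0.0, -signed)


if __name__ == "__main__":
    d = Disk(center=(0.0, 0.0, 0.0), normal=(0.0, 0.0, 1.0), radius=0.01)
    print(limited_violation(d, (0.005, 0.0, -0.002)))  # inside the disk
    print(limited_violation(d, (0.05, 0.0, -0.002)))   # outside the disk
```

In a convex corner, unbounded planes from neighbouring samples can all report a violation at once. Restricting each constraint to its disk is one way to avoid such over-constrained situations.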

Data-flow Oriented Software Framework for the Development of Haptic-enabled Physics Simulations

This paper presents a software framework that supports the development of haptic-enabled physics simulations. The framework provides tools aiming to facilitate a fast prototyping process by utilizing component and flow-oriented architectures, while maintaining the capability to create efficient code which fulfills the performance requirements induced by the target applications. We argue that such a framework should not only ease the creation of prototypes but also help to effectively and efficiently evaluate them. To this end, we provide analysis tools and the possibility to build problem-oriented evaluation environments based on the described software concepts. As a motivating use case, we present a project with the goal of developing a haptic-enabled medical training simulator for a maxillofacial procedure. With this example, we demonstrate how the described framework can be used to create a simulation architecture for a complex haptic simulation and how the tools assist in the prototyping process.

Failure Mode and Effects Analysis in Designing a Virtual Reality-Based Training Simulator for Bilateral Sagittal Split Osteotomy

Virtual reality-based simulators offer a cost-effective and efficient alternative to traditional medical training and planning. Developing a simulator that enables the training of medical skills and also supports recognition of errors made by the trainee is a challenge. The first step in developing such a system consists of error identification in the real procedure, in order to ensure that the training environment covers the most significant errors that can occur. This paper focuses on identifying the main system requirements for an interactive simulator for training bilateral sagittal split osteotomy (BSSO). An approach is proposed based on failure mode and effects analysis (FMEA), a risk analysis method that is well structured and already an approved technique in other domains. Based on the FMEA results, a BSSO training simulator is currently being developed, which centers upon the main critical steps of the procedure (sawing and splitting) and their main errors. FMEA seems to be a suitable tool in the design phase of developing medical simulators. Herein, it serves as a communication medium for knowledge transfer between the medical experts and the system developers. The method encourages a reflective process and allows identification of the most important elements and scenarios that need to be trained.

Comparing Auditory and Haptic Feedback for a Virtual Drilling Task

While visual feedback is dominant in Virtual Environments, the use of other modalities like haptics and acoustics can enhance believability, immersion, and interaction performance. Haptic feedback is especially helpful for many interaction tasks like working with medical or precision tools. However, unlike visual and auditory feedback, haptic reproduction is often difficult to achieve due to hardware limitations. This article describes a user study to examine how auditory feedback can be used to substitute haptic feedback when interacting with a vibrating tool. Participants remove some target material with a round-headed drill while avoiding damage to the underlying surface. In the experiment, varying combinations of surface force feedback, vibration feedback, and auditory feedback are used. We describe the design of the user study and present the results, which show that auditory feedback can compensate for the lack of haptic feedback.

@inproceedings {EGVE:JVRC12:049-056,

booktitle = {Joint Virtual Reality Conference of ICAT - EGVE - EuroVR},

editor = {Ronan Boulic and Carolina Cruz-Neira and Kiyoshi Kiyokawa and David Roberts},

title = {{Comparing Auditory and Haptic Feedback for a Virtual Drilling Task}},

author = {Rausch, Dominik and Aspöck, Lukas and Knott, Thomas and Pelzer, Sönke and Vorländer, Michael and Kuhlen, Torsten},

year = {2012},

publisher = {The Eurographics Association},

ISSN = {1727-530X},

ISBN = {978-3-905674-40-8},

DOI = {10.2312/EGVE/JVRC12/049-056},

pages = {49--56}

}

Geometrical-Acoustics-based Ultrasound Image Simulation

Brightness modulation (B-Mode) ultrasound (US) images are used to visualize internal body structures during diagnostic and invasive procedures, such as needle insertion for regional anesthesia. Because of limited patient availability and the health risks during invasive procedures, training is often limited, and medical training simulators therefore become a viable solution to the problem. Simulation of ultrasound images for medical training requires not only an acceptable level of realism but also interactive rendering times in order to be effective. To address these challenges, we present a generative method for simulating B-Mode ultrasound images using surface representations of the body structures and geometrical acoustics to model sound propagation and its interaction within soft tissue. Furthermore, physical models for backscattered, reflected and transmitted energies as well as for the beam profile are used in order to improve realism. Through the proposed methodology we are able to simulate, in real time, plausible view- and depth-dependent visual artifacts that are characteristic of B-Mode US images, achieving both realism and interactivity.
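
As a rough illustration of one ingredient mentioned above, the split into reflected and transmitted energy at a tissue boundary, the sketch below follows a single ray through a stack of layers. It uses the standard normal-incidence intensity reflection coefficient, R = ((Z2 - Z1) / (Z2 + Z1))^2. This is not the paper's full model: attenuation, backscatter, oblique incidence, the beam profile and the return path of the echo are all ignored. The function names and the unitless impedance values are hypothetical.

```python
def intensity_reflection(z1, z2):
    """Fraction of incident intensity reflected at a flat interface
    between acoustic impedances z1 and z2 (normal incidence)."""
    return ((z2 - z1) / (z2 + z1)) ** 2


def propagate(impedances, initial_energy=1.0):
    """Follow one ray through successive layers.

    Returns (echoes, remaining): the energy reflected at each interface
    and the energy transmitted past the last interface.
    """
    echoes = []
    energy = initial_energy
    for z1, z2 in zip(impedances, impedances[1:]):
        r = intensity_reflection(z1, z2)
        echoes.append(energy * r)   # energy sent back at this interface
        energy *= 1.0 - r           # energy that continues into the next layer
    return echoes, energy


if __name__ == "__main__":
    # Illustrative, unitless impedances: two similar layers, then a much stiffer one.
    echoes, remaining = propagate([1.0, 1.1, 4.0])
    print(echoes, remaining)
```

A large impedance mismatch reflects most of the energy and leaves little for deeper layers. This is the mechanism behind depth-dependent artifacts such as acoustic shadowing.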