Profile

Dr. Daniel Zielasko

Now Post-Doc in Human-Computer Interaction at Department IV – Computer Science, University of Trier

Publications

Front Matter: The Third Workshop on Locomotion and Wayfinding in XR (LocXR)

The Third Workshop on Locomotion and Wayfinding in XR, held in conjunction with IEEE VR 2025 in Saint-Malo, France, is dedicated to advancing research and fostering discussions around the critical topics of navigation in extended reality (XR). Navigation is a fundamental form of user interaction in XR, yet it poses numerous challenges in conceptual design, technical implementation, and systematic evaluation. By bringing together researchers and practitioners, this workshop aims to address these challenges and push the boundaries of what is achievable in XR navigation.

@inproceedings{Weissker2025,

author={T. {Weissker} and D. {Zielasko}},

booktitle={2025 IEEE Conference on Virtual Reality and 3D User Interfaces Abstracts and Workshops (VRW)},

title={The Third Workshop on Locomotion and Wayfinding in XR (LocXR)},

year={2025},

volume={},

number={},

pages={239-240},

doi={10.1109/VRW66409.2025.00058}

}

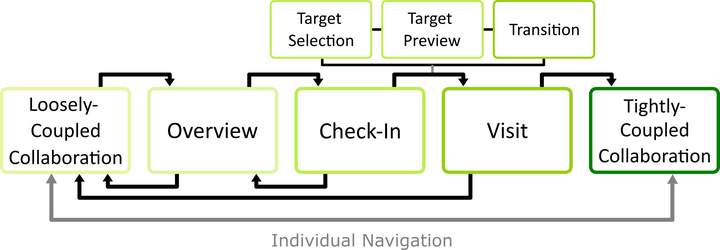

Come Look at This: Supporting Fluent Transitions between Tightly and Loosely Coupled Collaboration in Social Virtual Reality

Collaborative work in social virtual reality often requires an interplay of loosely coupled collaboration from different virtual locations and tightly coupled face-to-face collaboration. Without appropriate system mediation, however, transitioning between these phases requires high navigation and coordination efforts. In this paper, we present an interaction system that allows collaborators in virtual reality to seamlessly switch between different collaboration models known from related work. To this end, we present collaborators with functionalities that let them work on individual sub-tasks in different virtual locations, consult each other using asymmetric interaction patterns while keeping their current location, and temporarily or permanently join each other for face-to-face interaction. We evaluated our methods in a user study with 32 participants working in teams of two. Our quantitative results indicate that delegating the target selection process for a long-distance teleport significantly improves placement accuracy and decreases task load within the team. Our qualitative user feedback shows that our system can be applied to support flexible collaboration. In addition, the proposed interaction sequence received positive evaluations from teams with varying VR experiences.

@ARTICLE{10568966,

author={Bimberg, Pauline and Zielasko, Daniel and Weyers, Benjamin and Froehlich, Bernd and Weissker, Tim},

journal={IEEE Transactions on Visualization and Computer Graphics},

title={Come Look at This: Supporting Fluent Transitions between Tightly and Loosely Coupled Collaboration in Social Virtual Reality},

year={2024},

volume={},

number={},

pages={1-17},

keywords={Collaboration;Virtual environments;Navigation;Task analysis;Virtual reality;Three-dimensional displays;Teleportation;Virtual Reality;3D User Interfaces;Multi-User Environments;Social VR;Groupwork;Collaborative Interfaces},

doi={10.1109/TVCG.2024.3418009}}

Poster: Travel Speed, Spatial Awareness, And Implications for Egocentric Target-Selection-Based Teleportation - A Replication Design

Virtual travel in Virtual Reality experiences is common, offering users the ability to explore expansive virtual spaces. Various interfaces exist for virtual travel, with speed playing a crucial role in user experience and spatial awareness. Teleportation-based interfaces provide instantaneous transitions, whereas continuous and semi-continuous methods vary in speed and control. Prior research by Bowman et al. highlighted the impact of travel speed on spatial awareness, demonstrating that instantaneous travel can lead to user disorientation. However, additional cues, such as visual target selection, can aid in reorientation. This study replicates and extends Bowman’s experiment, investigating the influence of travel speed and visual target cues on spatial orientation.

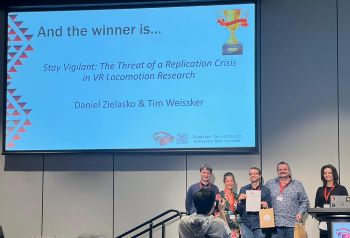

Stay Vigilant: The Threat of a Replication Crisis in VR Locomotion Research

The ability to reproduce previously published research findings is an important cornerstone of the scientific knowledge acquisition process. However, the exact details required to reproduce empirical experiments vary depending on the discipline. In this paper, we summarize key replication challenges as well as their specific consequences for VR locomotion research. We then present the results of a literature review on artificial locomotion techniques, in which we analyzed 61 papers published in the last five years with respect to their report of essential details required for reproduction. Our results indicate several issues in terms of the description of the experimental setup, the scientific rigor of the research process, and the generalizability of results, which altogether points towards a potential replication crisis in VR locomotion research. As a countermeasure, we provide guidelines to assist researchers with reporting future artificial locomotion experiments in a reproducible form.

Best Paper Award!

@inproceedings{10.1145/3611659.3615697,

author = {Zielasko, Daniel and Weissker, Tim},

title = {Stay Vigilant: The Threat of a Replication Crisis in VR Locomotion Research},

year = {2023},

isbn = {9798400703287},

publisher = {Association for Computing Machinery},

address = {New York, NY, USA},

url = {https://doi.org/10.1145/3611659.3615697},

doi = {10.1145/3611659.3615697},

abstract = {The ability to reproduce previously published research findings is an important cornerstone of the scientific knowledge acquisition process. However, the exact details required to reproduce empirical experiments vary depending on the discipline. In this paper, we summarize key replication challenges as well as their specific consequences for VR locomotion research. We then present the results of a literature review on artificial locomotion techniques, in which we analyzed 61 papers published in the last five years with respect to their report of essential details required for reproduction. Our results indicate several issues in terms of the description of the experimental setup, the scientific rigor of the research process, and the generalizability of results, which altogether points towards a potential replication crisis in VR locomotion research. As a countermeasure, we provide guidelines to assist researchers with reporting future artificial locomotion experiments in a reproducible form.},

booktitle = {Proceedings of the 29th ACM Symposium on Virtual Reality Software and Technology},

articleno = {39},

numpages = {10},

keywords = {Reproducibility, Virtual Reality, Replication Crisis, Teleportation, Locomotion, Steering},

location = {Christchurch, New Zealand},

series = {VRST '23}

}

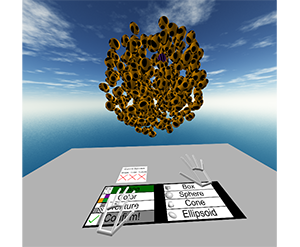

Design and Evaluation of a Free-Hand VR-based Authoring Environment for Automated Vehicle Testing

Virtual Reality is increasingly used for safe evaluation and validation of autonomous vehicles by automotive engineers. However, the design and creation of virtual testing environments is a cumbersome process. Engineers are bound to utilize desktop-based authoring tools, and a high level of expertise is necessary. By performing scene authoring entirely inside VR, faster design iterations become possible. To this end, we propose a VR authoring environment that enables engineers to design road networks and traffic scenarios for automated vehicle testing based on free-hand interaction. We present a 3D interaction technique for the efficient placement and selection of virtual objects that is employed on a 2D panel. We conducted a comparative user study in which our interaction technique outperformed existing approaches regarding precision and task completion time. Furthermore, we demonstrate the effectiveness of the system by a qualitative user study with domain experts.

Nominated for the Best Paper Award.

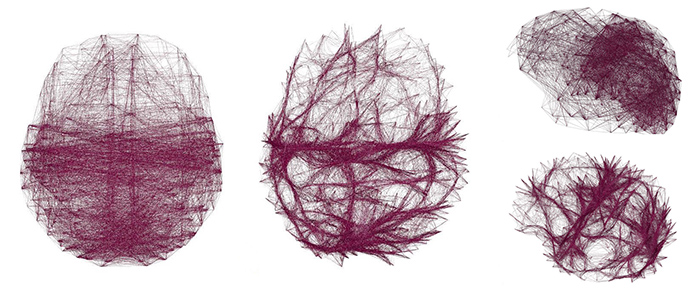

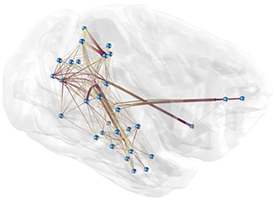

Voxel-Based Edge Bundling Through Direction-Aware Kernel Smoothing

Relational data with a spatial embedding and depicted as a node-link diagram is very common, e.g., in neuroscience, and edge bundling is one way to increase its readability or reveal hidden structures. This article presents a 3D extension to kernel density estimation-based edge bundling that is meant to be used in an interactive immersive analysis setting. This extension adds awareness of the edges’ direction when using kernel smoothing and thus implicitly supports both directed and undirected graphs. The method generates explicit bundles of edges, which can be analyzed and visualized individually and as sufficient as possible for a given application context, while it scales linearly with the input size.

@article{ZIELASKO2019,

title = "Voxel-based edge bundling through direction-aware kernel smoothing",

journal = "Computers & Graphics",

volume = "83",

pages = "87--96",

year = "2019",

issn = "0097-8493",

doi = "10.1016/j.cag.2019.06.008",

url = "http://www.sciencedirect.com/science/article/pii/S0097849319301025",

author = "Daniel Zielasko and Xiaoqing Zhao and Ali Can Demiralp and Torsten W. Kuhlen and Benjamin Weyers"}

Passive Haptic Menus for Desk-Based and HMD-Projected Virtual Reality

In this work, we evaluate the impact of passive haptic feedback on touch-based menus, given the constraints and possibilities of a seated, desk-based scenario in VR. To this end, we compare a menu placed on the surface of a desk with one placed mid-air on a surface in front of the user. The study design is completed by two conditions without passive haptic feedback. In the conducted user study (n = 33), we found effects of passive haptics (present vs. non-present) and menu alignment (desk vs. mid-air) on the task performance and subjective look & feel; however, the race between the conditions was close. An overall winner was the mid-air menu with passive haptic feedback, which, however, raises the hardware requirements.

@inproceedings{zielasko2019menu,

title={{Passive Haptic Menus for Desk-Based and HMD-Projected Virtual Reality}},

author={Zielasko, Daniel and Kr{\"u}ger, Marcel and Weyers, Benjamin and Kuhlen, Torsten W},

booktitle={Proc. of IEEE VR Workshop on Everyday Virtual Reality},

year={2019}

}

A Non-Stationary Office Desk Substitution for Desk-Based and HMD-Projected Virtual Reality

The ongoing migration of HMDs to the consumer market also allows the integration of immersive environments into analysis workflows that are often bound to an (office) desk. However, a critical factor when considering VR solutions for professional applications is the prevention of cybersickness. In the given scenario, the user is usually seated and the surrounding real-world environment is very dominant, with the desk itself arguably being its most dominant part. Including this desk in the virtual environment could serve as a resting frame and thus reduce cybersickness, besides opening up many further possibilities. In this work, we evaluate the feasibility of such a substitution in the context of a visual data analysis task involving travel, and measure the impact on cybersickness as well as the general task performance and presence. In the conducted user study (n=52), surprisingly, and partially in contradiction to existing work, we found no significant differences for those core measures between the control condition without a virtual table and the condition containing a virtual table. However, the results also support the inclusion of a virtual table in desk-based use cases.

@inproceedings{zielasko2019travel,

title={{A Non-Stationary Office Desk Substitution for Desk-Based and HMD-Projected Virtual Reality}},

author={Zielasko, Daniel and Weyers, Benjamin and Kuhlen, Torsten W},

booktitle ={Proc. of IEEE VR Workshop on Immersive Sickness Prevention},

year={2019}

}

Dynamic Field of View Reduction Related to Subjective Sickness Measures in an HMD-based Data Analysis Task

Various factors influence the degree of cybersickness a user can suffer in an immersive virtual environment, some of which can be controlled without adapting the virtual environment itself. When using HMDs, one example is the size of the field of view. However, the degree to which factors like this can be manipulated without affecting the user negatively in other ways is limited. Another prominent characteristic of cybersickness is that it affects individuals very differently. Therefore, to account for both the possible disruptive nature of alleviating factors and the high interpersonal variance, a promising approach may be to intervene only in cases where users experience discomfort symptoms, and only as much as necessary. Thus, we conducted a first experiment, in which the field of view was decreased when people felt uncomfortable, to evaluate the possible positive impact on sickness and negative influence on presence. While we found no significant evidence for any of these possible effects, interesting further results and observations were made.


@InProceedings{zielasko2018,

title={{Dynamic Field of View Reduction Related to Subjective Sickness Measures in an HMD-based Data Analysis Task}},

author={Zielasko, Daniel and Mei{\ss}ner, Alexander and Freitag, Sebastian and Weyers, Benjamin and Kuhlen, Torsten W},

booktitle ={Proc. of IEEE Virtual Reality Workshop on Everyday Virtual Reality},

year={2018}

}

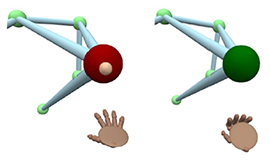

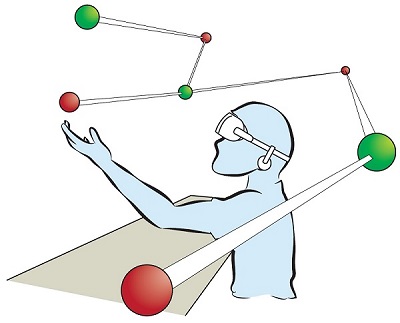

Seamless Hand-Based Remote and Close Range Interaction in IVEs

In this work, we describe a hybrid, hand-based interaction metaphor that makes remote and close objects in an HMD-based immersive virtual environment (IVE) seamlessly accessible. To accomplish this, different existing techniques, such as go-go and HOMER, were combined in a way that aims for generality, intuitiveness, uniformity, and speed. A technique like this is one prerequisite for a successful integration of IVEs into professional everyday applications, such as data analysis workflows.
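As a point of reference for the go-go technique named in this abstract, the following is a minimal sketch of the classic go-go arm-extension mapping as it is commonly described in the literature: below a threshold distance the virtual hand follows the real hand one-to-one, and beyond it the virtual hand is pushed out quadratically. It is not the hybrid metaphor of this publication. The function name, the use of meters, and the default values for `threshold` and `k` are illustrative assumptions.

```python
def gogo_virtual_distance(real_distance: float,
                          threshold: float = 0.4,
                          k: float = 10.0) -> float:
    """Map the real hand distance (from the torso) to a virtual hand distance.

    Classic go-go style mapping; threshold and k are assumed example values.
    """
    if real_distance < 0:
        raise ValueError("real_distance must be non-negative")
    if real_distance < threshold:
        # Close range: isomorphic, one-to-one mapping.
        return real_distance
    # Remote range: quadratic extension beyond the threshold.
    return real_distance + k * (real_distance - threshold) ** 2


# Example: a hand 0.3 m away stays at 0.3 m, while a hand 0.6 m away
# is mapped to roughly 1.0 m with the assumed parameters.
for d in (0.3, 0.6):
    print(d, gogo_virtual_distance(d))
```

The mapping is continuous at the threshold, which is what allows close-range and remote interaction to blend without a mode switch.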

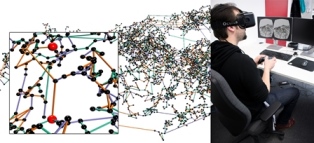

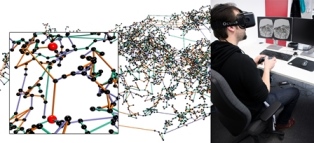

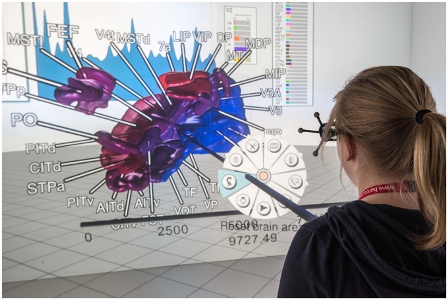

buenoSDIAs: Supporting Desktop Immersive Analytics While Actively Preventing Cybersickness

Immersive data analytics as an emerging research topic in scientific and information visualization has recently been brought back into focus due to the emergence of low-cost consumer virtual reality hardware. Previous research has shown the positive impact of immersive visualization on data analytics workflows, but in most cases, insights were based on large-screen setups. In contrast, less research focuses on a close integration of immersive technology into existing, i.e., desktop-based data analytics workflows. This implies specific requirements regarding the usability of such systems, which include, e.g., the prevention of cybersickness. In this work, we present a prototypical application, which offers a first set of tools and addresses major challenges for a fully immersive data analytics setting in which the user is sitting at a desktop. In particular, we address the problem of cybersickness by integrating prevention strategies combined with individualized user profiles to maximize time of use.

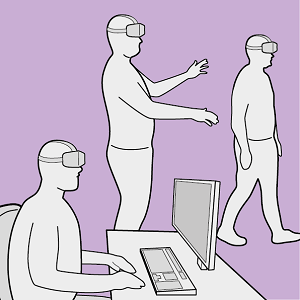

Utilizing Immersive Virtual Reality in Everyday Work

Applications of Virtual Reality (VR) have been repeatedly explored with the goal to improve the data analysis process of users from different application domains, such as architecture and simulation sciences. Unfortunately, making VR available in professional application scenarios or even using it on a regular basis has proven to be challenging. We argue that everyday usage environments, such as office spaces, have introduced constraints that critically affect the design of interaction concepts since well-established techniques might be difficult to use. In our opinion, it is crucial to understand the impact of usage scenarios on interaction design, to successfully develop VR applications for everyday use. To substantiate our claim, we define three distinct usage scenarios in this work that primarily differ in the amount of mobility they allow for. We outline each scenario's inherent constraints but also point out opportunities that may be used to design novel, well-suited interaction techniques for different everyday usage environments. In addition, we link each scenario to a concrete application example to clarify its relevance and show how it affects interaction design.

BlowClick 2.0: A Trigger Based on Non-Verbal Vocal Input

The use of non-verbal vocal input (NVVI) as a hands-free trigger approach has proven to be valuable in previous work [Zielasko2015]. Nevertheless, BlowClick's original detection method is vulnerable to false positives and, thus, is limited in its potential use, e.g., together with acoustic feedback for the trigger. Therefore, we extend the existing approach by adding common machine learning methods. We found that a support vector machine (SVM) with Gaussian kernel performs best for detecting blowing with at least the same latency and more precision than before. Furthermore, we added acoustic feedback to the NVVI trigger, which increases the user's confidence. To evaluate the advanced trigger technique, we conducted a user study (n=33). The results confirm that it is a reliable trigger, both alone and as part of a hands-free point-and-click interface.

A Reliable Non-Verbal Vocal Input Metaphor for Clicking

We extended BlowClick, an NVVI metaphor for clicking, by adding machine learning methods to more reliably classify blowing events. We found that a support vector machine with Gaussian kernel performed best, with at least the same latency and more precision than before. Furthermore, we added acoustic feedback to the NVVI trigger, which increases the user's confidence. With this extended technique we conducted a user study with 33 participants and could confirm that it is possible to use NVVI as a reliable trigger as part of a hands-free point-and-click interface.

Remain Seated: Towards Fully-Immersive Desktop VR

In this work, we describe the scenario of fully-immersive desktop VR, which serves the overall goal of seamlessly integrating with existing workflows and workplaces of data analysts and researchers, such that they can benefit from the gain in productivity when immersed in their data-spaces. Furthermore, we provide a literature review showing the status quo of techniques and methods available for realizing this scenario under the raised restrictions. Finally, we propose a concept for an analysis framework and discuss the decisions made so far and those still to be taken, to outline how the described scenario and the collected methods are feasible in a real use case.

Poster: Towards a Design Space Characterizing Workflows that Take Advantage of Immersive Visualization

Immersive visualization (IV) fosters the creation of mental images of a data set, a scene, a procedure, etc. We devise an initial version of a design space for categorizing workflows that take advantage of IV. From this categorization, specific requirements for seamlessly integrating IV can be derived. We validate the design space with three workflows emerging from our research projects.

@InProceedings{Vierjahn2017,

Title = {Towards a Design Space Characterizing Workflows that Take Advantage of Immersive Visualization},

Author = {Tom Vierjahn and Daniel Zielasko and Kees van Kooten and Peter Messmer and Bernd Hentschel and Torsten W. Kuhlen and Benjamin Weyers},

Booktitle = {IEEE Virtual Reality Conference Poster Proceedings},

Year = {2017},

Pages = {329-330},

DOI={10.1109/VR.2017.7892310}

}

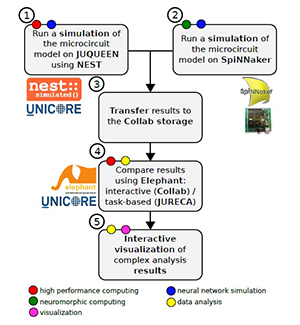

A Collaborative Simulation-Analysis Workflow for Computational Neuroscience Using HPC

Workflows for the acquisition and analysis of data in the natural sciences exhibit a growing degree of complexity and heterogeneity, are increasingly performed in large collaborative efforts, and often require the use of high-performance computing (HPC). Here, we explore the reasons for these new challenges and demands and discuss their impact, with a focus on the scientific domain of computational neuroscience. We argue for the need for software platforms integrating HPC systems that allow scientists to construct, comprehend and execute workflows composed of diverse processing steps using different tools. As a use case we present a concrete implementation of such a complex workflow, covering diverse topics such as HPC-based simulation using the NEST software, access to the SpiNNaker neuromorphic hardware platform, complex data analysis using the Elephant library, and interactive visualizations. Tools are embedded into a web-based software platform under development by the Human Brain Project, called Collaboratory. On the basis of this implementation, we discuss the state-of-the-art and future challenges in constructing large, collaborative workflows with access to HPC resources.

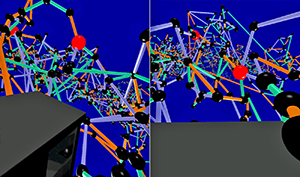

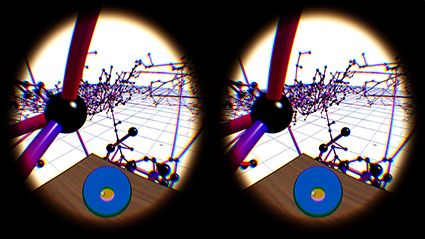

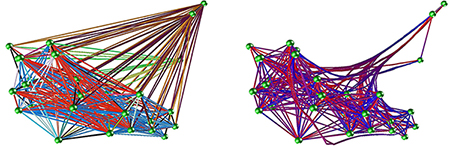

Interactive 3D Force-Directed Edge Bundling

Interactive analysis of 3D relational data is challenging. A common way of representing such data is the node-link diagram, as it supports analysts in achieving a mental model of the data. However, naïve 3D depictions of complex graphs tend to be visually cluttered, even more than in a 2D layout. This makes graph exploration and data analysis less efficient. This problem can be addressed by edge bundling. We introduce a 3D cluster-based edge bundling algorithm that is inspired by the force-directed edge bundling (FDEB) algorithm [Holten2009] and fulfills the requirements to be embedded in an interactive framework for spatial data analysis. It is parallelized and scales with the size of the graph regarding the runtime. Furthermore, it maintains the edge’s model and thus supports rendering the graph in different structural styles. We demonstrate this with a graph originating from a simulation of the function of a macaque brain.

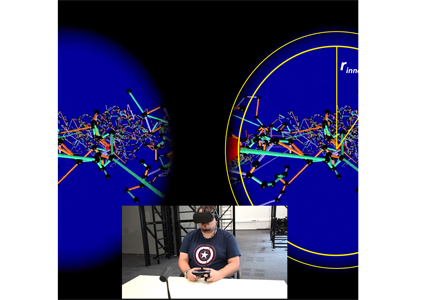

Evaluation of Hands-Free HMD-Based Navigation Techniques for Immersive Data Analysis

To use the full potential of immersive data analysis when wearing a head-mounted display, users have to be able to navigate through the spatial data. We collected, developed and evaluated 5 different hands-free navigation methods that are usable while seated in the analyst’s usual workplace. All methods meet the requirements of being easy to learn and inexpensive to integrate into existing workplaces. We conducted a user study with 23 participants which showed that a body leaning metaphor and an accelerometer pedal metaphor performed best. In the given task the participants had to determine the shortest path between various pairs of vertices in a large 3D graph.

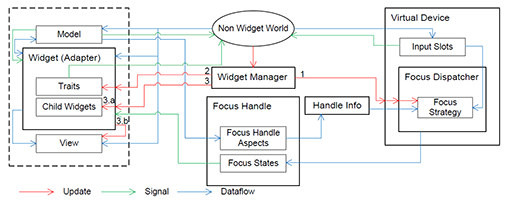

Vista Widgets: A Framework for Designing 3D User Interfaces from Reusable Interaction Building Blocks

Virtual Reality (VR) has been an active field of research for several decades, with 3D interaction and 3D User Interfaces (UIs) as important sub-disciplines. However, the development of 3D interaction techniques and in particular combining several of them to construct complex and usable 3D UIs remains challenging, especially in a VR context. In addition, there is currently only limited reusable software for implementing such techniques in comparison to traditional 2D UIs. To overcome this issue, we present ViSTA Widgets, a software framework for creating 3D UIs for immersive virtual environments. It extends the ViSTA VR framework by providing functionality to create multi-device, multi-focus-strategy interaction building blocks and means to easily combine them into complex 3D UIs. This is realized by introducing a device abstraction layer along with sophisticated focus management and functionality to create novel 3D interaction techniques and 3D widgets. We present the framework and illustrate its effectiveness with code and application examples accompanied by performance evaluations.

@InProceedings{Gebhardt2016,

Title = {{Vista Widgets: A Framework for Designing 3D User Interfaces from Reusable Interaction Building Blocks}},

Author = {Gebhardt, Sascha and Petersen-Krau, Till and Pick, Sebastian and Rausch, Dominik and Nowke, Christian and Knott, Thomas and Schmitz, Patric and Zielasko, Daniel and Hentschel, Bernd and Kuhlen, Torsten W.},

Booktitle = {Proceedings of the 22nd ACM Conference on Virtual Reality Software and Technology},

Year = {2016},

Address = {New York, NY, USA},

Pages = {251--260},

Publisher = {ACM},

Series = {VRST '16},

Acmid = {2993382},

Doi = {10.1145/2993369.2993382},

ISBN = {978-1-4503-4491-3},

Keywords = {3D interaction, 3D user interfaces, framework, multi-device, virtual reality},

Location = {Munich, Germany},

Numpages = {10},

Url = {http://doi.acm.org/10.1145/2993369.2993382}

}

Poster: Evaluation of Hands-Free HMD-Based Navigation Techniques for Immersive Data Analysis

To use the full potential of immersive data analysis when wearing a head-mounted display, the user has to be able to navigate through the spatial data. We collected, developed and evaluated 5 different hands-free navigation methods that are usable while seated in the analyst’s usual workplace. All methods meet the requirements of being easy to learn and inexpensive to integrate into existing workplaces. We conducted a user study with 23 participants which showed that a body leaning metaphor and an accelerometer pedal metaphor performed best within the given task.

Integrating Visualizations into Modeling NEST Simulations

Modeling large-scale spiking neural networks showing realistic biological behavior in their dynamics is a complex and tedious task. Since these networks consist of millions of interconnected neurons, their simulation produces an immense amount of data. In recent years it has become possible to simulate even larger networks. However, solutions to assist researchers in understanding the simulation's complex emergent behavior by means of visualization are still lacking. While developing tools to partially fill this gap, we encountered the challenge of integrating these tools easily into the neuroscientists' daily workflow. To understand what makes this so challenging, we looked into the workflows of our collaborators and analyzed how they use the visualizations to solve their daily problems. We identified two major issues: first, the analysis process can rapidly change focus, which requires switching to the visualization tool that assists in the current problem domain. Second, because of the heterogeneous data that results from simulations, researchers want to relate data in order to investigate them effectively. Since a monolithic application model, processing and visualizing all data modalities and reflecting all combinations of possible workflows in a holistic way, is most likely impossible to develop and to maintain, a software architecture that offers specialized visualization tools that run simultaneously and can be linked together to reflect the current workflow is a more feasible approach. To this end, we have developed a software architecture that allows neuroscientists to integrate visualization tools more closely into the modeling tasks. In addition, it forms the basis for semantic linking of different visualizations to reflect the current workflow. In this paper, we present this architecture and substantiate the usefulness of our approach by common use cases we encountered in our collaborative work.

BlowClick: A Non-Verbal Vocal Input Metaphor for Clicking

In contrast to the widespread use of 6-DOF pointing devices, freehand user interfaces in Immersive Virtual Environments (IVE) are non-intrusive. However, for gesture interfaces, the definition of trigger signals is challenging. Mechanical devices, dedicated trigger gestures, or speech recognition are often-used options, but each comes with its own drawbacks. In this paper, we present an alternative approach, which allows events to be triggered precisely and with low latency using microphone input. In contrast to speech recognition, the user only blows into the microphone. The audio signature of such blow events can be recognized quickly and precisely. The results of a user study show that the proposed method allows users to successfully complete a standard selection task and performs better than expected against a standard interaction device, the Flystick.

Cirque des Bouteilles: The Art of Blowing on Bottles

Making music by blowing on bottles is fun but challenging. We introduce a novel 3D user interface to play songs on virtual bottles. For this purpose the user blows into a microphone and the stream of air is recreated in the virtual environment and redirected to virtual bottles she is pointing to with her fingers. This is easy to learn and subsequently opens up opportunities for quickly switching between bottles and playing groups of them together to form complex melodies. Furthermore, our interface enables the customization of the virtual environment, by means of moving bottles, changing their type or filling level.

The Human Brain Project - Chances and Challenges for Cognitive Systems

The Human Brain Project is one of the largest scientific initiatives dedicated to the research of the human brain worldwide. Over 80 research groups from a broad variety of scientific areas, such as neuroscience, simulation science, high performance computing, robotics, and visualization work together in this European research initiative. The work at hand identifies certain chances and challenges for cognitive systems engineering resulting from the HBP research activities. Besides the main goal of the HBP, gathering deeper insights into the structure and function of the human brain, cognitive system research can directly benefit from the creation of cognitive architectures, the simulation of neural networks, and the application of these in the context of (neuro-)robotics. Nevertheless, challenges arise regarding the utilization and transformation of these research results for cognitive systems, which will be discussed in this paper. Tools necessary to cope with these challenges are visualization techniques helping to understand and gain insights into complex data. Therefore, this paper presents a set of visualization techniques developed at the Virtual Reality Group at the RWTH Aachen University.

@inproceedings{Weyers2014,

author = {Weyers, Benjamin and Nowke, Christian and H{\"{a}}nel, Claudia and Zielasko, Daniel and Hentschel, Bernd and Kuhlen, Torsten},

booktitle = {Workshop Kognitive Systeme: Mensch, Teams, Systeme und Automaten},

title = {{The Human Brain Project – Chances and Challenges for Cognitive Systems}},

year = {2014}

}

Poster: Interactive 3D Force-Directed Edge Bundling on Clustered Edges

Graphs play an important role in data analysis. In particular, graphs with a natural spatial embedding can benefit from a 3D visualization. But even more than in 2D, graphs visualized as intuitively readable 3D node-link diagrams can become very cluttered. This makes graph exploration and data analysis difficult. For this reason, we focus on the challenge of reducing edge clutter by utilizing edge bundling. In this paper we introduce a parallel, edge-cluster-based accelerator for the force-directed edge bundling algorithm presented in [Holten2009]. This opens up the possibility for user interaction during and after both the clustering and the bundling.