Profile

Jonathan Ehret, M.Sc.

Jonathan Ehret, né Wendt, received his Master's degree from RWTH Aachen in 2016. He now conducts research in the field of Social VR, integrating virtual agents (VAs) as advanced, emotional human interfaces into VR applications. His focus is on conversational VAs, including aspects such as the auralisation of speech, language register, and co-verbal gestures.

Publications

Beyond Words: The Impact of Static and Animated Faces as Visual Cues on Memory Performance and Listening Effort during Two-Talker Conversations

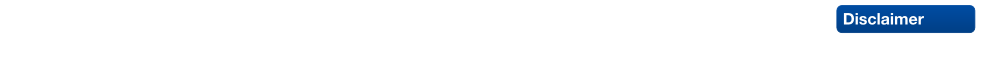

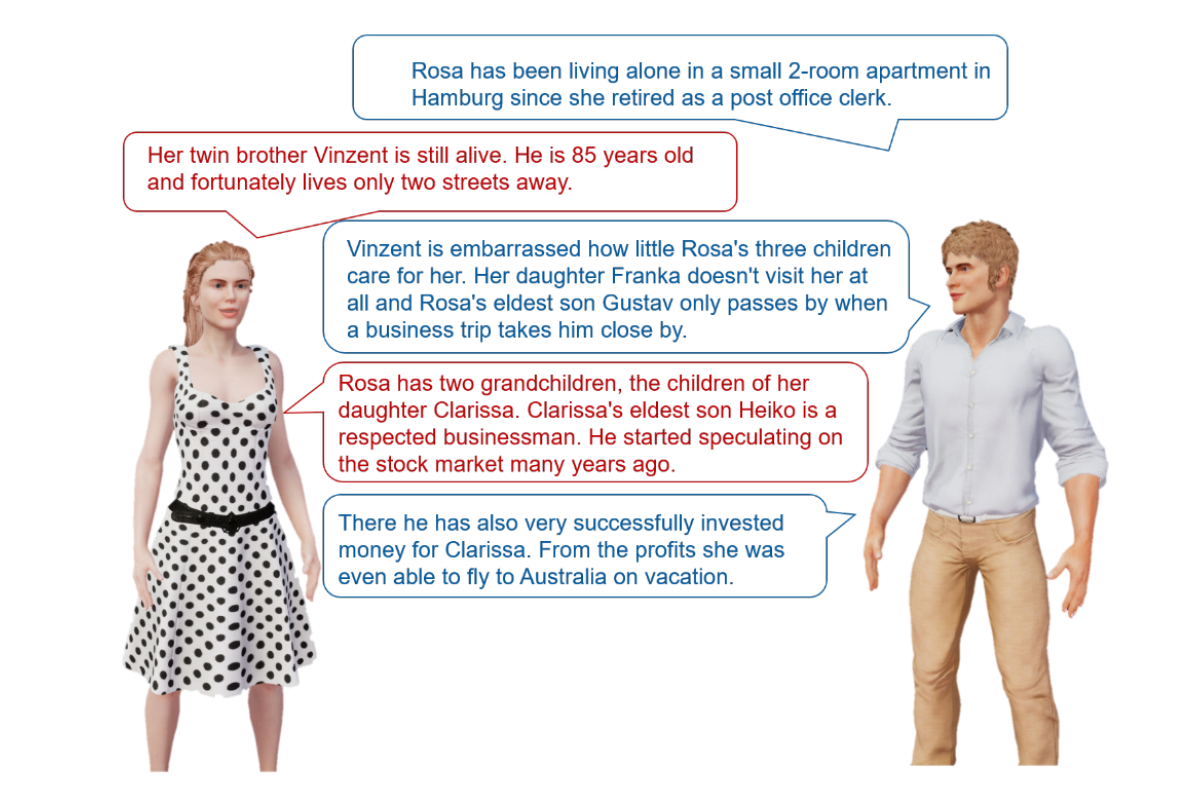

Listening to a conversation between two talkers and recalling the information is a common goal in verbal communication. However, cognitive-psychological experiments on short-term memory performance often rely on rather simple stimulus material, such as unrelated word lists or isolated sentences. The present study uniquely incorporated running speech, such as listening to a two-talker conversation, to investigate whether talker-related visual cues enhance short-term memory performance and reduce listening effort in non-noisy listening settings. In two equivalent dual-task experiments, participants listened to interrelated sentences spoken by two alternating talkers from two spatial positions, with talker-related visual cues presented as either static faces (Experiment 1, n = 30) or animated faces with lip sync (Experiment 2, n = 28). After each conversation, participants answered content-related questions as a measure of short-term memory (via the Heard Text Recall task). In parallel to listening, they performed a vibrotactile pattern recognition task to assess listening effort. Visual cue conditions (static or animated faces) were compared within-subject to a baseline condition without faces. To account for inter-individual variability, we measured and included individual working memory capacity, processing speed, and attentional functions as cognitive covariates. After controlling for these covariates, results indicated that neither static nor animated faces improved short-term memory performance for conversational content. However, static faces reduced listening effort, whereas animated faces increased it, as indicated by secondary task RTs. Participants' subjective ratings mirrored these behavioral results. Furthermore, working memory capacity was associated with short-term memory performance, and processing speed was associated with listening effort, the latter reflected in performance on the vibrotactile secondary task. 
In conclusion, this study demonstrates that visual cues influence listening effort and that individual differences in working memory and processing speed help explain variability in task performance, even in optimal listening conditions.

@article{MOHANATHASAN2026106295,

title = {Beyond words: The impact of static and animated faces as visual cues on memory performance and listening effort during two-talker conversations},

journal = {Acta Psychologica},

volume = {263},

pages = {106295},

year = {2026},

issn = {0001-6918},

doi = {10.1016/j.actpsy.2026.106295},

url = {https://www.sciencedirect.com/science/article/pii/S0001691826000946},

author = {Chinthusa Mohanathasan and Plamenna B. Koleva and Jonathan Ehret and Andrea Bönsch and Janina Fels and Torsten W. Kuhlen and Sabine J. Schlittmeier}

}

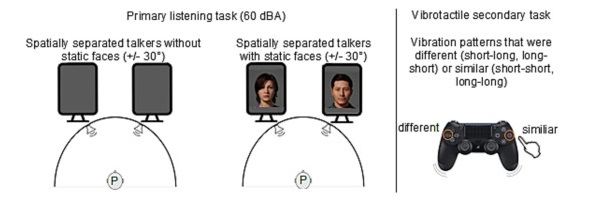

Objectifying Social Presence: Evaluating Multimodal Degraders in ECAs Using the Heard Text Recall Paradigm

Embodied conversational agents (ECAs) are key social interaction partners in various virtual reality (VR) applications, with their perceived social presence significantly influencing the quality and effectiveness of user-ECA interactions. This paper investigates the potential of the Heard Text Recall (HTR) paradigm as an indirect objective proxy for evaluating social presence, which is traditionally assessed through subjective questionnaires. To this end, we use the HTR task, which was primarily designed to assess memory performance in listening tasks, in a dual-task paradigm to assess cognitive spare capacity and correlate the latter with subjectively-rated social presence. As a prerequisite for this investigation, we introduce various co-verbal gesture modification techniques and assess their impact on the perceived naturalness of the presenting ECA, a crucial aspect fostering social presence. The main study then explores the applicability of HTR as a proxy for social presence by examining its effectiveness under different multimodal degraders of ECA behavior, including degraded co-verbal gestures, omitted lip synchronization, and the use of synthetic voices. The findings suggest that while HTR shows potential as an objective measure of social presence, its effectiveness is primarily evident in response to substantial changes in ECA behavior. Additionally, the study also highlights the negative effects of synthetic voices and inadequate lip synchronization on perceived social presence, emphasizing the need for careful consideration of these elements in ECA design.

@ARTICLE{11271093,

author={Ehret, Jonathan and Schüppen, Jonas and Mohanathasan, Chinthusa and Ermert, Cosima A. and Fels, Janina and Schlittmeier, Sabine J. and Kuhlen, Torsten W. and Bönsch, Andrea},

journal={IEEE Transactions on Visualization and Computer Graphics},

title={Objectifying Social Presence: Evaluating Multimodal Degraders in ECAs Using the Heard Text Recall Paradigm},

year={2025},

volume={},

number={},

pages={1-15},

doi={10.1109/TVCG.2025.3636079}

}

Fostering Engagement through a Latency-Optimized LLM-based Dialogue System for Multimodal ECA Responses

Interactions with Embodied Conversational Agents (ECAs) are an integral part of many social Virtual Reality (VR) applications, increasing the need for free, context-sensitive conversations characterized by latency-optimized and multimodal ECA responses. Our presented methodology consists of three interdependent steps: We first present a holistic framework driven by a Large Language Model (LLM), which integrates existing technologies into a modular and extendable system that is developer-friendly and suitable for diverse use-cases. Building on this foundation, our second step comprises streaming-based optimizations that effectively reduce measured response latency, thereby facilitating real-time conversations. Finally, we conduct a comparative analysis between our latency-optimized LLM-driven ECA and a conventional button-based Wizard-of-Oz (WoZ) system to evaluate performance differences in user engagement. Our insights reveal that users perceive our LLM-driven ECA as significantly more natural, competent, and trustworthy than its WoZ counterpart, despite objective measures indicating slightly higher latency in technical performance. These findings underscore the potential of LLMs to enhance engagement in ECAs within VR environments.

Audiovisual angle and voice incongruence do not affect audiovisual verbal short-term memory in virtual reality

Virtual reality (VR) environments are frequently used in auditory and cognitive research to imitate real-life scenarios. The visual component in VR has the potential to affect how auditory information is processed, especially if incongruences between the visual and auditory information occur. This study investigated how audiovisual incongruence in VR implemented with a head-mounted display (HMD) affects verbal short-term memory compared to presentation of the same material over traditional computer monitors. Two experiments were conducted with both these display devices and two types of audiovisual incongruences: angle (Exp 1) and voice (Exp 2) incongruence. To quantify short-term memory, an audiovisual verbal serial recall (avVSR) task was developed where an embodied conversational agent (ECA) was animated to speak a digit sequence, which participants had to remember. The results showed no effect of the display devices on the proportion of correctly recalled digits overall, although subjective evaluations showed a higher sense of presence in the HMD condition. For the extreme conditions of angle incongruence in the computer monitor presentation, the proportion of correctly recalled digits increased marginally, presumably due to raised attention, but the effect size was negligible. Response times were not affected by incongruences in either display device across both experiments. These findings suggest that at least for the conditions studied here, the avVSR task is robust against angle and voice audiovisual incongruences in both HMD and computer monitor displays.

@article{Ermert2025,

doi = {10.1371/journal.pone.0330693},

author = {Ermert, Cosima A. AND Yadav, Manuj AND Ehret, Jonathan AND

Mohanathasan, Chinthusa AND Bönsch, Andrea AND Kuhlen, Torsten W. AND

Schlittmeier, Sabine J. AND Fels, Janina},

journal = {PLOS ONE},

publisher = {Public Library of Science},

title = {Audiovisual angle and voice incongruence do not affect

audiovisual verbal short-term memory in virtual reality},

year = {2025},

month = {08},

volume = {20},

url = {https://doi.org/10.1371/journal.pone.0330693},

pages = {1-23},

number = {8},

}

Demo: A Latency-Optimized LLM-based Multimodal Dialogue System for Embodied Conversational Agents in VR

Interactions with Embodied Conversational Agents (ECAs) are essential in many social Virtual Reality (VR) applications, highlighting the growing demand for free-flowing, context-aware conversations supported by low-latency, multimodal ECA responses. We introduce a modular, extensible framework powered by a Large Language Model (LLM), featuring streaming-based optimization techniques specially crafted for multimodal responses. Our system is capable of controlling self-behavior and task execution, in the form of moving through the Immersive Virtual Environment (IVE) directly controlled by the LLM, and is also capable of reacting to events in the IVE. In our study, our applied optimizations achieved a latency improvement of about 66% on average compared to having no optimizations.

@inproceedings{Kuehlem2025,

author = {K\"{u}hlem, Konstantin W. and Ehret, Jonathan and Kuhlen, Torsten W. and B\"{o}nsch, Andrea},

title = {A Latency-Optimized LLM-based Multimodal Dialogue System for Embodied Conversational Agents in VR},

year = {2025},

isbn = {9798400715082},

publisher = {Association for Computing Machinery},

doi = {10.1145/3717511.3749287},

abstract = {Interactions with Embodied Conversational Agents (ECAs) are essential in many social Virtual Reality (VR) applications, highlighting the growing demand for free-flowing, context-aware conversations supported by low-latency, multimodal ECA responses. We introduce a modular, extensible framework powered by a Large Language Model (LLM), featuring streaming-based optimization techniques specially crafted for multimodal responses. Our system is capable of controlling self-behavior and task execution, in the form of moving through the Immersive Virtual Environment (IVE) directly controlled by the LLM, and is also capable of reacting to events in the IVE. In our study, our applied optimizations achieved a latency improvement of about 66\% on average compared to having no optimizations.},

booktitle = {Proceedings of the 25th ACM International Conference on Intelligent Virtual Agents},

articleno = {49},

numpages = {3},

series = {IVA '25}

}

Exploring Gaze Dynamics: Initial Findings on the Role of Listening Bystanders in Conversational Interactions

This work-in-progress paper investigates how virtual listening bystanders influence participants’ gaze behavior and their perception of turn-taking during scripted conversations with embodied conversational agents (ECAs). 25 participants interacted with five ECAs – two speakers and three bystanders – across three conditions: no bystanders, bystanders exhibiting random gazing behavior, and social bystanders engaging in mutual gaze and backchanneling. Participants either observed the conversation or actively participated as speakers by reciting prompted sentences. The results indicated that bystanders reduced the participants’ attention to speakers, hindering their ability to anticipate turn changes and resulting in longer delays in shifting their gaze to the new speaker after an ECA yielded the turn. Random gazing bystanders were particularly noted for obscuring conversational flow. These findings underscore the challenges of designing effective and natural conversational environments, highlighting the need for careful consideration of ECA behaviors to enhance user engagement.

@INPROCEEDINGS{Ehret2025,

author={Ehret, Jonathan and Dasbach, Valentin and Hartmann, Jan-Nikjas and

Fels, Janina and Kuhlen, Torsten W. and Bönsch, Andrea},

booktitle={2025 IEEE Conference on Virtual Reality and 3D User Interfaces

Abstracts and Workshops (VRW)},

title={Exploring Gaze Dynamics: Initial Findings on the Role of Listening

Bystanders in Conversational Interactions},

year={2025},

volume={},

number={},

pages={748-752},

doi={10.1109/VRW66409.2025.00151}}

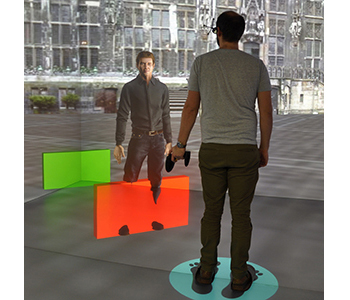

Wayfinding in Immersive Virtual Environments as Social Activity Supported by Virtual Agents

Effective navigation and interaction within immersive virtual environments rely on thorough scene exploration. Therefore, wayfinding is essential, assisting users in comprehending their surroundings, planning routes, and making informed decisions. Based on real-life observations, wayfinding is, thereby, not only a cognitive process but also a social activity profoundly influenced by the presence and behaviors of others. In virtual environments, these 'others' are virtual agents (VAs), defined as anthropomorphic computer-controlled characters, who enliven the environment and can serve as background characters or direct interaction partners. However, little research has been done to explore how to efficiently use VAs as social wayfinding support. In this paper, we aim to assess and contrast user experience, user comfort, and the acquisition of scene knowledge through a between-subjects study involving n = 60 participants across three distinct wayfinding conditions in one slightly populated urban environment: (i) unsupported wayfinding, (ii) strong social wayfinding using a virtual supporter who incorporates guiding and accompanying elements while directly impacting the participants' wayfinding decisions, and (iii) weak social wayfinding using flows of VAs that subtly influence the participants' wayfinding decisions by their locomotion behavior. Our work is the first to compare the impact of VAs' behavior in virtual reality on users' scene exploration, including spatial awareness, scene comprehension, and comfort. The results show the general utility of social wayfinding support, while underscoring the superiority of the strong type. Nevertheless, further exploration of weak social wayfinding as a promising technique is needed. Thus, our work contributes to the enhancement of VAs as advanced user interfaces, increasing user acceptance and usability.

@article{Boensch2024,

title={Wayfinding in Immersive Virtual Environments as Social Activity Supported by Virtual Agents},

author={B{\"o}nsch, Andrea and Ehret, Jonathan and Rupp, Daniel and Kuhlen, Torsten W.},

journal={Frontiers in Virtual Reality},

volume={4},

year={2024},

pages={1334795},

publisher={Frontiers},

doi={10.3389/frvir.2023.1334795}

}

A Lecturer’s Voice Quality and its Effect on Memory, Listening Effort, and Perception in a VR Environment

Many lecturers develop voice problems, such as hoarseness. Nevertheless, research on how voice quality influences listeners’ perception, comprehension, and retention of spoken language is limited to a small number of audio-only experiments. We aimed to address this gap by using audio-visual virtual reality (VR) to investigate the impact of a lecturer’s hoarseness on university students’ heard text recall, listening effort, and listening impression. Fifty participants were immersed in a virtual seminar room, where they engaged in a Dual-Task Paradigm. They listened to narratives presented by a virtual female professor, who spoke in either a typical or hoarse voice. Simultaneously, participants performed a secondary task. Results revealed significantly prolonged secondary-task response times with the hoarse voice compared to the typical voice, indicating increased listening effort. Subjectively, participants rated the hoarse voice as more annoying, effortful to listen to, and impeding for their cognitive performance. No effect of voice quality was found on heard text recall, suggesting that, while hoarseness may compromise certain aspects of spoken language processing, this might not necessarily result in reduced information retention. In summary, our findings underscore the importance of promoting vocal health among lecturers, which may contribute to enhanced listening conditions in learning spaces.

@article{Schiller2024,

author = {Isabel S. Schiller and Carolin Breuer and Lukas Aspöck and

Jonathan Ehret and Andrea Bönsch and Torsten W. Kuhlen and Janina Fels and

Sabine J. Schlittmeier},

doi = {10.1038/s41598-024-63097-6},

issn = {2045-2322},

issue = {1},

journal = {Scientific Reports},

keywords = {Audio-visual language processing,Virtual reality,Voice

quality},

month = {5},

pages = {12407},

pmid = {38811832},

title = {A lecturer’s voice quality and its effect on memory, listening

effort, and perception in a VR environment},

volume = {14},

url = {https://www.nature.com/articles/s41598-024-63097-6},

year = {2024},

}

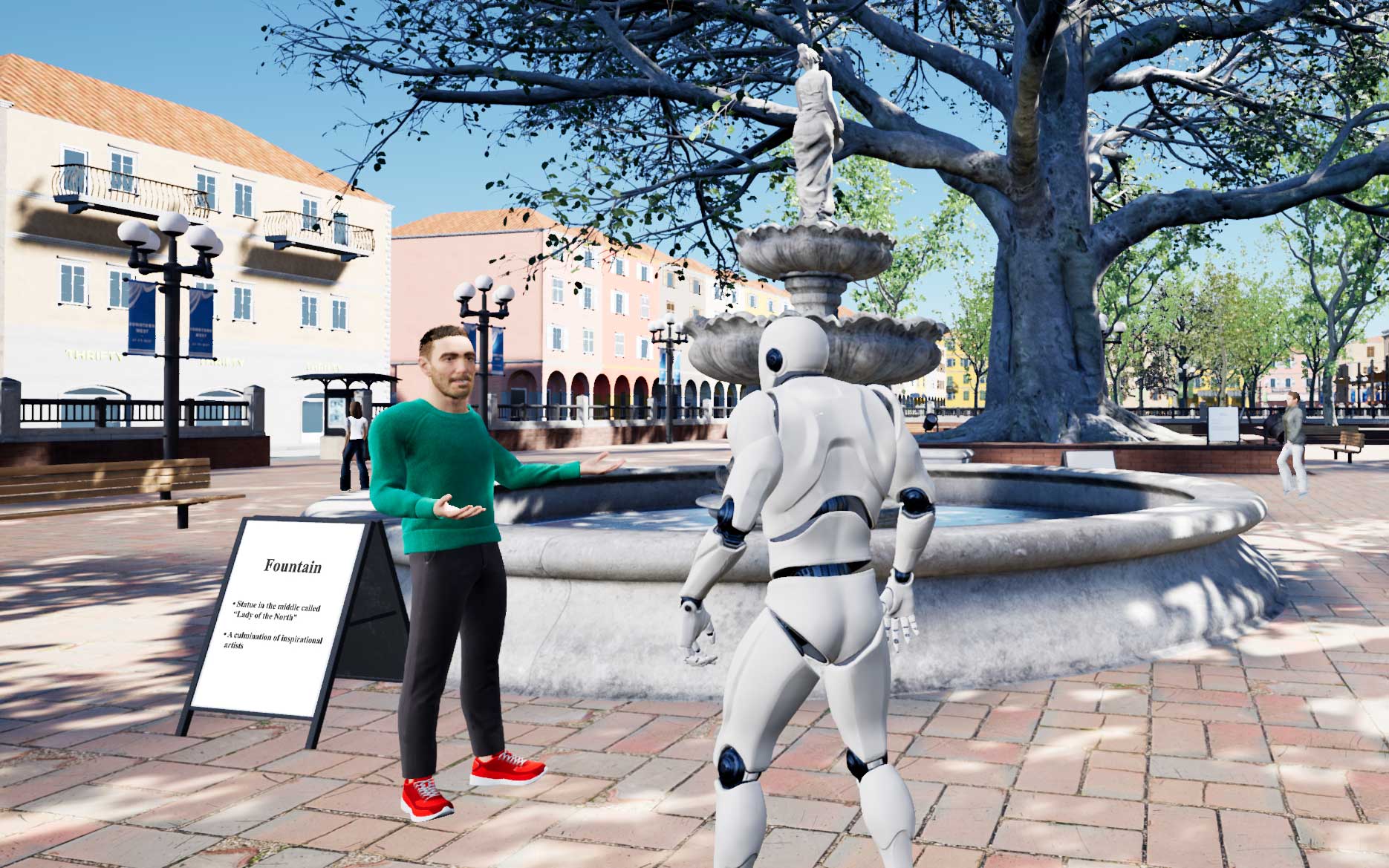

StudyFramework: Comfortably Setting up and Conducting Factorial-Design Studies Using the Unreal Engine

Setting up and conducting user studies is fundamental to virtual reality research. Yet, often these studies are developed from scratch, which is time-consuming and especially hard and error-prone for novice developers. In this paper, we introduce the StudyFramework, a framework specifically designed to streamline the setup and execution of factorial-design VR-based user studies within the Unreal Engine, significantly enhancing the overall process. We elucidate core concepts such as setup, randomization, the experimenter view, and logging. After utilizing our framework to set up and conduct their respective studies, 11 study developers provided valuable feedback through a structured questionnaire. This feedback, which was generally positive, highlighting its simplicity and usability, is discussed in detail.

@InProceedings{Ehret2024a,

author={Ehret, Jonathan and Bönsch, Andrea and Fels, Janina and

Schlittmeier, Sabine J. and Kuhlen, Torsten W.},

booktitle={2024 IEEE Conference on Virtual Reality and 3D User Interfaces

Abstracts and Workshops (VRW): Workshop "Open Access Tools and Libraries for

Virtual Reality"},

title={StudyFramework: Comfortably Setting up and Conducting

Factorial-Design Studies Using the Unreal Engine},

year={2024}

}

Audiovisual Coherence: Is Embodiment of Background Noise Sources a Necessity?

Exploring the synergy between visual and acoustic cues in virtual reality (VR) is crucial for elevating user engagement and perceived (social) presence. We present a study exploring the necessity and design impact of background sound source visualizations to guide the design of future soundscapes. To this end, we immersed n = 27 participants using a head-mounted display (HMD) within a virtual seminar room with six virtual peers and a virtual female professor. Participants engaged in a dual-task paradigm involving simultaneously listening to the professor and performing a secondary vibrotactile task, followed by recalling the heard speech content. We compared three types of background sound source visualizations in a within-subject design: no visualization, static visualization, and animated visualization. Participants’ subjective ratings indicate the importance of animated background sound source visualization for an optimal coherent audiovisual representation, particularly when embedding peer-emitted sounds. However, despite this subjective preference, audiovisual coherence did not affect participants’ performance in the dual-task paradigm measuring their listening effort.

@InProceedings{Ehret2024b,

author={Ehret, Jonathan and Bönsch, Andrea and Schiller, Isabel S. and

Breuer, Carolin and Aspöck, Lukas and Fels, Janina and Schlittmeier, Sabine

J. and Kuhlen, Torsten W.},

booktitle={2024 IEEE Conference on Virtual Reality and 3D User Interfaces

Abstracts and Workshops (VRW): "Workshop on Virtual Humans and Crowds in

Immersive Environments (VHCIE)"},

title={Audiovisual Coherence: Is Embodiment of Background Noise Sources a

Necessity?},

year={2024}

}

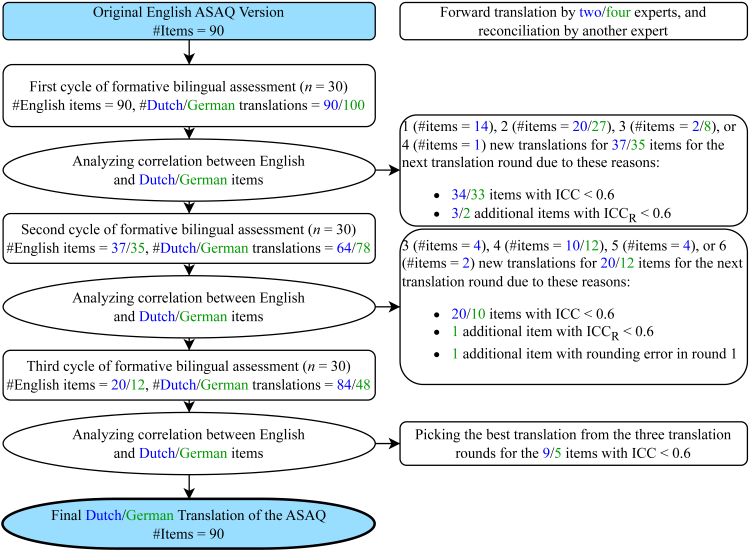

German and Dutch Translations of the Artificial-Social-Agent Questionnaire Instrument for Evaluating Human-Agent Interactions

Enabling the widespread utilization of the Artificial-Social-Agent (ASA) Questionnaire, a research instrument to comprehensively assess diverse ASA qualities while ensuring comparability, necessitates translations beyond the original English source language questionnaire. We thus present Dutch and German translations of the long and short versions of the ASA Questionnaire and describe the translation challenges we encountered. Summative assessments with 240 English-Dutch and 240 English-German bilingual participants show, on average, excellent correlations (Dutch ICC M = 0.82, SD = 0.07, range [0.58, 0.93]; German ICC M = 0.81, SD = 0.09, range [0.58, 0.94]) with the original long version on the construct and dimension level. Results for the short version show, on average, good correlations (Dutch ICC M = 0.65, SD = 0.12, range [0.39, 0.82]; German ICC M = 0.67, SD = 0.14, range [0.30, 0.91]). We hope these validated translations allow the Dutch and German-speaking populations to evaluate ASAs in their own language.

@InProceedings{Albers2024,

author = {Nele Albers and Andrea Bönsch and Jonathan Ehret and Boleslav A. Khodakov and Willem-Paul Brinkman},

booktitle = {ACM International Conference on Intelligent Virtual

Agents (IVA ’24)},

title = { German and Dutch Translations of the

Artificial-Social-Agent Questionnaire Instrument for Evaluating Human-Agent

Interactions},

year = {2024},

organization = {ACM},

pages = {4},

doi = {10.1145/3652988.3673928},

}

Who's next? Integrating Non-Verbal Turn-Taking Cues for Embodied Conversational Agents

Taking turns in a conversation is a delicate interplay of various signals, which we as humans can easily decipher. Embodied conversational agents (ECAs) communicating with humans should leverage this ability for smooth and enjoyable conversations. Extensive research has analyzed human turn-taking cues, and attempts have been made to predict turn-taking based on observed cues. These cues vary from prosodic, semantic, and syntactic modulation over adapted gesture and gaze behavior to actively used respiration. However, when generating such behavior for social robots or ECAs, often only single modalities were considered, e.g., gazing. We strive to design a comprehensive system that produces cues for all non-verbal modalities: gestures, gaze, and breathing. The system provides valuable cues without requiring speech content adaptation. We evaluated our system in a VR-based user study with N = 32 participants executing two subsequent tasks. First, we asked them to listen to two ECAs taking turns in several conversations. Second, participants engaged in taking turns with one of the ECAs directly. We examined the system’s usability and the perceived social presence of the ECAs' turn-taking behavior, both with respect to each individual non-verbal modality and their interplay. While we found effects of gesture manipulation in interactions with the ECAs, no effects on social presence were found.

This work is licensed under a Creative Commons Attribution International 4.0 License

@InProceedings{Ehret2023,

author = {Jonathan Ehret and Andrea Bönsch and Patrick Nossol and Cosima A. Ermert and Chinthusa Mohanathasan and Sabine J. Schlittmeier and Janina Fels and Torsten W. Kuhlen},

booktitle = {ACM International Conference on Intelligent Virtual Agents (IVA ’23)},

title = {Who's next? Integrating Non-Verbal Turn-Taking Cues for Embodied Conversational Agents},

year = {2023},

organization = {ACM},

pages = {8},

doi = {10.1145/3570945.3607312},

}

Voice Quality and its Effects on University Students' Listening Effort in a Virtual Seminar Room

A teacher’s poor voice quality may increase listening effort in pupils, but it is unclear whether this effect persists in adult listeners. Thus, the goal of this study is to examine the impact of vocal hoarseness on university students' listening effort in a virtual seminar room. An audio-visual immersive virtual reality environment is utilized to simulate a typical seminar room with common background sounds and fellow students represented as wooden mannequins. Participants wear a head-mounted display and are equipped with two controllers to engage in a dual-task paradigm. The primary task is to listen to a virtual professor reading short texts and retain relevant content information to be recalled later. The texts are presented either in a normal or an imitated hoarse voice. In parallel, participants perform a secondary task which is responding to tactile vibration patterns via the controllers. It is hypothesized that listening to the hoarse voice induces listening effort, resulting in more cognitive resources needed for primary task performance while secondary task performance is hindered. Results are presented and discussed in light of students’ cognitive performance and listening challenges in higher education learning environments.

@INPROCEEDINGS{Schiller:977871,

author = {Schiller, Isabel Sarah and Aspöck, Lukas and Breuer,

Carolin and Ehret, Jonathan and Bönsch, Andrea and Fels,

Janina and Kuhlen, Torsten and Schlittmeier, Sabine Janina},

title = {{V}oice Quality and its Effects on University

Students' Listening Effort in a Virtual Seminar Room},

year = {2023},

month = {Dec},

date = {2023-12-04},

organization = {Acoustics 2023, Sydney (Australia), 4

Dec 2023 - 8 Dec 2023},

doi = {10.1121/10.0022982}

}

Towards Plausible Cognitive Research in Virtual Environments: The Effect of Audiovisual Cues on Short-Term Memory in Two-Talker Conversations

When three or more people are involved in a conversation, often one conversational partner listens to what the others are saying and has to remember the conversational content. The setups in cognitive-psychological experiments often differ substantially from everyday listening situations by neglecting such audiovisual cues. The presence of speech-related audiovisual cues, such as the spatial position, and the appearance or non-verbal behavior of the conversing talkers may influence the listener's memory and comprehension of conversational content. In our project, we provide first insights into the contribution of acoustic and visual cues on short-term memory, and (social) presence. Analyses have shown that the memory performance varies with increasingly more plausible audiovisual characteristics. Furthermore, we have conducted a series of experiments regarding the influence of the visual reproduction medium (virtual reality vs. traditional computer screens) and spatial or content audio-visual mismatch on auditory short-term memory performance. Adding virtual embodiments to the talkers allowed us to conduct experiments on the influence of the fidelity of co-verbal gestures and turn-taking signals. Thus, we are able to provide a more plausible paradigm for investigating memory for two-talker conversations within an interactive audiovisual virtual reality environment.

@InProceedings{Ehret2023Audictive,

author = {Jonathan Ehret and Cosima A. Ermert and Chinthusa Mohanathasan and Janina Fels and Torsten W. Kuhlen and Sabine J. Schlittmeier},

booktitle = {Proceedings of the 1st AUDICTIVE Conference},

title = {Towards Plausible Cognitive Research in Virtual

Environments: The Effect of Audiovisual Cues on Short-Term Memory in

Two-Talker Conversations},

year = {2023},

pages = {68-72},

doi = {10.18154/RWTH-2023-08409},

}

Poster: Memory and Listening Effort in Two-Talker Conversations: Does Face Visibility Help Us Remember?

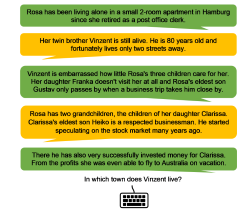

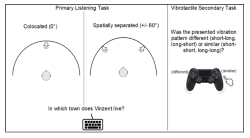

Listening to and remembering conversational content is a highly demanding task that requires the interplay of auditory processes and several cognitive functions. In face-to-face conversations, it is quite impossible that two talkers’ audio signals originate from the same spatial position and that their faces are hidden from view. The availability of such audiovisual cues when listening potentially influences memory and comprehension of the heard content. In the present study, we investigated the effect of static visual faces of two talkers and cognitive functions on the listener’s short-term memory of conversations and listening effort. Participants performed a dual-task paradigm including a primary listening task, where a conversation between two spatially separated talkers (+/- 60°) with static faces was presented. In parallel, a vibrotactile task was administered, independently of both visual and auditory modalities. To investigate the possibility of person-specific factors influencing short-term memory, we assessed additional cognitive functions like working memory. We discuss our results in terms of the role that visual information and cognitive functions play in short-term memory of conversations.

@InProceedings{Mohanathasan2023ESCoP,

author = {Chinthusa Mohanathasan and Jonathan Ehret and Cosima A. Ermert and Janina Fels and Torsten Wolfgang Kuhlen and Sabine J. Schlittmeier},

booktitle = {23. Conference of the European Society for Cognitive Psychology, Porto, Portugal, ESCoP 2023},

title = {Memory and Listening Effort in Two-Talker Conversations: Does Face Visibility Help Us Remember?},

year = {2023},

}

Towards More Realistic Listening Research in Virtual Environments: The Effect of Spatial Separation of Two Talkers in Conversations on Memory and Listening Effort

Conversations between three or more people often include phases in which one conversational partner is the listener while the others are conversing. In face-to-face conversations, it is quite unlikely to have two talkers’ audio signals come from the same spatial location, yet monaural-diotic sound presentation is often realized in cognitive-psychological experiments. However, the availability of spatial cues probably influences the cognitive processing of heard conversational content. In the present study we test this assumption by investigating the effect of spatial separation of conversing talkers on the listener’s short-term memory and listening effort. To this end, participants were administered a dual-task paradigm. In the primary task, participants listened to a conversation between two alternating talkers in a non-noisy setting and answered questions on the conversational content after listening. The talkers’ audio signals were presented at a distance of 2.5 m from the listener either spatially separated (+/- 60°) or co-located (0°; within-subject). As a secondary task, participants worked in parallel to the listening task on a vibrotactile stimulation task, which is detached from auditory and visual modalities. The results are reported and discussed in particular regarding future listening experiments in virtual environments.

@InProceedings{Mohanathasan2023DAGA,

author = {Chinthusa Mohanathasan and Jonathan Ehret and Cosima A. Ermert and Janina Fels and Torsten Wolfgang Kuhlen and Sabine J. Schlittmeier},

booktitle = {49. Jahrestagung für Akustik, Hamburg, Germany, DAGA 2023},

title = {Towards More Realistic Listening Research in Virtual

Environments: The Effect of Spatial Separation of Two Talkers in

Conversations on Memory and Listening Effort},

year = {2023},

pages = {1425-1428},

doi = { 10.18154/RWTH-2023-05116},

}

Poster: Whom Do You Follow? Pedestrian Flows Constraining the User’s Navigation during Scene Exploration

In this work-in-progress, we strive to combine two wayfinding techniques supporting users in gaining scene knowledge, namely (i) the River Analogy, in which users are considered as boats automatically floating down predefined rivers, e.g., streets in an urban scene, and (ii) virtual pedestrian flows as social cues indirectly guiding users through the scene. In our combined approach, the pedestrian flows function as rivers. To navigate through the scene, users leash themselves to a pedestrian of choice, considered as a boat, and are dragged along the flow towards an area of interest. Upon arrival, users can detach themselves to freely explore the site without navigational constraints. We briefly outline our approach and discuss the results of an initial study focusing on various leashing visualizations.

@InProceedings{Boensch2023b,

author = {Andrea Bönsch and Lukas B. Zimmermann and Jonathan Ehret and Torsten W. Kuhlen},

booktitle = {ACM International Conference on Intelligent Virtual Agents (IVA ’23)},

title = {Whom Do You Follow? Pedestrian Flows Constraining the User’s Navigation during Scene Exploration},

year = {2023},

organization = {ACM},

pages = {3},

doi = {10.1145/3570945.3607350},

}

Poster: Where Do They Go? Overhearing Conversing Pedestrian Groups during Scene Exploration

On entering an unknown immersive virtual environment, a user’s first task is gaining knowledge about the respective scene, termed scene exploration. While many techniques for aided scene exploration exist, such as virtual guides, or maps, unaided wayfinding through pedestrians-as-cues is still in its infancy. We contribute to this research by indirectly guiding users through pedestrian groups conversing about their target location. A user who overhears the conversation without being a direct addressee can consciously decide whether to follow the group to reach an unseen point of interest. We outline our approach and give insights into the results of a first feasibility study in which we compared our new approach to non-talkative groups and groups conversing about random topics.

@InProceedings{Boensch2023a,

author = {Andrea Bönsch and Till Sittart and Jonathan Ehret and Torsten W. Kuhlen},

booktitle = {ACM International Conference on Intelligent Virtual Agents (IVA ’23)},

title = {Where Do They Go? Overhearing Conversing Pedestrian Groups during Scene Exploration},

year = {2023},

pages = {3},

publisher = {ACM},

doi = {10.1145/3570945.3607351},

}

AuViST - An Audio-Visual Speech and Text Database for the Heard-Text-Recall Paradigm

The Audio-Visual Speech and Text (AuViST) database provides additional material for the heard-text-recall (HTR) paradigm by Schlittmeier and colleagues. German audio recordings in a male and a female voice as well as matching face tracking data are provided for all texts.

Poster: Memory and Listening Effort in Conversations: The Role of Spatial Cues and Cognitive Functions

Conversations involving three or more people often include phases where one conversational partner listens to what the others are saying and has to remember the conversational content. It is possible that the presence of speech-related auditory information, such as different spatial positions of conversing talkers, influences a listener’s memory and comprehension of conversational content. However, in cognitive-psychological experiments, talkers’ audio signals are often presented diotically, i.e., identically to both ears as mono signals. This does not reflect face-to-face conversations where two talkers’ audio signals never come from the same spatial location. Therefore, in the present study, we examine how the spatial separation of two conversing talkers affects a listener’s short-term memory of heard information and listening effort. To accomplish this, participants were administered a dual-task paradigm. In the primary task, participants listened to a conversation between a female and a male talker and then responded to content-related questions. The talkers’ audio signals were presented via headphones at a distance of 2.5 m from the listener either spatially separated (+/- 60°) or co-located (0°). In parallel to this listening task, participants performed a vibrotactile pattern recognition task as a secondary task, which is independent of both auditory and visual modalities. In addition, we measured participants’ working memory capacity, selective visual attention, and mental speed to control for listener-specific characteristics that may affect a listener’s memory performance. We discuss the extent to which spatial cues affect higher-level auditory cognition, specifically short-term memory of conversational content.

@InProceedings{Mohanathasan2023TeaP,

author = {Chinthusa Mohanathasan and Jonathan Ehret and Cosima A. Ermert and Janina Fels and Torsten Wolfgang Kuhlen and Sabine J. Schlittmeier},

booktitle = {Abstracts of the 65th TeaP: Tagung experimentell arbeitender Psycholog:innen, Conference of Experimental Psychologists},

title = {Memory and Listening Effort in Conversations: The Role of Spatial Cues and Cognitive Functions},

year = {2023},

pages = {252-252},

}

Audio-Visual Content Mismatches in the Serial Recall Paradigm

In many everyday scenarios, short-term memory is crucial for human interaction, e.g., when remembering a shopping list or following a conversation. A well-established paradigm to investigate short-term memory performance is the serial recall. Here, participants are presented with a list of digits in random order and are asked to memorize the order in which the digits were presented. So far, research in cognitive psychology has mostly focused on the effect of auditory distractors on the recall of visually presented items. The influence of visual distractors on the recall of auditory items has mostly been ignored. In the scope of this talk, we designed an audio-visual serial recall task. Along with the auditory presentation of the to-be-remembered digits, participants saw the face of a virtual human, moving the lips according to the spoken words. However, the gender of the face did not always match the gender of the voice heard, hence introducing an audio-visual content mismatch. The results give further insights into the interplay of visual and auditory stimuli in serial recall experiments.

@InProceedings{Ermert2023DAGA,

author = {Cosima A. Ermert and Jonathan Ehret and Torsten Wolfgang Kuhlen and Chinthusa Mohanathasan and Sabine J. Schlittmeier and Janina Fels},

booktitle = {49. Jahrestagung für Akustik, Hamburg, Germany, DAGA 2023},

title = {Audio-Visual Content Mismatches in the Serial Recall Paradigm},

year = {2023},

pages = {1429-1430},

}

Poster: Hoarseness among university professors and how it can influence students’ listening impression: an audio-visual immersive VR study

For university students, following a lecture can be challenging when room acoustic conditions are poor or when their professor suffers from a voice disorder. Related to the high vocal demands of teaching, university professors develop voice disorders quite frequently. The key symptom is hoarseness. The aim of this study is to investigate the effect of hoarseness on university students’ subjective listening effort and listening impression using audio-visual immersive virtual reality (VR) including a real-time room simulation of a typical seminar room. Equipped with a head-mounted display, participants are immersed in the virtual seminar room, with typical binaural background sounds, where they perform a listening task. This task involves comprehending and recalling information from text, read aloud by a female virtual professor positioned in front of the seminar room. Texts are presented in two experimental blocks, one of them read aloud in a normal (modal) voice, the other one in a hoarse voice. After each block, participants fill out a questionnaire to evaluate their perceived listening effort and overall listening impression under the respective voice quality, as well as the human-likeness of and preferences towards the virtual professor. Results are presented and discussed regarding voice quality design for virtual tutors and potential implications for students’ motivation and performance in academic learning spaces.

@InProceedings{Schiller2023Audictive,

author = {Isabel S. Schiller and Lukas Aspöck and Carolin Breuer and Jonathan Ehret and Andrea Bönsch},

booktitle = {Proceedings of the 1st AUDICTIVE Conference},

title = {Hoarseness among university professors and how it can influence students’ listening impression: an audio-visual immersive VR study},

year = {2023},

pages = {134-137},

doi = {10.18154/RWTH-2023-08885},

}

Does a Talker's Voice Quality Affect University Students' Listening Effort in a Virtual Seminar Room?

A university professor's voice quality can either facilitate or impede effective listening in students. In this study, we investigated the effect of hoarseness on university students’ listening effort in seminar rooms using audio-visual virtual reality (VR). During the experiment, participants were immersed in a virtual seminar room with typical background sounds and performed a dual-task paradigm involving listening to and answering questions about short stories, narrated by a female virtual professor, while responding to tactile vibration patterns. In a within-subject design, the professor's voice quality was varied between normal and hoarse. Listening effort was assessed based on performance and response time measures in the dual-task paradigm and participants’ subjective evaluation. It was hypothesized that listening to a hoarse voice leads to higher listening effort. While the analysis is still ongoing, our preliminary results show that listening to the hoarse voice significantly increased perceived listening effort. In contrast, the effect of voice quality was not significant in the dual-task paradigm. These findings indicate that, even if students' performance remains unchanged, listening to hoarse university professors may still require more effort.

@INBOOK{Schiller:977866,

author = {Schiller, Isabel Sarah and Bönsch, Andrea and Ehret, Jonathan and Breuer, Carolin and Aspöck, Lukas},

title = {{D}oes a talker's voice quality affect university students' listening effort in a virtual seminar room?},

address = {Turin},

publisher = {European Acoustics Association},

pages = {2813-2816},

year = {2024},

booktitle = {Proceedings of the 10th Convention of the European Acoustics Association : Forum Acusticum 2023. Politecnico di Torino, Torino, Italy, September 11 - 15, 2023 / Editors: Arianna Astolfi, Francesco Asdrubali, Louena Shtrepi},

month = {Sep},

date = {2023-09-11},

organization = {10. Convention of the European Acoustics Association : Forum Acusticum, Turin (Italy), 11 Sep 2023 - 15 Sep 2023},

doi = {10.61782/fa.2023.0320},

}

Verbal Interactions with Embodied Conversational Agents

Embedding virtual humans into virtual reality (VR) applications can fulfill diverse needs. These so-called embodied conversational agents (ECAs) can simply enliven the virtual environments, act for example as training partners, tutors, or therapists, or serve as advanced (emotional) user interfaces to control immersive systems. The latter case is of special interest since we as human users are particularly good at interpreting other humans. ECAs can enhance their verbal communication with non-verbal behavior and thereby make communication more efficient. For example, backchannels, like nodding or signaling not understanding, can be used to give feedback while a user is speaking. Furthermore, gestures, gaze, posture, proxemics, and many more non-verbal behaviors can be applied. Additionally, turn-taking can be streamlined when the ECA understands when to take over the turn and signals willingness to yield it once done. While many of these aspects are already under investigation from very different disciplines, operationalizing those into versatile, virtually embodied human-computer interfaces remains an open challenge.

To this end, I conducted several studies investigating acoustical effects of ECAs' speech, both with regard to the auralization in the virtual environment and the speech signals used. Furthermore, I want to find guidelines for expressing both turn-taking and various backchannels that make interactions with such advanced embodied interfaces more efficient and pleasant, both when the ECA is speaking and during listening. Additionally, measuring social presence (i.e., the feeling of being there and interacting with a “real” person) is an important instrument for this kind of research, since I want to facilitate exactly those subconscious processes of understanding other humans, which we as humans are particularly good at. Therefore, I want to investigate objective measures for social presence.

@inproceedings{Ehret2022a,

author = {Ehret, Jonathan},

booktitle = {Doctoral Consortium at the 22nd ACM International Conference on Intelligent Virtual Agents},

title = {{Doctoral Consortium : Verbal Interactions with Embodied Conversational Agents}},

year = {2022}

}

Poster: Measuring Listening Effort in Adverse Listening Conditions: Testing Two Dual Task Paradigms for Upcoming Audiovisual Virtual Reality Experiments

Listening to and remembering the content of conversations is a highly demanding task from a cognitive-psychological perspective. Particularly in adverse listening conditions, cognitive resources available for higher-level processing of speech are reduced since increased listening effort consumes more of the overall available cognitive resources. Applying audiovisual Virtual Reality (VR) environments to listening research could be highly beneficial for exploring cognitive performance for overheard content. In this study, we therefore evaluated two (secondary) tasks concerning their suitability for measuring cognitive spare capacity as an indicator of listening effort in audiovisual VR environments. In two experiments, participants were administered a dual-task paradigm including a listening (primary) task in which a conversation between two talkers is presented, and an unrelated secondary task each. Both experiments were carried out without additional background noise and under continuous noise. We discuss our results in terms of guidance for future experimental studies, especially in audiovisual VR environments.

@InProceedings{Mohanathasan2022ESCoP,

author = {Chinthusa Mohanathasan and Jonathan Ehret and Cosima A. Ermert and Janina Fels and Torsten Wolfgang Kuhlen and Sabine J. Schlittmeier},

booktitle = {22. Conference of the European Society for Cognitive Psychology, Lille, France, ESCoP},

title = {Measuring Listening Effort in Adverse Listening Conditions: Testing Two Dual Task Paradigms for Upcoming Audiovisual Virtual Reality Experiments},

year = {2022},

}

Late-Breaking Report: Natural Turn-Taking with Embodied Conversational Agents

Adding embodied conversational agents (ECAs) to immersive virtual environments (IVEs) becomes relevant in various application scenarios, for example, conversational systems. For successful interactions with these ECAs, they have to behave naturally, i.e. in the way a user would expect a real human to behave. Teaming up with acousticians and psychologists, we strive to explore turn-taking in VR-based interactions between either two ECAs or an ECA and a human user.

Late-Breaking Report: An Embodied Conversational Agent Supporting Scene Exploration by Switching between Guiding and Accompanying

In this late-breaking report, we first motivate the requirement of an embodied conversational agent (ECA) who combines characteristics of a virtual tour guide and a knowledgeable companion in order to allow users an interactive and adaptable, yet structured, exploration of an unknown immersive, architectural environment. Second, we roughly outline our proposed ECA’s behavioral design followed by a teaser on the planned user study.

Do Prosody and Embodiment Influence the Perceived Naturalness of Conversational Agents' Speech?

presented at ACM Symposium on Applied Perception (SAP)

For conversational agents’ speech, all possible sentences have to be either prerecorded by voice actors or the required utterances can be synthesized. While synthesizing speech is more flexible and economic in production, it also potentially reduces the perceived naturalness of the agents amongst others due to mistakes at various linguistic levels. In our paper, we are interested in the impact of adequate and inadequate prosody, here particularly in terms of accent placement, on the perceived naturalness and aliveness of the agents. We compare (i) inadequate prosody, as generated by off-the-shelf text-to-speech (TTS) engines with synthetic output, (ii) the same inadequate prosody imitated by trained human speakers and (iii) adequate prosody produced by those speakers. The speech was presented either as audio-only or by embodied, anthropomorphic agents, to investigate the potential masking effect by a simultaneous visual representation of those virtual agents. To this end, we conducted an online study with 40 participants listening to four different dialogues each presented in the three Speech levels and the two Embodiment levels. Results confirmed that adequate prosody in human speech is perceived as more natural (and the agents are perceived as more alive) than inadequate prosody in both human (ii) and synthetic speech (i). Thus, it is not sufficient to just use a human voice for an agent’s speech to be perceived as natural - it is decisive whether the prosodic realisation is adequate or not. Furthermore, and surprisingly, we found no masking effect by speaker embodiment, since neither a human voice with inadequate prosody nor a synthetic voice was judged as more natural, when a virtual agent was visible compared to the audio-only condition. On the contrary, the human voice was even judged as less “alive” when accompanied by a virtual agent. 
In sum, our results emphasize on the one hand the importance of adequate prosody for perceived naturalness, especially in terms of accents being placed on important words in the phrase, while showing on the other hand that the embodiment of virtual agents plays a minor role in naturalness ratings of voices.

@article{Ehret2021a,

author = {Ehret, Jonathan and B\"{o}nsch, Andrea and Asp\"{o}ck, Lukas and R\"{o}hr, Christine T. and Baumann, Stefan and Grice, Martine and Fels, Janina and Kuhlen, Torsten W.},

title = {Do Prosody and Embodiment Influence the Perceived Naturalness of Conversational Agents’ Speech?},

journal = {ACM transactions on applied perception},

year = {2021},

issue_date = {October 2021},

publisher = {Association for Computing Machinery},

address = {New York, NY, USA},

volume = {18},

number = {4},

articleno = {21},

issn = {1544-3558},

url = {https://doi.org/10.1145/3486580},

doi = {10.1145/3486580},

numpages = {15},

keywords = {speech, audio, accentuation, prosody, text-to-speech, Embodied conversational agents (ECAs), virtual acoustics, embodiment}

}

Being Guided or Having Exploratory Freedom: User Preferences of a Virtual Agent’s Behavior in a Museum

A virtual guide in an immersive virtual environment allows users a structured experience without missing critical information. However, although being in an interactive medium, the user is only a passive listener, while the embodied conversational agent (ECA) fulfills the active roles of wayfinding and conveying knowledge. Thus, we investigated for the use case of a virtual museum, whether users prefer a virtual guide or a free exploration accompanied by an ECA who imparts the same information compared to the guide. Results of a small within-subjects study with a head-mounted display are given and discussed, resulting in the idea of combining benefits of both conditions for a higher user acceptance. Furthermore, the study indicated the feasibility of the carefully designed scene and ECA’s appearance.

We also submitted a GALA video entitled "An Introduction to the World of Internet Memes by Curator Kate: Guiding or Accompanying Visitors?" by D. Hashem, A. Bönsch, J. Ehret, and T.W. Kuhlen, showcasing our application.

IVA 2021 GALA Audience Award!

@inproceedings{Boensch2021b,

author = {B\"{o}nsch, Andrea and Hashem, David and Ehret, Jonathan and Kuhlen, Torsten W.},

title = {{Being Guided or Having Exploratory Freedom: User Preferences of a Virtual Agent's Behavior in a Museum}},

year = {2021},

isbn = {9781450386197},

publisher = {Association for Computing Machinery},

address = {New York, NY, USA},

url = {https://doi.org/10.1145/3472306.3478339},

doi = {10.1145/3472306.3478339},

booktitle = {{Proceedings of the 21st ACM International Conference on Intelligent Virtual Agents}},

pages = {33–40},

numpages = {8},

keywords = {virtual agents, enjoyment, guiding, virtual reality, free exploration, museum, embodied conversational agents},

location = {Virtual Event, Japan},

series = {IVA '21}

}

Poster: Indirect User Guidance by Pedestrians in Virtual Environments

Scene exploration allows users to acquire scene knowledge on entering an unknown virtual environment. To support users in this endeavor, aided wayfinding strategies intentionally influence the user’s wayfinding decisions through, e.g., signs or virtual guides.

Our focus, however, is an unaided wayfinding strategy, in which we use virtual pedestrians as social cues to indirectly and subtly guide users through virtual environments during scene exploration. We briefly outline the required pedestrians’ behavior and the results of a first feasibility study indicating the potential of the general approach.

@inproceedings{Boensch2021a,

booktitle = {ICAT-EGVE 2021 - International Conference on Artificial Reality and Telexistence and Eurographics Symposium on Virtual Environments - Posters and Demos},

editor = {Maiero, Jens and Weier, Martin and Zielasko, Daniel},

title = {{Indirect User Guidance by Pedestrians in Virtual Environments}},

author = {Bönsch, Andrea and Güths, Katharina and Ehret, Jonathan and Kuhlen, Torsten W.},

year = {2021},

publisher = {The Eurographics Association},

ISSN = {1727-530X},

ISBN = {978-3-03868-159-5},

DOI = {10.2312/egve.20211336}

}

Poster: Prosodic and Visual Naturalness of Dialogs Presented by Conversational Virtual Agents

Conversational virtual agents, with and without visual representation, are becoming more present in our daily life, e.g. as intelligent virtual assistants on smart devices. To investigate the naturalness of both the speech and the nonverbal behavior of embodied conversational agents (ECAs), an interdisciplinary research group was initiated, consisting of phoneticians, computer scientists, and acoustic engineers. For a web-based pilot experiment, simple dialogs between a male and a female speaker were created, with three prosodic conditions. For condition 1, the dialog was created synthetically using a text-to-speech engine. In the other two prosodic conditions (2,3) human speakers were recorded with 2) the erroneous accentuation of the text-to-speech synthesis of condition 1, and 3) with a natural accentuation. Face tracking data of the recorded speakers was additionally obtained and applied as input data for the facial animation of the ECAs. Based on the recorded data, auralizations in a virtual acoustic environment were generated and presented as binaural signals to the participants either in combination with the visual representation of the ECAs as short videos or without any visual feedback. A preliminary evaluation of the participants’ responses to questions related to naturalness, presence, and preference is presented in this work.

@inproceedings{Aspoeck2021,

author = {Asp\"{o}ck, Lukas and Ehret, Jonathan and Baumann, Stefan and B\"{o}nsch, Andrea and R\"{o}hr, Christine T. and Grice, Martine and Kuhlen, Torsten W. and Fels, Janina},

title = {Prosodic and Visual Naturalness of Dialogs Presented by Conversational Virtual Agents},

year = {2021},

note = {Hybride Konferenz},

month = {Aug},

date = {2021-08-15},

organization = {47. Jahrestagung für Akustik, Wien (Austria), 15 Aug 2021 - 18 Aug 2021},

url = {https://vr.rwth-aachen.de/publication/02207/}

}

Listening to, and remembering conversations between two talkers: Cognitive research using embodied conversational agents in audiovisual virtual environments

In the AUDICTIVE project about listening to, and remembering the content of conversations between two talkers we aim to investigate the combined effects of potentially performance-relevant but scarcely addressed audiovisual cues on memory and comprehension for running speech. Our overarching methodological approach is to develop an audiovisual Virtual Reality testing environment that includes embodied Virtual Agents (VAs). This testing environment will be used in a series of experiments to research the basic aspects of audiovisual cognitive performance in a close(r)-to-real-life setting. We aim to provide insights into the contribution of acoustical and visual cues on the cognitive performance, user experience, and presence as well as quality and vibrancy of VR applications, especially those with a social interaction focus. We will study the effects of variations in the audiovisual ’realism’ of virtual environments on memory and comprehension of multi-talker conversations and investigate how fidelity characteristics in audiovisual virtual environments contribute to the realism and liveliness of social VR scenarios with embodied VAs. Additionally, we will study the suitability of text memory, comprehension measures, and subjective judgments to assess the quality of experience of a VR environment. First steps of the project with respect to the general idea of AUDICTIVE are presented.

@inproceedings{Fels2021,

author = {Fels, Janina and Ermert, Cosima A. and Ehret, Jonathan and Mohanathasan, Chinthusa and B\"{o}nsch, Andrea and Kuhlen, Torsten W. and Schlittmeier, Sabine J.},

title = {Listening to, and Remembering Conversations between Two Talkers: Cognitive Research using Embodied Conversational Agents in Audiovisual Virtual Environments},

address = {Berlin},

publisher = {Deutsche Gesellschaft für Akustik e.V. (DEGA)},

pages = {1328-1331},

year = {2021},

booktitle = {[Fortschritte der Akustik - DAGA 2021, DAGA 2021, 2021-08-15 - 2021-08-18, Wien, Austria]},

month = {Aug},

date = {2021-08-15},

organization = {47. Jahrestagung für Akustik, Wien (Austria), 15 Aug 2021 - 18 Aug 2021},

url = {https://vr.rwth-aachen.de/publication/02206/}

}

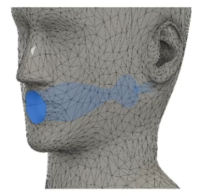

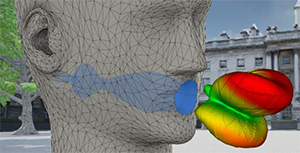

Talk: Speech Source Directivity for Embodied Conversational Agents

Embodied conversational agents (ECAs) are computer-controlled characters who communicate with a human using natural language. Being represented as virtual humans, ECAs are often utilized in domains such as training, therapy, or guided tours while being embedded in an immersive virtual environment. Having plausible speech sound is thereby desirable to improve the overall plausibility of these virtual-reality-based simulations. In an audiovisual VR experiment, we investigated the impact of directional radiation for the produced speech on the perceived naturalism. Furthermore, we examined how directivity filters influence the perceived social presence of participants in interactions with an ECA. Therefore, we varied the source directivity between 1) being omnidirectional, 2) featuring the average directionality of a human speaker, and 3) dynamically adapting to the currently produced phonemes. Our results indicate that directionality of speech is noticed and rated as more natural. However, no significant change of perceived naturalness could be found when adding dynamic, phoneme-dependent directivity. Furthermore, no significant differences on social presence were measurable between any of the three conditions.

@misc{Ehret2021b,

author = {Ehret, Jonathan and Aspöck, Lukas and B\"{o}nsch, Andrea and Fels, Janina and Kuhlen, Torsten W.},

title = {Speech Source Directivity for Embodied Conversational Agents},

publisher = {IHTA, Institute for Hearing Technology and Acoustics},

year = {2021},

note = {Hybride Konferenz},

month = {Aug},

date = {2021-08-15},

organization = {47. Jahrestagung für Akustik, Wien (Austria), 15 Aug 2021 - 18 Aug 2021},

subtyp = {Video},

url = {https://vr.rwth-aachen.de/publication/02205/}

}

Inferring a User’s Intent on Joining or Passing by Social Groups

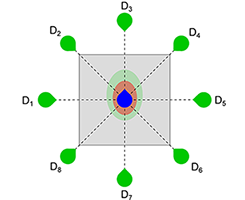

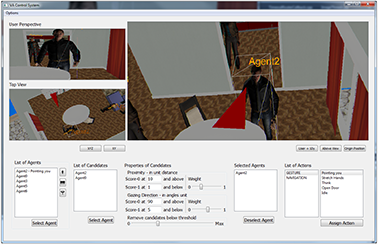

Modeling the interactions between users and social groups of virtual agents (VAs) is vital in many virtual-reality-based applications. However, only little research on group encounters has been conducted yet. We intend to close this gap by focusing on the distinction between joining and passing-by a group. To enhance the interactive capacity of VAs in these situations, knowing the user’s objective is required to show reasonable reactions. To this end, we propose a classification scheme which infers the user’s intent based on social cues such as proxemics, gazing and orientation, followed by triggering believable, non-verbal actions on the VAs. We tested our approach in a pilot study with overall promising results and discuss possible improvements for further studies.

@inproceedings{10.1145/3383652.3423862,

author = {B\"{o}nsch, Andrea and Bluhm, Alexander R. and Ehret, Jonathan and Kuhlen, Torsten W.},

title = {Inferring a User's Intent on Joining or Passing by Social Groups},

year = {2020},

isbn = {9781450375863},

publisher = {Association for Computing Machinery},

address = {New York, NY, USA},

url = {https://doi.org/10.1145/3383652.3423862},

doi = {10.1145/3383652.3423862},

abstract = {Modeling the interactions between users and social groups of virtual agents (VAs) is vital in many virtual-reality-based applications. However, only little research on group encounters has been conducted yet. We intend to close this gap by focusing on the distinction between joining and passing-by a group. To enhance the interactive capacity of VAs in these situations, knowing the user's objective is required to show reasonable reactions. To this end, we propose a classification scheme which infers the user's intent based on social cues such as proxemics, gazing and orientation, followed by triggering believable, non-verbal actions on the VAs. We tested our approach in a pilot study with overall promising results and discuss possible improvements for further studies.},

booktitle = {Proceedings of the 20th ACM International Conference on Intelligent Virtual Agents},

articleno = {10},

numpages = {8},

keywords = {virtual agents, joining a group, social groups, virtual reality},

location = {Virtual Event, Scotland, UK},

series = {IVA '20}

}

Evaluating the Influence of Phoneme-Dependent Dynamic Speaker Directivity of Embodied Conversational Agents’ Speech

Generating natural embodied conversational agents within virtual spaces crucially depends on speech sounds and their directionality. In this work, we simulated directional filters to not only add directionality, but also directionally adapt each phoneme. We therefore mimic reality where changing mouth shapes have an influence on the directional propagation of sound. We conducted a study (n = 32) evaluating naturalism ratings, preference and distinguishability of omnidirectional speech auralization compared to static and dynamic, phoneme-dependent directivities. The results indicated that participants cannot distinguish dynamic from static directivity. Furthermore, participants’ preference ratings aligned with their naturalism ratings. There was no unanimity, however, with regards to which auralization is the most natural.

@inproceedings{10.1145/3383652.3423863,

author = {Ehret, Jonathan and Stienen, Jonas and Brozdowski, Chris and B\"{o}nsch, Andrea and Mittelberg, Irene and Vorl\"{a}nder, Michael and Kuhlen, Torsten W.},

title = {Evaluating the Influence of Phoneme-Dependent Dynamic Speaker Directivity of Embodied Conversational Agents' Speech},

year = {2020},

isbn = {9781450375863},

publisher = {Association for Computing Machinery},

address = {New York, NY, USA},

url = {https://doi.org/10.1145/3383652.3423863},

doi = {10.1145/3383652.3423863},

abstract = {Generating natural embodied conversational agents within virtual spaces crucially depends on speech sounds and their directionality. In this work, we simulated directional filters to not only add directionality, but also directionally adapt each phoneme. We therefore mimic reality where changing mouth shapes have an influence on the directional propagation of sound. We conducted a study (n = 32) evaluating naturalism ratings, preference and distinguishability of omnidirectional speech auralization compared to static and dynamic, phoneme-dependent directivities. The results indicated that participants cannot distinguish dynamic from static directivity. Furthermore, participants' preference ratings aligned with their naturalism ratings. There was no unanimity, however, with regards to which auralization is the most natural.},

booktitle = {Proceedings of the 20th ACM International Conference on Intelligent Virtual Agents},

articleno = {17},

numpages = {8},

keywords = {phoneme-dependent directivity, directional 3D sound, speech, embodied conversational agents, virtual acoustics},

location = {Virtual Event, Scotland, UK},

series = {IVA '20}

}

The Impact of a Virtual Agent’s Non-Verbal Emotional Expression on a User’s Personal Space Preferences

Virtual-reality-based interactions with virtual agents (VAs) are likely subject to similar influences as human-human interactions. In either real or virtual social interactions, interactants try to maintain their personal space (PS), a ubiquitous, situative, flexible safety zone. Building upon larger PS preferences to humans and VAs with angry facial expressions, we extend the investigations to whole-body emotional expressions. In two immersive settings (HMD and CAVE), 66 males were approached by a happy, angry, or neutral male VA. Subjects preferred a larger PS to the angry VA when being able to stop him at their convenience (Sample task), replicating previous findings, and when being able to actively avoid him (PassBy task). In the latter task, we also observed larger distances in the CAVE than in the HMD.

@inproceedings{10.1145/3383652.3423888,

author = {B\"{o}nsch, Andrea and Radke, Sina and Ehret, Jonathan and Habel, Ute and Kuhlen, Torsten W.},

title = {The Impact of a Virtual Agent's Non-Verbal Emotional Expression on a User's Personal Space Preferences},

year = {2020},

isbn = {9781450375863},

publisher = {Association for Computing Machinery},

address = {New York, NY, USA},

url = {https://doi.org/10.1145/3383652.3423888},

doi = {10.1145/3383652.3423888},

abstract = {Virtual-reality-based interactions with virtual agents (VAs) are likely subject to similar influences as human-human interactions. In either real or virtual social interactions, interactants try to maintain their personal space (PS), a ubiquitous, situative, flexible safety zone. Building upon larger PS preferences to humans and VAs with angry facial expressions, we extend the investigations to whole-body emotional expressions. In two immersive settings (HMD and CAVE), 66 males were approached by a happy, angry, or neutral male VA. Subjects preferred a larger PS to the angry VA when being able to stop him at their convenience (Sample task), replicating previous findings, and when being able to actively avoid him (PassBy task). In the latter task, we also observed larger distances in the CAVE than in the HMD.},

booktitle = {Proceedings of the 20th ACM International Conference on Intelligent Virtual Agents},

articleno = {12},

numpages = {8},

keywords = {personal space, virtual reality, emotions, virtual agents},

location = {Virtual Event, Scotland, UK},

series = {IVA '20}

}

Immersive Sketching to Author Crowd Movements in Real-time

Sketch-based interfaces in 2D screen space allow to efficiently author the flow of virtual crowds in a direct and interactive manner. Here, options to redirect a flow by sketching barriers, or guiding entities based on a sketched network of connected sections are provided. As virtual crowds are increasingly often embedded into virtual reality (VR) applications, 3D authoring is of interest.

In this preliminary work, we thus present a sketch-based approach for VR. First promising results of a proof-of-concept are summarized and improvement suggestions, extensions, and future steps are discussed.

@inproceedings{10.1145/3383652.3423883,

author = {B\"{o}nsch, Andrea and Barton, Sebastian J. and Ehret, Jonathan and Kuhlen, Torsten W.},

title = {Immersive Sketching to Author Crowd Movements in Real-Time},

year = {2020},

isbn = {9781450375863},

publisher = {Association for Computing Machinery},

address = {New York, NY, USA},

url = {https://doi.org/10.1145/3383652.3423883},

doi = {10.1145/3383652.3423883},

abstract = {Sketch-based interfaces in 2D screen space allow to efficiently author the flow of virtual crowds in a direct and interactive manner. Here, options to redirect a flow by sketching barriers, or guiding entities based on a sketched network of connected sections are provided. As virtual crowds are increasingly often embedded into virtual reality (VR) applications, 3D authoring is of interest. In this preliminary work, we thus present a sketch-based approach for VR. First promising results of a proof-of-concept are summarized and improvement suggestions, extensions, and future steps are discussed.},

booktitle = {Proceedings of the 20th ACM International Conference on Intelligent Virtual Agents},

articleno = {11},

numpages = {3},

keywords = {virtual crowds, virtual reality, sketch-based interface, authoring},

location = {Virtual Event, Scotland, UK},

series = {IVA '20}

}

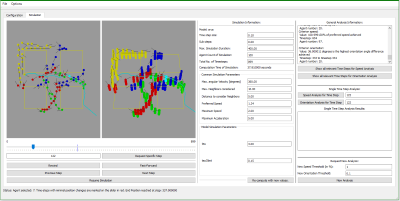

Towards a Graphical User Interface for Exploring and Fine-Tuning Crowd Simulations

Simulating a realistic navigation of virtual pedestrians through virtual environments is a recurring subject of investigations. The various mathematical approaches used to compute the pedestrians’ paths result, i.a., in different computation-times and varying path characteristics. Customizable parameters, e.g., maximal walking speed or minimal interpersonal distance, add another level of complexity. Thus, choosing the best-fitting approach for a given environment and use-case is non-trivial, especially for novice users.

To facilitate the informed choice of a specific algorithm with a certain parameter set, crowd simulation frameworks such as Menge provide an extendable collection of approaches with a unified interface for usage. However, they often miss an elaborated visualization with high informative value accompanied by visual analysis methods to explore the complete simulation data in more detail – which is yet required for an informed choice. Benchmarking suites such as SteerBench are a helpful approach as they objectively analyze crowd simulations, however they are too tailored to specific behavior details. To this end, we propose a preliminary design of an advanced graphical user interface providing a 2D and 3D visualization of the crowd simulation data as well as features for time navigation and an overall data exploration.

@InProceedings{Boensch2020b,

author = {Andrea B\"{o}nsch and Marcel Jonda and Jonathan Ehret and Torsten W. Kuhlen},

title = {{Towards a Graphical User Interface for Exploring and Fine-Tuning Crowd Simulations}},

booktitle = {IEEE Virtual Humans and Crowds for Immersive Environments (VHCIE)},

year = {2020},

month={March}

}

Influence of Directivity on the Perception of Embodied Conversational Agents' Speech

Embodied conversational agents are becoming more and more important in various virtual reality applications, e.g., as peers, trainers or therapists. Besides their appearance and behavior, appropriate speech is required for them to be perceived as human-like and realistic. In addition to the voice signal used, its auralization in the immersive virtual environment also has to be believable. Therefore, we investigated the effect of adding directivity to the speech sound source. Directivity simulates the orientation-dependent auralization with regard to the agent's head orientation. We performed a one-factorial user study with two levels (n=35) to investigate the effect directivity has on the perceived social presence and realism of the agent's voice. Our results do not indicate any significant effects of directivity on either variable. We attribute this partly to an overall too low realism of the virtual agent, a scenario that was not overly social, and generally high variance of the examined measures. These results are critically discussed and potential further research questions and study designs are identified.

@inproceedings{Wendt2019,

author = {Wendt, Jonathan and Weyers, Benjamin and Stienen, Jonas and B\"{o}nsch, Andrea and Vorl\"{a}nder, Michael and Kuhlen, Torsten W.},

title = {Influence of Directivity on the Perception of Embodied Conversational Agents' Speech},

booktitle = {Proceedings of the 19th ACM International Conference on Intelligent Virtual Agents},

series = {IVA '19},

year = {2019},

isbn = {978-1-4503-6672-4},

location = {Paris, France},

pages = {130--132},

numpages = {3},

url = {http://doi.acm.org/10.1145/3308532.3329434},

doi = {10.1145/3308532.3329434},

acmid = {3329434},

publisher = {ACM},

address = {New York, NY, USA},

keywords = {directional 3d sound, social presence, virtual acoustics, virtual agents},

}

Evaluation of Omnipresent Virtual Agents Embedded as Temporarily Required Assistants in Immersive Environments

When designing the behavior of embodied, computer-controlled, human-like virtual agents (VA) serving as temporarily required assistants in virtual reality applications, two linked factors have to be considered: the time the VA is visible in the scene, defined as presence time (PT), and the time till the VA is actually available for support on a user’s calling, defined as approaching time (AT).

Complementing previous research on behaviors with a low VA’s PT, we present the results of a controlled within-subjects study investigating behaviors by which the VA is always visible, i.e., behaviors with a high PT. The two behaviors affecting the AT tested are: following, a design in which the VA is omnipresent and constantly follows the users, and busy, a design in which the VA is self-reliantly spending time nearby the users and approaches them only if explicitly asked for. The results indicate that subjects prefer the following VA, a behavior which also leads to slightly lower execution times compared to busy.

@InProceedings{Boensch2019c,

author = {Andrea B\"{o}nsch and Jan Hoffmann and Jonathan Wendt and Torsten W. Kuhlen},

title = {{Evaluation of Omnipresent Virtual Agents Embedded as Temporarily Required Assistants in Immersive Environments}},

booktitle = {IEEE Virtual Humans and Crowds for Immersive Environments (VHCIE)},

year = {2019},

doi={10.1109/VHCIE.2019.8714726},

month={March}

}

Social VR: How Personal Space is Affected by Virtual Agents’ Emotions

Personal space (PS), the flexible protective zone maintained around oneself, is a key element of everyday social interactions. It, e.g., affects people's interpersonal distance and is thus largely involved when navigating through social environments. However, the PS is regulated dynamically: its size depends on numerous social and personal characteristics, and its violation evokes different levels of discomfort and physiological arousal. Thus, gaining more insight into this phenomenon is important.

We contribute to the PS investigations by presenting the results of a controlled experiment in a CAVE, focusing on German males in the age of 18 to 30 years. The PS preferences of 27 participants have been sampled while they were approached by either a single embodied, computer-controlled virtual agent (VA) or by a group of three VAs. In order to investigate the influence of a VA's emotions, we altered their facial expression between angry and happy. Our results indicate that the emotion as well as the number of VAs approaching influence the PS: larger distances are chosen to angry VAs compared to happy ones; single VAs are allowed closer compared to the group. Thus, our study is a foundation for social and behavioral studies investigating PS preferences.

@InProceedings{Boensch2018c,

author = {Andrea B\"{o}nsch and Sina Radke and Heiko Overath and Laura M. Asch\'{e} and Jonathan Wendt and Tom Vierjahn and Ute Habel and Torsten W. Kuhlen},

title = {{Social VR: How Personal Space is Affected by Virtual Agents’ Emotions}},

booktitle = {Proceedings of the IEEE Conference on Virtual Reality and 3D User Interfaces (VR) 2018},

year = {2018}

}