Profile

Jun.-Prof. Dr.-Ing. Benjamin Weyers

Dissertation: Reconfiguration of User Interface Models for Monitoring and Control of Human-Computer Systems, Universität Duisburg-Essen, 2012

Publications

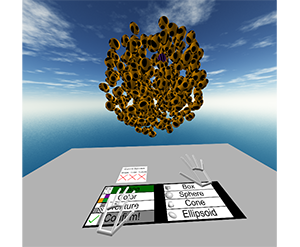

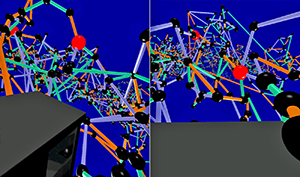

Choose Your Reference Frame Right: An Immersive Authoring Technique for Creating Reactive Behavior

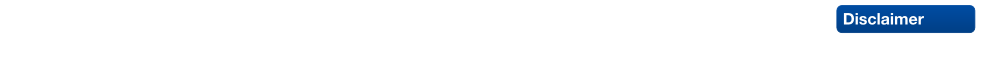

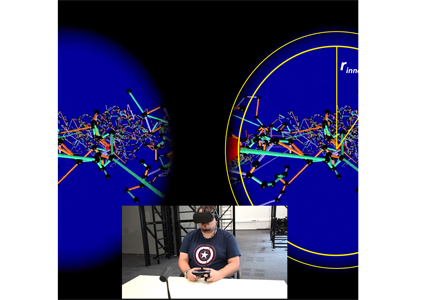

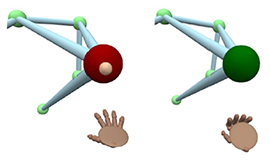

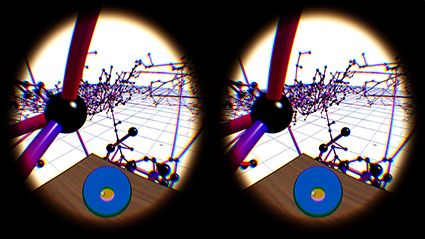

Immersive authoring enables content creation for virtual environments without a break of immersion. To enable immersive authoring of reactive behavior for a broad audience, we present modulation mapping, a simplified visual programming technique. To evaluate the applicability of our technique, we investigate the role of reference frames in which the programming elements are positioned, as this can affect the user experience. Thus, we developed two interface layouts: "surround-referenced" and "object-referenced". The former positions the programming elements relative to the physical tracking space, and the latter relative to the virtual scene objects. We compared the layouts in an empirical user study (n = 34) and found the surround-referenced layout faster, lower in task load, less cluttered, easier to learn and use, and preferred by users. Qualitative feedback, however, revealed the object-referenced layout as more intuitive, engaging, and valuable for visual debugging. Based on the results, we propose initial design implications for immersive authoring of reactive behavior by visual programming. Overall, modulation mapping was found to be an effective means for creating reactive behavior by the participants.

Honorable Mention for Best Paper!

@inproceedings{eroglu2024choose,

title={Choose Your Reference Frame Right: An Immersive Authoring Technique for Creating Reactive Behavior},

author={Eroglu, Sevinc and Schmitz, Patric and Sinke, Kilian and Anders, David and Kuhlen, Torsten Wolfgang and Weyers, Benjamin},

booktitle={30th ACM Symposium on Virtual Reality Software and Technology},

pages={1--11},

year={2024}

}

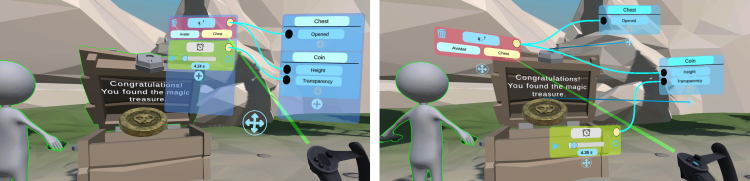

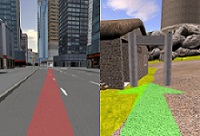

VRScenarioBuilder: Free-Hand Immersive Authoring Tool for Scenario-based Testing of Automated Vehicles

Virtual Reality has become an important medium in the automotive industry, providing engineers with a simulated platform to actively engage with and evaluate realistic driving scenarios for testing and validating automated vehicles. However, engineers are often restricted to using 2D desktop-based tools for designing driving scenarios, which can result in inefficiencies in the development and testing cycles. To this end, we present VRScenarioBuilder, an immersive authoring tool that enables engineers to create and modify dynamic driving scenarios directly in VR using free-hand interactions. Our tool features a natural user interface that enables users to create scenarios by using drag-and-drop building blocks. To evaluate the interface components and interactions, we conducted a user study with VR experts. Our findings highlight the effectiveness and potential improvements of our tool. We have further identified future research directions, such as exploring the spatial arrangement of the interface components and managing lengthy blocks.

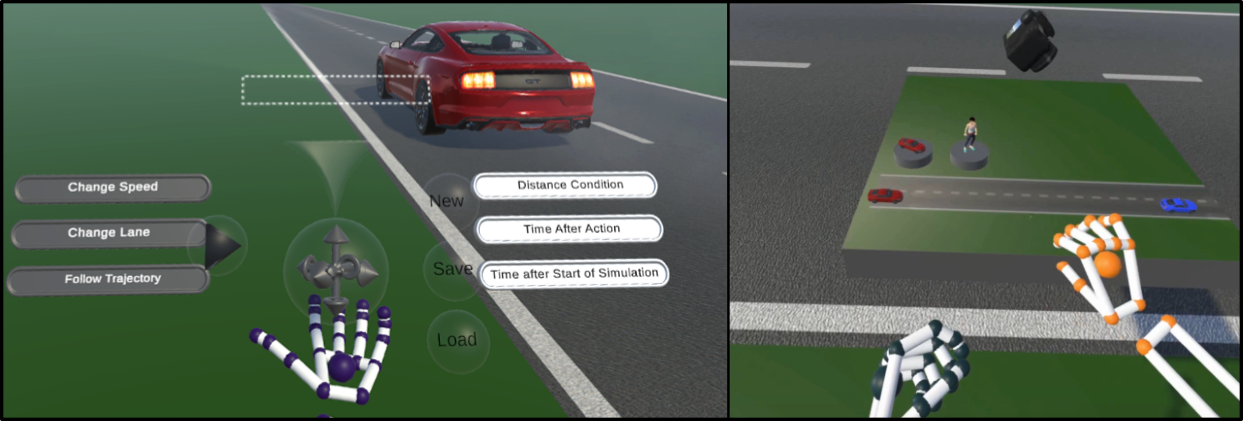

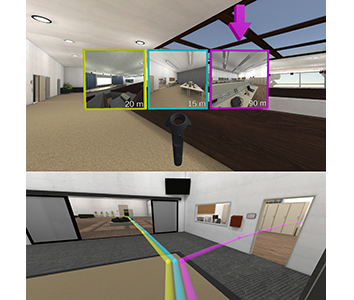

Come Look at This: Supporting Fluent Transitions between Tightly and Loosely Coupled Collaboration in Social Virtual Reality

Collaborative work in social virtual reality often requires an interplay of loosely coupled collaboration from different virtual locations and tightly coupled face-to-face collaboration. Without appropriate system mediation, however, transitioning between these phases requires high navigation and coordination efforts. In this paper, we present an interaction system that allows collaborators in virtual reality to seamlessly switch between different collaboration models known from related work. To this end, we present collaborators with functionalities that let them work on individual sub-tasks in different virtual locations, consult each other using asymmetric interaction patterns while keeping their current location, and temporarily or permanently join each other for face-to-face interaction. We evaluated our methods in a user study with 32 participants working in teams of two. Our quantitative results indicate that delegating the target selection process for a long-distance teleport significantly improves placement accuracy and decreases task load within the team. Our qualitative user feedback shows that our system can be applied to support flexible collaboration. In addition, the proposed interaction sequence received positive evaluations from teams with varying VR experiences.

@ARTICLE{10568966,

author={Bimberg, Pauline and Zielasko, Daniel and Weyers, Benjamin and Froehlich, Bernd and Weissker, Tim},

journal={IEEE Transactions on Visualization and Computer Graphics},

title={Come Look at This: Supporting Fluent Transitions between Tightly and Loosely Coupled Collaboration in Social Virtual Reality},

year={2024},

volume={},

number={},

pages={1-17},

keywords={Collaboration;Virtual environments;Navigation;Task analysis;Virtual reality;Three-dimensional displays;Teleportation;Virtual Reality;3D User Interfaces;Multi-User Environments;Social VR;Groupwork;Collaborative Interfaces},

doi={10.1109/TVCG.2024.3418009}}

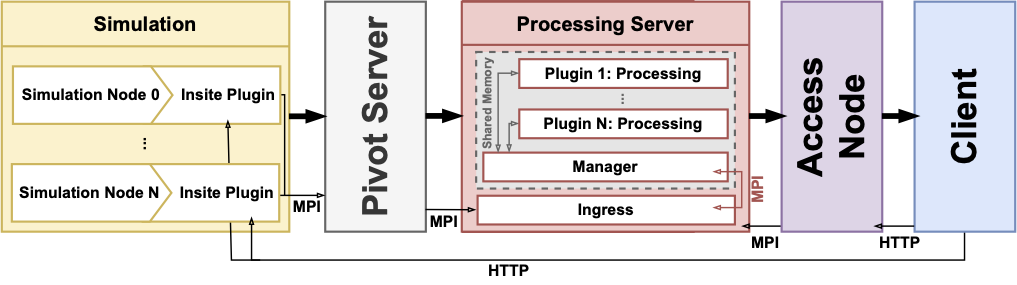

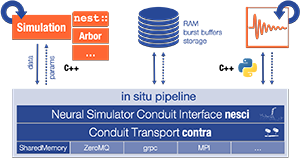

Poster: Insite Pipeline - A Pipeline Enabling In-Transit Processing for Arbor, NEST and TVB

Simulation of neuronal networks has steadily advanced and now allows for larger and more complex models. However, scaling simulations to such sizes comes with issues and challenges. Especially the amount of data produced, as well as the runtime of the simulation, can be limiting. Often, storing all data on disk is impossible, and users might have to wait for a long time until they can process the data. A standard solution in simulation science is to use in-transit approaches. In-transit implementations allow users to access data while the simulation is still running and do parallel processing outside the simulation. This allows for early insights into the results, early stopping of simulations that are not promising, or even steering of the simulations. Existing in-transit solutions, however, are often complex to integrate into the workflow as they rely on integration into simulators and often use data formats that are complex to handle. This is especially constraining in the context of multi-disciplinary research conducted in the HBP, as such an important feature should be accessible to all users.

To remedy this, we developed Insite, a pipeline that allows easy in-transit access to simulation data of multiscale simulations conducted with TVB, NEST, and Arbor.

@misc{kruger_marcel_2023_7849225,

author = {Krüger, Marcel and

Gerrits, Tim and

Kuhlen, Torsten and

Weyers, Benjamin},

title = {{Insite Pipeline - A Pipeline Enabling In-Transit

Processing for Arbor, NEST and TVB}},

month = mar,

year = 2023,

publisher = {Zenodo},

doi = {10.5281/zenodo.7849225},

url = {https://doi.org/10.5281/zenodo.7849225}

}

Insite: A Pipeline Enabling In-Transit Visualization and Analysis for Neuronal Network Simulations

Neuronal network simulators are central to computational neuroscience, enabling the study of the nervous system through in-silico experiments. Through the utilization of high-performance computing resources, these simulators are capable of simulating increasingly complex and large networks of neurons today. Yet, the increased capabilities introduce a challenge to the analysis and visualization of the simulation results. In this work, we propose a pipeline for in-transit analysis and visualization of data produced by neuronal network simulators. The pipeline is able to couple with simulators, enabling querying, filtering, and merging data from multiple simulation instances. Additionally, the architecture allows user-defined plugins that perform analysis tasks in the pipeline. The pipeline applies traditional REST API paradigms and utilizes data formats such as JSON to provide easy access to the generated data for visualization and further processing. We present and assess the proposed architecture in the context of neuronal network simulations generated by the NEST simulator.

@InProceedings{10.1007/978-3-031-23220-6_20,

author="Kr{\"u}ger, Marcel and Oehrl, Simon and Demiralp, Ali C. and Spreizer, Sebastian and Bruchertseifer, Jens and Kuhlen, Torsten W. and Gerrits, Tim and Weyers, Benjamin",

editor="Anzt, Hartwig and Bienz, Amanda and Luszczek, Piotr and Baboulin, Marc",

title="Insite: A Pipeline Enabling In-Transit Visualization and Analysis for Neuronal Network Simulations",

booktitle="High Performance Computing. ISC High Performance 2022 International Workshops",

year="2022",

publisher="Springer International Publishing",

address="Cham",

pages="295--305",

isbn="978-3-031-23220-6"

}

Design and Evaluation of a Free-Hand VR-based Authoring Environment for Automated Vehicle Testing

Virtual Reality is increasingly used for safe evaluation and validation of autonomous vehicles by automotive engineers. However, the design and creation of virtual testing environments is a cumbersome process. Engineers are bound to utilize desktop-based authoring tools, and a high level of expertise is necessary. By performing scene authoring entirely inside VR, faster design iterations become possible. To this end, we propose a VR authoring environment that enables engineers to design road networks and traffic scenarios for automated vehicle testing based on free-hand interaction. We present a 3D interaction technique for the efficient placement and selection of virtual objects that is employed on a 2D panel. We conducted a comparative user study in which our interaction technique outperformed existing approaches regarding precision and task completion time. Furthermore, we demonstrate the effectiveness of the system by a qualitative user study with domain experts.

Nominated for the Best Paper Award.

Talk: Insite: A Generalized Pipeline for In-transit Visualization and Analysis

Neuronal network simulators are essential to computational neuroscience, enabling the study of the nervous system through in-silico experiments. Through utilization of high-performance computing resources, these simulators are able to simulate increasingly complex and large networks of neurons today. It also creates new challenges for the analysis and visualization of such simulations. In-situ and in-transport strategies are popular approaches in these scenarios. They enable live monitoring of running simulations and parameter adjustment in the case of erroneous configurations which can save valuable compute resources.

This talk will present the current status of our pipeline for in-transport analysis and visualization of neuronal network simulator data. The pipeline is able to couple with NEST, along with other simulators, and provides data management (querying, filtering, and merging) across multiple simulator instances. Finally, the data is passed to end-user applications for visualization and analysis. The goal is for the pipeline to be integrated into third-party tools such as the multi-view visual analysis toolkit ViSimpl.

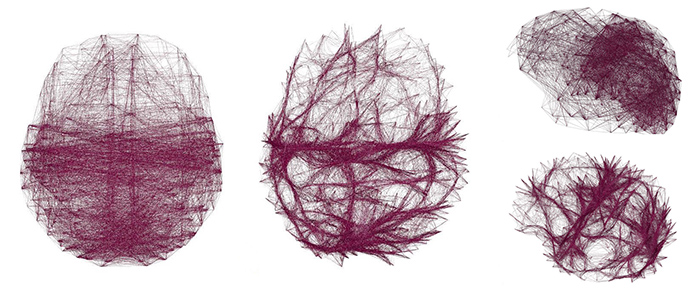

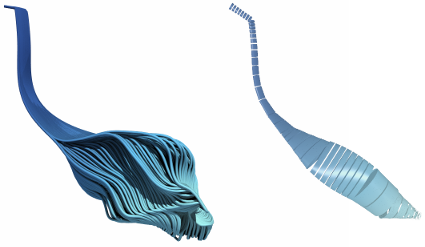

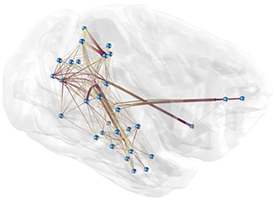

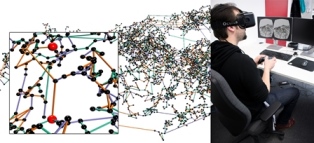

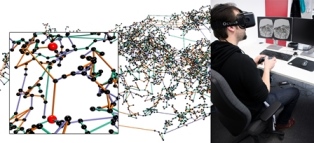

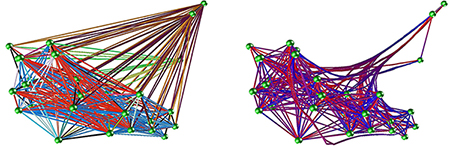

Voxel-Based Edge Bundling Through Direction-Aware Kernel Smoothing

Relational data with a spatial embedding, depicted as a node-link diagram, is very common, e.g., in neuroscience, and edge bundling is one way to increase its readability or reveal hidden structures. This article presents a 3D extension to kernel density estimation-based edge bundling that is meant to be used in an interactive immersive analysis setting. This extension adds awareness of the edges’ direction when using kernel smoothing and thus implicitly supports both directed and undirected graphs. The method generates explicit bundles of edges, which can be analyzed and visualized individually and as sufficient as possible for a given application context, while it scales linearly with the input size.

@article{ZIELASKO2019,

title = "Voxel-based edge bundling through direction-aware kernel smoothing",

journal = "Computers & Graphics",

volume = "83",

pages = "87 - 96",

year = "2019",

issn = "0097-8493",

doi = "https://doi.org/10.1016/j.cag.2019.06.008",

url = "http://www.sciencedirect.com/science/article/pii/S0097849319301025",

author = "Daniel Zielasko and Xiaoqing Zhao and Ali Can Demiralp and Torsten W. Kuhlen and Benjamin Weyers"}

Influence of Directivity on the Perception of Embodied Conversational Agents' Speech

Embodied conversational agents are becoming increasingly important in various virtual reality applications, e.g., as peers, trainers, or therapists. Besides their appearance and behavior, appropriate speech is required for them to be perceived as human-like and realistic. In addition to the voice signal used, its auralization in the immersive virtual environment has to be believable. Therefore, we investigated the effect of adding directivity to the speech sound source. Directivity simulates the orientation-dependent auralization with regard to the agent's head orientation. We performed a one-factorial user study with two levels (n=35) to investigate the effect directivity has on the perceived social presence and realism of the agent's voice. Our results do not indicate any significant effects of directivity on either variable. We attribute this partly to an overall too low realism of the virtual agent, a scenario that was not overly social, and a generally high variance of the examined measures. These results are critically discussed, and potential further research questions and study designs are identified.

@inproceedings{Wendt2019,

author = {Wendt, Jonathan and Weyers, Benjamin and Stienen, Jonas and B\"{o}nsch, Andrea and Vorl\"{a}nder, Michael and Kuhlen, Torsten W.},

title = {Influence of Directivity on the Perception of Embodied Conversational Agents' Speech},

booktitle = {Proceedings of the 19th ACM International Conference on Intelligent Virtual Agents},

series = {IVA '19},

year = {2019},

isbn = {978-1-4503-6672-4},

location = {Paris, France},

pages = {130--132},

numpages = {3},

url = {http://doi.acm.org/10.1145/3308532.3329434},

doi = {10.1145/3308532.3329434},

acmid = {3329434},

publisher = {ACM},

address = {New York, NY, USA},

keywords = {directional 3d sound, social presence, virtual acoustics, virtual agents},

}

Passive Haptic Menus for Desk-Based and HMD-Projected Virtual Reality

In this work, we evaluate the impact of passive haptic feedback on touch-based menus, given the constraints and possibilities of a seated, desk-based scenario in VR. To this end, we compare a menu placed on the surface of a desk with one placed mid-air on a surface in front of the user. The study design is completed by two conditions without passive haptic feedback. In the conducted user study (n = 33), we found effects of passive haptics (present vs. non-present) and menu alignment (desk vs. mid-air) on task performance and subjective look & feel; however, the race between the conditions was close. The overall winner was the mid-air menu with passive haptic feedback, which, however, raises hardware requirements.

@inproceedings{zielasko2019menu,

title={{Passive Haptic Menus for Desk-Based and HMD-Projected Virtual Reality}},

author={Zielasko, Daniel and Kr{\"u}ger, Marcel and Weyers, Benjamin and Kuhlen, Torsten W},

booktitle={Proc. of IEEE VR Workshop on Everyday Virtual Reality},

year={2019}

}

A Non-Stationary Office Desk Substitution for Desk-Based and HMD-Projected Virtual Reality

The ongoing migration of HMDs to the consumer market also allows the integration of immersive environments into analysis workflows that are often bound to an (office) desk. However, a critical factor when considering VR solutions for professional applications is the prevention of cybersickness. In the given scenario, the user is usually seated and the surrounding real-world environment is very dominant, with the desk itself arguably being its most dominant part. Including this desk in the virtual environment could serve as a resting frame and thus reduce cybersickness, in addition to offering many further possibilities. In this work, we evaluate the feasibility of a substitution like this in the context of a visual data analysis task involving travel, and measure the impact on cybersickness as well as the general task performance and presence. In the conducted user study (n=52), surprisingly, and partially in contradiction to existing work, we found no significant differences for those core measures between the control condition without a virtual table and the condition containing a virtual table. However, the results also support the inclusion of a virtual table in desk-based use cases.

@inproceedings{zielasko2019travel,

title={{A Non-Stationary Office Desk Substitution for Desk-Based and HMD-Projected Virtual Reality}},

author={Zielasko, Daniel and Weyers, Benjamin and Kuhlen, Torsten W},

booktitle ={Proc. of IEEE VR Workshop on Immersive Sickness Prevention},

year={2019}

}

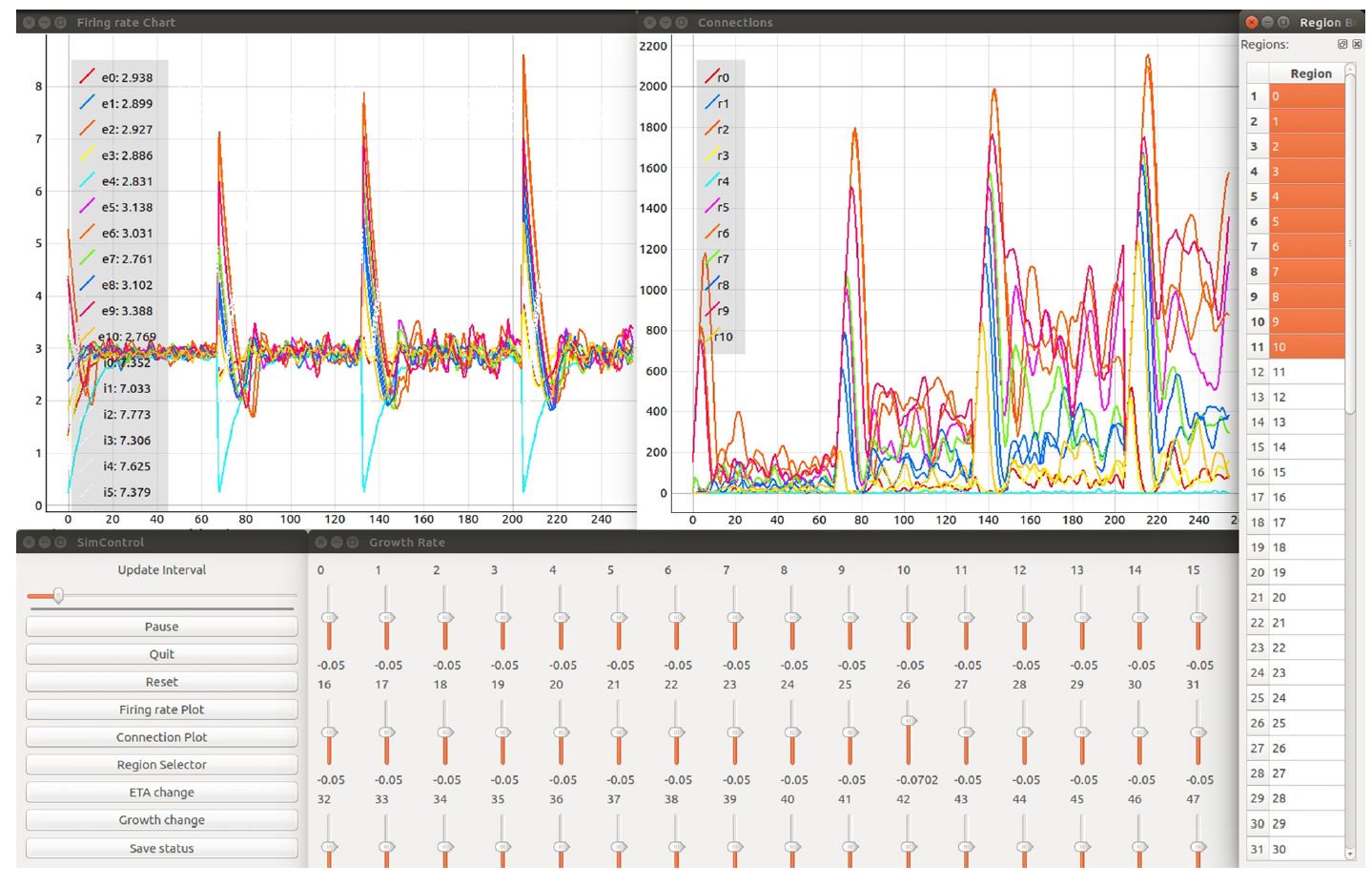

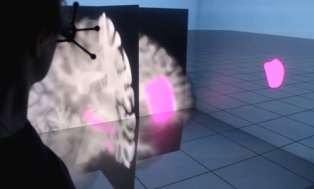

Toward Rigorous Parameterization of Underconstrained Neural Network Models Through Interactive Visualization and Steering of Connectivity Generation

Simulation models in many scientific fields can have non-unique solutions or unique solutions which can be difficult to find. Moreover, in evolving systems, unique final state solutions can be reached by multiple different trajectories. Neuroscience is no exception. Often, neural network models are subject to parameter fitting to obtain desirable output comparable to experimental data. Parameter fitting without sufficient constraints and a systematic exploration of the possible solution space can lead to conclusions valid only around local minima or around non-minima. To address this issue, we have developed an interactive tool for visualizing and steering parameters in neural network simulation models. In this work, we focus particularly on connectivity generation, since finding suitable connectivity configurations for neural network models constitutes a complex parameter search scenario. The development of the tool has been guided by several use cases: the tool allows researchers to steer the parameters of the connectivity generation during the simulation, thus quickly growing networks composed of multiple populations with a targeted mean activity. The flexibility of the software allows scientists to explore other connectivity and neuron variables apart from the ones presented as use cases. With this tool, we enable an interactive exploration of parameter spaces and a better understanding of neural network models and grapple with the crucial problem of non-unique network solutions and trajectories. In addition, we observe a reduction in turn around times for the assessment of these models, due to interactive visualization while the simulation is computed.

@ARTICLE{10.3389/fninf.2018.00032,

AUTHOR={Nowke, Christian and Diaz-Pier, Sandra and Weyers, Benjamin and Hentschel, Bernd and Morrison, Abigail and Kuhlen, Torsten W. and Peyser, Alexander},

TITLE={Toward Rigorous Parameterization of Underconstrained Neural Network Models Through Interactive Visualization and Steering of Connectivity Generation},

JOURNAL={Frontiers in Neuroinformatics},

VOLUME={12},

PAGES={32},

YEAR={2018},

URL={https://www.frontiersin.org/article/10.3389/fninf.2018.00032},

DOI={10.3389/fninf.2018.00032},

ISSN={1662-5196},

ABSTRACT={Simulation models in many scientific fields can have non-unique solutions or unique solutions which can be difficult to find.

Moreover, in evolving systems, unique final state solutions can be reached by multiple different trajectories.

Neuroscience is no exception. Often, neural network models are subject to parameter fitting to obtain desirable output comparable to experimental data. Parameter fitting without sufficient constraints and a systematic exploration of the possible solution space can lead to conclusions valid only around local minima or around non-minima. To address this issue, we have developed an interactive tool for visualizing and steering parameters in neural network simulation models.

In this work, we focus particularly on connectivity generation, since finding suitable connectivity configurations for neural network models constitutes a complex parameter search scenario. The development of the tool has been guided by several use cases -- the tool allows researchers to steer the parameters of the connectivity generation during the simulation, thus quickly growing networks composed of multiple populations with a targeted mean activity. The flexibility of the software allows scientists to explore other connectivity and neuron variables apart from the ones presented as use cases. With this tool, we enable an interactive exploration of parameter spaces and a better understanding of neural network models and grapple with the crucial problem of non-unique network solutions and trajectories. In addition, we observe a reduction in turn around times for the assessment of these models, due to interactive visualization while the simulation is computed.}

}

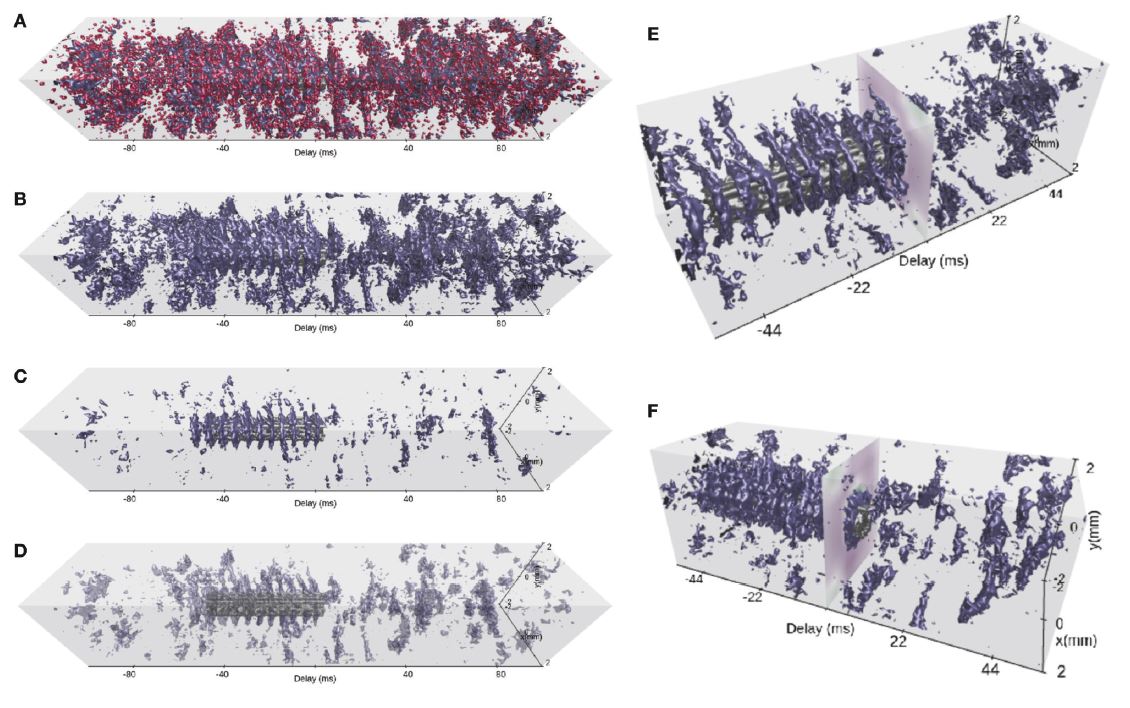

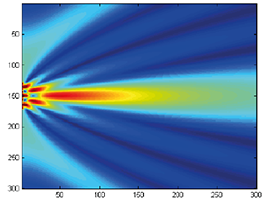

VIOLA: A Multi-Purpose and Web-Based Visualization Tool for Neuronal-Network Simulation Output

Neuronal network models and corresponding computer simulations are invaluable tools to aid the interpretation of the relationship between neuron properties, connectivity, and measured activity in cortical tissue. Spatiotemporal patterns of activity propagating across the cortical surface as observed experimentally can for example be described by neuronal network models with layered geometry and distance-dependent connectivity. In order to cover the surface area captured by today’s experimental techniques and to achieve sufficient self-consistency, such models contain millions of nerve cells. The interpretation of the resulting stream of multi-modal and multi-dimensional simulation data calls for integrating interactive visualization steps into existing simulation-analysis workflows. Here, we present a set of interactive visualization concepts called views for the visual analysis of activity data in topological network models, and a corresponding reference implementation VIOLA (VIsualization Of Layer Activity). The software is a lightweight, open-source, web-based, and platform-independent application combining and adapting modern interactive visualization paradigms, such as coordinated multiple views, for massively parallel neurophysiological data. For a use-case demonstration we consider spiking activity data of a two-population, layered point-neuron network model incorporating distance-dependent connectivity subject to a spatially confined excitation originating from an external population. With the multiple coordinated views, an explorative and qualitative assessment of the spatiotemporal features of neuronal activity can be performed upfront of a detailed quantitative data analysis of specific aspects of the data. Interactive multi-view analysis therefore assists existing data analysis workflows.
Furthermore, ongoing efforts including the European Human Brain Project aim at providing online user portals for integrated model development, simulation, analysis, and provenance tracking, wherein interactive visual analysis tools are one component. Browser-compatible, web-technology based solutions are therefore required. Within this scope, with VIOLA we provide a first prototype.

@ARTICLE{10.3389/fninf.2018.00075,

AUTHOR={Senk, Johanna and Carde, Corto and Hagen, Espen and Kuhlen, Torsten W. and Diesmann, Markus and Weyers, Benjamin},

TITLE={VIOLA—A Multi-Purpose and Web-Based Visualization Tool for Neuronal-Network Simulation Output},

JOURNAL={Frontiers in Neuroinformatics},

VOLUME={12},

PAGES={75},

YEAR={2018},

URL={https://www.frontiersin.org/article/10.3389/fninf.2018.00075},

DOI={10.3389/fninf.2018.00075},

ISSN={1662-5196},

ABSTRACT={Neuronal network models and corresponding computer simulations are invaluable tools to aid the interpretation of the relationship between neuron properties, connectivity and measured activity in cortical tissue. Spatiotemporal patterns of activity propagating across the cortical surface as observed experimentally can for example be described by neuronal network models with layered geometry and distance-dependent connectivity. In order to cover the surface area captured by today's experimental techniques and to achieve sufficient self-consistency, such models contain millions of nerve cells. The interpretation of the resulting stream of multi-modal and multi-dimensional simulation data calls for integrating interactive visualization steps into existing simulation-analysis workflows. Here, we present a set of interactive visualization concepts called views for the visual analysis of activity data in topological network models, and a corresponding reference implementation VIOLA (VIsualization Of Layer Activity). The software is a lightweight, open-source, web-based and platform-independent application combining and adapting modern interactive visualization paradigms, such as coordinated multiple views, for massively parallel neurophysiological data. For a use-case demonstration we consider spiking activity data of a two-population, layered point-neuron network model incorporating distance-dependent connectivity subject to a spatially confined excitation originating from an external population. With the multiple coordinated views, an explorative and qualitative assessment of the spatiotemporal features of neuronal activity can be performed upfront of a detailed quantitative data analysis of specific aspects of the data. Interactive multi-view analysis therefore assists existing data analysis workflows. 
Furthermore, ongoing efforts including the European Human Brain Project aim at providing online user portals for integrated model development, simulation, analysis and provenance tracking, wherein interactive visual analysis tools are one component. Browser-compatible, web-technology based solutions are therefore required. Within this scope, with VIOLA we provide a first prototype.}

}

Streaming Live Neuronal Simulation Data into Visualization and Analysis

Neuroscientists want to inspect the data their simulations are producing while these are still running. This will on the one hand save them time waiting for results and therefore insight. On the other, it will allow for more efficient use of CPU time if the simulations are being run on supercomputers. If they had access to the data being generated, neuroscientists could monitor it and take counter-actions, e.g., parameter adjustments, should the simulation deviate too much from in-vivo observations or get stuck.

As a first step toward this goal, we devise an in situ pipeline tailored to the neuroscientific use case. It is capable of recording and transferring simulation data to an analysis/visualization process, while the simulation is still running. The developed libraries are made publicly available as open source projects. We provide a proof-of-concept integration, coupling the neuronal simulator NEST to basic 2D and 3D visualization.

@InProceedings{10.1007/978-3-030-02465-9_18,

author="Oehrl, Simon

and M{\"u}ller, Jan

and Schnathmeier, Jan

and Eppler, Jochen Martin

and Peyser, Alexander

and Plesser, Hans Ekkehard

and Weyers, Benjamin

and Hentschel, Bernd

and Kuhlen, Torsten W.

and Vierjahn, Tom",

editor="Yokota, Rio

and Weiland, Mich{\`e}le

and Shalf, John

and Alam, Sadaf",

title="Streaming Live Neuronal Simulation Data into Visualization and Analysis",

booktitle="High Performance Computing",

year="2018",

publisher="Springer International Publishing",

address="Cham",

pages="258--272",

abstract="Neuroscientists want to inspect the data their simulations are producing while these are still running. This will on the one hand save them time waiting for results and therefore insight. On the other, it will allow for more efficient use of CPU time if the simulations are being run on supercomputers. If they had access to the data being generated, neuroscientists could monitor it and take counter-actions, e.g., parameter adjustments, should the simulation deviate too much from in-vivo observations or get stuck.",

isbn="978-3-030-02465-9"

}

Interactive Exploration Assistance for Immersive Virtual Environments Based on Object Visibility and Viewpoint Quality

During free exploration of an unknown virtual scene, users often miss important parts, leading to incorrect or incomplete environment knowledge and a potential negative impact on performance in later tasks. This is addressed by wayfinding aids such as compasses, maps, or trails, and automated exploration schemes such as guided tours. However, these approaches either do not actually ensure exploration success or take away control from the user.

Therefore, we present an interactive assistance interface to support exploration that guides users to interesting and unvisited parts of the scene upon request, supplementing their own, free exploration. It is based on an automated analysis of object visibility and viewpoint quality and is therefore applicable to a wide range of scenes without human supervision or manual input. In a user study, we found that the approach improves users' knowledge of the environment, leads to a more complete exploration of the scene, and is also subjectively helpful and easy to use.

Does the Directivity of a Virtual Agent’s Speech Influence the Perceived Social Presence?

When interacting and communicating with virtual agents in immersive environments, the agents’ behavior should be believable and authentic. Thereby, one important aspect is a convincing auralization of their speech. In this work-in-progress paper, a study design to evaluate the effect of adding directivity to the speech sound source on the perceived social presence of a virtual agent is presented. Therefore, we describe the study design and discuss first results of a prestudy as well as consequential improvements of the design.

@InProceedings{Boensch2018b,

author = {Jonathan Wendt and Benjamin Weyers and Andrea B\"{o}nsch and Jonas Stienen and Tom Vierjahn and Michael Vorländer and Torsten W. Kuhlen },

title = {{Does the Directivity of a Virtual Agent’s Speech Influence the Perceived Social Presence?}},

booktitle = {IEEE Virtual Humans and Crowds for Immersive Environments (VHCIE)},

year = {2018}

}

Dynamic Field of View Reduction Related to Subjective Sickness Measures in an HMD-based Data Analysis Task

Various factors influence the degree of cybersickness a user can suffer in an immersive virtual environment, some of which can be controlled without adapting the virtual environment itself. When using HMDs, one example is the size of the field of view. However, the degree to which factors like this can be manipulated without affecting the user negatively in other ways is limited. Another prominent characteristic of cybersickness is that it affects individuals very differently. Therefore, to account for both the possible disruptive nature of alleviating factors and the high interpersonal variance, a promising approach may be to intervene only in cases where users experience discomfort symptoms, and only as much as necessary. Thus, we conducted a first experiment, where the field of view was decreased when people feel uncomfortable, to evaluate the possible positive impact on sickness and negative influence on presence. While we found no significant evidence for any of these possible effects, interesting further results and observations were made.

@InProceedings{zielasko2018,

title={{Dynamic Field of View Reduction Related to Subjective Sickness Measures in an HMD-based Data Analysis Task}},

author={Zielasko, Daniel and Mei{\ss}ner, Alexander and Freitag, Sebastian and Weyers, Benjamin and Kuhlen, Torsten W},

booktitle ={Proc. of IEEE Virtual Reality Workshop on Everyday Virtual Reality},

year={2018}

}

Seamless Hand-Based Remote and Close Range Interaction in IVEs

In this work, we describe a hybrid, hand-based interaction metaphor that makes remote and close objects in an HMD-based immersive virtual environment (IVE) seamlessly accessible. To accomplish this, different existing techniques, such as go-go and HOMER, were combined in a way that aims for generality, intuitiveness, uniformity, and speed. A technique like this is one prerequisite for a successful integration of IVEs to professional everyday applications, such as data analysis workflows.
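For readers unfamiliar with go-go, one of the building blocks named above, the following sketch shows its commonly cited distance mapping: the virtual hand follows the real hand one-to-one within a threshold distance and is extended quadratically beyond it. The threshold and coefficient values here are illustrative assumptions, and the code shows only this one ingredient, not the hybrid metaphor described in this work.

```python
def gogo_virtual_distance(real_dist: float, threshold: float = 0.4,
                          k: float = 6.0) -> float:
    """Map the real hand distance from the body (metres) to a virtual distance.

    threshold and k are hypothetical tuning values.
    """
    if real_dist < 0:
        raise ValueError("distance must be non-negative")
    if real_dist < threshold:
        # Close range: one-to-one mapping for precise manipulation.
        return real_dist
    # Remote range: quadratic extension of the virtual arm.
    return real_dist + k * (real_dist - threshold) ** 2
```

Because both branches agree at the threshold, the transition between close-range and remote interaction is continuous.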

Talk: Streaming Live Neuronal Simulation Data into Visualization and Analysis

Being able to inspect neuronal network simulations while they are running provides new research strategies to neuroscientists as it enables them to perform actions like parameter adjustments in case the simulation performs unexpectedly. This can also save compute resources when such simulations are run on large supercomputers as errors can be detected and corrected earlier saving valuable compute time. This talk presents a prototypical pipeline that enables in-situ analysis and visualization of running simulations.

Fluid Sketching: Bringing Ebru Art into VR

In this interactive demo, we present our Fluid Sketching application as an innovative virtual reality-based interpretation of traditional marbling art. By using a particle-based simulation combined with natural, spatial, and multi-modal interaction techniques, we create and extend the original artistic work to build a comprehensive interactive experience. With the interactive demo of Fluid Sketching during Mensch und Computer 2018, we aim at increasing the awareness of paper marbling as a traditional type of art and demonstrating the potential of virtual reality as a new and innovative digital and artistic medium.

@article{eroglu2018fluid,

title={Fluid Sketching: Bringing Ebru Art into VR},

author={Eroglu, Sevinc and Weyers, Benjamin and Kuhlen, Torsten},

journal={Mensch und Computer 2018-Workshopband},

year={2018},

publisher={Gesellschaft f{\"u}r Informatik eV}

}

Interactive Exploration of Dissipation Element Geometry

Dissipation elements (DE) define a geometrical structure for the analysis of small-scale turbulence. Existing analyses based on DEs focus on a statistical treatment of large populations of DEs. In this paper, we propose a method for the interactive visualization of the geometrical shape of DE populations. We follow a two-step approach: in a pre-processing step, we approximate individual DEs by tube-like, implicit shapes with elliptical cross sections of varying radii; we then render these approximations by direct ray-casting thereby avoiding the need for costly generation of detailed, explicit geometry for rasterization. Our results demonstrate that the approximation gives a reasonable representation of DE geometries and the rendering performance is suitable for interactive use.

@InProceedings{Vierjahn2017,

booktitle = {Eurographics Symposium on Parallel Graphics and Visualization},

author = {Tom Vierjahn and Andrea Schnorr and Benjamin Weyers and Dominik Denker and Ingo Wald and Christoph Garth and Torsten W. Kuhlen and Bernd Hentschel},

title = {Interactive Exploration of Dissipation Element Geometry},

year = {2017},

pages = {53--62},

ISSN = {1727-348X},

ISBN = {978-3-03868-034-5},

doi = {10.2312/pgv.20171093},

}

buenoSDIAs: Supporting Desktop Immersive Analytics While Actively Preventing Cybersickness

Immersive data analytics as an emerging research topic in scientific and information visualization has recently been brought back into focus due to the emergence of low-cost consumer virtual reality hardware. Previous research has shown the positive impact of immersive visualization on data analytics workflows, but in most cases, insights were based on large-screen setups. In contrast, less research focuses on a close integration of immersive technology into existing, i.e., desktop-based data analytics workflows. This implies specific requirements regarding the usability of such systems, which include, e.g., the prevention of cybersickness. In this work, we present a prototypical application, which offers a first set of tools and addresses major challenges for a fully immersive data analytics setting in which the user is sitting at a desktop. In particular, we address the problem of cybersickness by integrating prevention strategies combined with individualized user profiles to maximize time of use.

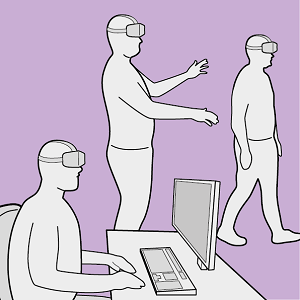

Utilizing Immersive Virtual Reality in Everyday Work

Applications of Virtual Reality (VR) have been repeatedly explored with the goal to improve the data analysis process of users from different application domains, such as architecture and simulation sciences. Unfortunately, making VR available in professional application scenarios or even using it on a regular basis has proven to be challenging. We argue that everyday usage environments, such as office spaces, have introduced constraints that critically affect the design of interaction concepts since well-established techniques might be difficult to use. In our opinion, it is crucial to understand the impact of usage scenarios on interaction design, to successfully develop VR applications for everyday use. To substantiate our claim, we define three distinct usage scenarios in this work that primarily differ in the amount of mobility they allow for. We outline each scenario's inherent constraints but also point out opportunities that may be used to design novel, well-suited interaction techniques for different everyday usage environments. In addition, we link each scenario to a concrete application example to clarify its relevance and show how it affects interaction design.

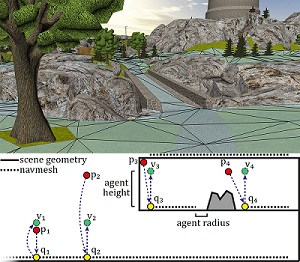

Efficient Approximate Computation of Scene Visibility Based on Navigation Meshes and Applications for Navigation and Scene Analysis

Scene visibility, the information of which parts of the scene are visible from a certain location, can be used to derive various properties of a virtual environment. For example, it enables the computation of viewpoint quality to determine the informativeness of a viewpoint, helps in constructing virtual tours, and allows keeping track of the objects a user may already have seen. However, computing visibility at runtime may be too computationally expensive for many applications, while sampling the entire scene beforehand introduces a costly precomputation step and may include many samples not needed later on.

Therefore, in this paper, we propose a novel approach to precompute visibility information based on navigation meshes, a polygonal representation of a scene’s navigable areas. We show that with only limited precomputation, high accuracy can be achieved in these areas. Furthermore, we demonstrate the usefulness of the approach by means of several applications, including viewpoint quality computation, landmark and room detection, and exploration assistance. In addition, we present a travel interface based on common visibility that we found to result in less cybersickness in a user study.

@INPROCEEDINGS{freitag2017a,

author={Sebastian Freitag and Benjamin Weyers and Torsten W. Kuhlen},

booktitle={2017 IEEE Symposium on 3D User Interfaces (3DUI)},

title={{Efficient Approximate Computation of Scene Visibility Based on Navigation Meshes and Applications for Navigation and Scene Analysis}},

year={2017},

pages={134--143},

}

Approximating Optimal Sets of Views in Virtual Scenes

Viewpoint quality estimation methods allow the determination of the most informative position in a scene. However, a single position usually cannot represent an entire scene, requiring instead a set of several viewpoints. Measuring the quality of such a set of views, however, is not trivial, and the computation of an optimal set of views is an NP-hard problem. Therefore, in this work, we propose three methods to estimate the quality of a set of views. Furthermore, we evaluate three approaches for computing an approximation to the optimal set (two of them new) regarding effectiveness and efficiency.
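Since the optimal set cannot be computed efficiently in general, approximations are needed. The sketch below shows one generic greedy heuristic, under the simplifying assumption that the quality of a set of views is the number of distinct scene objects visible from at least one of them. This measure and the names `greedy_view_set` and `visible` are hypothetical and are not claimed to match the methods proposed or evaluated in this work.

```python
def greedy_view_set(visible: dict, k: int):
    """Pick up to k viewpoints that together see as many objects as possible.

    visible maps a viewpoint id to the set of object ids seen from it.
    Returns the chosen viewpoints (in pick order) and the covered objects.
    """
    chosen, covered = [], set()
    candidates = dict(visible)  # shallow copy; the input stays untouched
    for _ in range(k):
        # Choose the viewpoint that adds the most not-yet-covered objects.
        best = max(candidates, key=lambda v: len(candidates[v] - covered),
                   default=None)
        if best is None or not (candidates[best] - covered):
            break  # nothing left that improves the coverage
        chosen.append(best)
        covered |= candidates.pop(best)
    return chosen, covered
```

For example, with `visible = {"a": {1, 2, 3}, "b": {3, 4}, "c": {4, 5, 6}}` and `k = 2`, the heuristic picks `"a"` and then `"c"`, which together cover all six objects.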

Assisted Travel Based on Common Visibility and Navigation Meshes

The manual adjustment of travel speed to cover medium or large distances in virtual environments may increase cognitive load, and manual travel at high speeds can lead to cybersickness due to inaccurate steering. In this work, we present an approach to quickly pass regions where the environment does not change much, using automated suggestions based on the computation of common visibility. In a user study, we show that our method can reduce cybersickness when compared with manual speed control.

BlowClick 2.0: A Trigger Based on Non-Verbal Vocal Input

The use of non-verbal vocal input (NVVI) as a hands-free trigger approach has proven to be valuable in previous work [Zielasko2015]. Nevertheless, BlowClick's original detection method is vulnerable to false positives and, thus, is limited in its potential use, e.g., together with acoustic feedback for the trigger. Therefore, we extend the existing approach by adding common machine learning methods. We found that a support vector machine (SVM) with Gaussian kernel performs best for detecting blowing, with at least the same latency and more precision than before. Furthermore, we added acoustic feedback to the NVVI trigger, which increases the user's confidence. To evaluate the advanced trigger technique, we conducted a user study (n=33). The results confirm that it is a reliable trigger, both alone and as part of a hands-free point-and-click interface.
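To illustrate what classification with a Gaussian-kernel SVM amounts to at detection time, the sketch below evaluates the standard decision function: a weighted sum of kernel values between the input and the support vectors, plus a bias. It covers only this decision step, not training or audio feature extraction, and the feature vectors, coefficients, and `gamma` used in the example are made-up placeholders rather than values from the study.

```python
import math

def rbf_kernel(a, b, gamma: float) -> float:
    """Gaussian (RBF) kernel: exp(-gamma * squared Euclidean distance)."""
    return math.exp(-gamma * sum((x - y) ** 2 for x, y in zip(a, b)))

def svm_decision(x, support_vectors, dual_coefs, bias: float,
                 gamma: float) -> float:
    """Signed score of feature vector x; positive means the 'blow' class."""
    return sum(c * rbf_kernel(sv, x, gamma)
               for sv, c in zip(support_vectors, dual_coefs)) + bias

# Hypothetical two-dimensional audio features and coefficients.
SUPPORT_VECTORS = [(1.0, 1.0), (0.0, 0.0)]
DUAL_COEFS = [1.0, -1.0]  # signed weights (alpha_i * y_i)

def is_blow(x, gamma: float = 1.0) -> bool:
    """Fire the trigger only if the decision score is positive."""
    return svm_decision(x, SUPPORT_VECTORS, DUAL_COEFS, 0.0, gamma) > 0.0
```

Inputs close to the first support vector are classified as blowing, while inputs close to the second are rejected.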

A Reliable Non-Verbal Vocal Input Metaphor for Clicking

We extended BlowClick, an NVVI metaphor for clicking, by adding machine learning methods to more reliably classify blowing events. We found that a support vector machine with Gaussian kernel performed best, with at least the same latency and more precision than before. Furthermore, we added acoustic feedback to the NVVI trigger, which increases the user's confidence. With this extended technique, we conducted a user study with 33 participants and could confirm that it is possible to use NVVI as a reliable trigger as part of a hands-free point-and-click interface.

Remain Seated: Towards Fully-Immersive Desktop VR

In this work we describe the scenario of fully-immersive desktop VR, which serves the overall goal to seamlessly integrate with existing workflows and workplaces of data analysts and researchers, such that they can benefit from the gain in productivity when immersed in their data-spaces. Furthermore, we provide a literature review showing the status quo of techniques and methods available for realizing this scenario under the raised restrictions. Finally, we propose a concept of an analysis framework and the decisions made and the decisions still to be taken, to outline how the described scenario and the collected methods are feasible in a real use case.

Poster: Towards a Design Space Characterizing Workflows that Take Advantage of Immersive Visualization

Immersive visualization (IV) fosters the creation of mental images of a data set, a scene, a procedure, etc. We devise an initial version of a design space for categorizing workflows that take advantage of IV. From this categorization, specific requirements for seamlessly integrating IV can be derived. We validate the design space with three workflows emerging from our research projects.

@InProceedings{Vierjahn2017,

Title = {Towards a Design Space Characterizing Workflows that Take Advantage of Immersive Visualization},

Author = {Tom Vierjahn and Daniel Zielasko and Kees van Kooten and Peter Messmer and Bernd Hentschel and Torsten W. Kuhlen and Benjamin Weyers},

Booktitle = {IEEE Virtual Reality Conference Poster Proceedings},

Year = {2017},

Pages = {329-330},

DOI={10.1109/VR.2017.7892310}

}

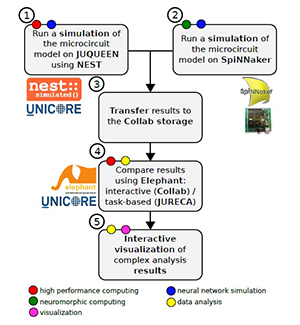

A Collaborative Simulation-Analysis Workflow for Computational Neuroscience Using HPC

Workflows for the acquisition and analysis of data in the natural sciences exhibit a growing degree of complexity and heterogeneity, are increasingly performed in large collaborative efforts, and often require the use of high-performance computing (HPC). Here, we explore the reasons for these new challenges and demands and discuss their impact, with a focus on the scientific domain of computational neuroscience. We argue for the need for software platforms integrating HPC systems that allow scientists to construct, comprehend and execute workflows composed of diverse processing steps using different tools. As a use case we present a concrete implementation of such a complex workflow, covering diverse topics such as HPC-based simulation using the NEST software, access to the SpiNNaker neuromorphic hardware platform, complex data analysis using the Elephant library, and interactive visualizations. Tools are embedded into a web-based software platform under development by the Human Brain Project, called Collaboratory. On the basis of this implementation, we discuss the state-of-the-art and future challenges in constructing large, collaborative workflows with access to HPC resources.

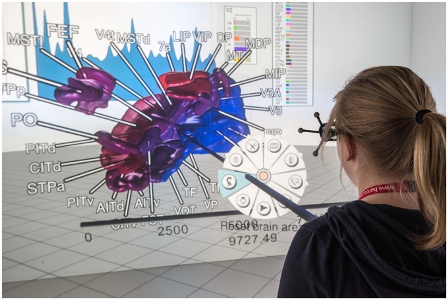

Interactive 3D Force-Directed Edge Bundling

Interactive analysis of 3D relational data is challenging. A common way of representing such data is the node-link diagram, as it supports analysts in forming a mental model of the data. However, naïve 3D depictions of complex graphs tend to be visually cluttered, even more than in a 2D layout. This makes graph exploration and data analysis less efficient. This problem can be addressed by edge bundling. We introduce a 3D cluster-based edge bundling algorithm that is inspired by the force-directed edge bundling (FDEB) algorithm [Holten2009] and fulfills the requirements to be embedded in an interactive framework for spatial data analysis. It is parallelized, and its runtime scales with the size of the graph. Furthermore, it maintains the edge’s model and thus supports rendering the graph in different structural styles. We demonstrate this with a graph originating from a simulation of the function of a macaque brain.

Visual Quality Adjustment for Volume Rendering in a Head-Tracked Virtual Environment

To avoid simulator sickness and improve presence in immersive virtual environments (IVEs), high frame rates and low latency are required. In contrast, volume rendering applications typically strive for high visual quality that induces high computational load and, thus, leads to low frame rates. To evaluate this trade-off in IVEs, we conducted a controlled user study with 53 participants. Search and count tasks were performed in a CAVE with varying volume rendering conditions which are applied according to viewer position updates corresponding to head tracking. The results of our study indicate that participants preferred the rendering condition with continuous adjustment of the visual quality over an instantaneous adjustment, which guaranteed low latency, and over no adjustment, which provided constant high visual quality but rather low frame rates. Within the continuous condition, the participants showed best task performance and felt less disturbed by effects of the visualization during movements. Our findings provide a good basis for further evaluations of how to accelerate volume rendering in IVEs according to users’ preferences.

@article{Hanel2016,

author = { H{\"{a}}nel, Claudia and Weyers, Benjamin and Hentschel, Bernd and Kuhlen, Torsten W.},

doi = {10.1109/TVCG.2016.2518338},

issn = {10772626},

journal = {IEEE Transactions on Visualization and Computer Graphics},

number = {4},

pages = {1472--1481},

pmid = {26780811},

title = {{Visual Quality Adjustment for Volume Rendering in a Head-Tracked Virtual Environment}},

volume = {22},

year = {2016}

}

Examining Rotation Gain in CAVE-like Virtual Environments

When moving through a tracked immersive virtual environment, it is sometimes useful to deviate from the normal one-to-one mapping of real to virtual motion. One option is the application of rotation gain, where the virtual rotation of a user around the vertical axis is amplified or reduced by a factor. Previous research in head-mounted display environments has shown that rotation gain can go unnoticed to a certain extent, which is exploited in redirected walking techniques. Furthermore, it can be used to increase the effective field of regard in projection systems. However, rotation gain has not yet been studied in CAVE systems. In this work, we present an experiment with 87 participants examining the effects of rotation gain in a CAVE-like virtual environment. The results show no significant effects of rotation gain on simulator sickness, presence, or user performance in a cognitive task, but indicate that there is a negative influence on spatial knowledge, especially for inexperienced users. In secondary results, we could confirm results of previous work and demonstrate that they also hold for CAVE environments, showing a negative correlation between simulator sickness and presence, cognitive performance and spatial knowledge, a positive correlation between presence and spatial knowledge, a mitigating influence of experience with 3D applications and previous CAVE exposure on simulator sickness, and a higher incidence of simulator sickness in women.
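As a minimal sketch of the rotation gain concept described above, the function below scales each tracked change of the user's real yaw by a gain factor before applying it to the virtual yaw. The function and parameter names are hypothetical; a gain of 1.0 reproduces the one-to-one mapping, values above 1.0 amplify the rotation, and values below 1.0 reduce it.

```python
def apply_rotation_gain(virtual_yaw_deg: float, real_yaw_delta_deg: float,
                        gain: float) -> float:
    """Return the new virtual yaw (degrees, wrapped to [0, 360)).

    real_yaw_delta_deg is the change in tracked head yaw since the last
    update; it is multiplied by the gain before being applied.
    """
    return (virtual_yaw_deg + gain * real_yaw_delta_deg) % 360.0
```

With a gain of 1.5, for instance, a real 90 degree turn produces a virtual 135 degree turn, which is how rotation gain can enlarge the effective field of regard.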

@ARTICLE{freitag2016a,

author={S. Freitag and B. Weyers and T. W. Kuhlen},

journal={IEEE Transactions on Visualization and Computer Graphics},

title={{Examining Rotation Gain in CAVE-like Virtual Environments}},

year={2016},

volume={22},

number={4},

pages={1462-1471},

doi={10.1109/TVCG.2016.2518298},

ISSN={1077-2626},

month={April},

}

Design and Evaluation of Data Annotation Workflows for CAVE-like Virtual Environments

Data annotation finds increasing use in Virtual Reality applications with the goal to support the data analysis process, such as architectural reviews. In this context, a variety of different annotation systems for application to immersive virtual environments have been presented. While many interesting interaction designs for the data annotation workflow have emerged from them, important details and evaluations are often omitted. In particular, we observe that the process of handling metadata to interactively create and manage complex annotations is often not covered in detail. In this paper, we strive to improve this situation by focusing on the design of data annotation workflows and their evaluation. We propose a workflow design that facilitates the most important annotation operations, i.e., annotation creation, review, and modification. Our workflow design is easily extensible in terms of supported annotation and metadata types as well as interaction techniques, which makes it suitable for a variety of application scenarios. To evaluate it, we have conducted a user study in a CAVE-like virtual environment in which we compared our design to two alternatives in terms of a realistic annotation creation task. Our design obtained good results in terms of task performance and user experience.

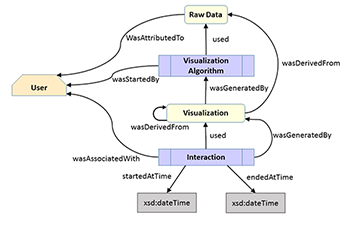

Towards Multi-user Provenance Tracking of Visual Analysis Workflows over Multiple Applications

Provenance tracking for visual analysis workflows is still a challenge, as interaction and collaboration aspects in particular are poorly covered in existing realizations. Therefore, we propose a first prototype addressing these issues based on the PROV model. Interactions in multiple applications by multiple users can be tracked by means of a web interface, which even allows tracking of remotely located collaboration partners. Finally, we demonstrate the applicability based on two use cases and discuss some open issues that are not yet addressed by our implementation but can be easily integrated into our architecture.

@inproceedings {eurorv3.20161112,

booktitle = {EuroVis Workshop on Reproducibility, Verification, and Validation in Visualization (EuroRV3)},

editor = {Kai Lawonn and Mario Hlawitschka and Paul Rosenthal},

title = {{Towards Multi-user Provenance Tracking of Visual Analysis Workflows over Multiple Applications}},

author = { H{\"{a}}nel, Claudia and Khatami, Mohammad and Kuhlen, Torsten W. and Weyers, Benjamin},

year = {2016},

publisher = {The Eurographics Association},

ISSN = {-},

ISBN = {978-3-03868-017-8},

DOI = {10.2312/eurorv3.20161112}

}

Collision Avoidance in the Presence of a Virtual Agent in Small-Scale Virtual Environments

Computer-controlled, human-like virtual agents (VAs) are often embedded into immersive virtual environments (IVEs) in order to enliven a scene or to assist users. Certain constraints need to be fulfilled, e.g., a collision avoidance strategy allowing users to maintain their personal space. Violating this flexible protective zone causes discomfort in real-world situations and in IVEs. However, no studies on collision avoidance for small-scale IVEs have been conducted yet.

Our goal is to close this gap by presenting the results of a controlled user study in a CAVE. 27 participants were immersed in a small-scale office with the task of reaching the office door. Their way was blocked either by a male or female VA, representing their co-worker. The VA showed different behavioral patterns regarding gaze and locomotion.

Our results indicate that participants preferred collaborative collision avoidance: they expect the VA to step aside in order to get more space to pass while being willing to adapt their own walking paths.

Honorable Mention for Best Technote!

@InProceedings{Boensch2016a,

Title = {Collision Avoidance in the Presence of a Virtual Agent in Small-Scale Virtual Environments},

Author = {Andrea B\"{o}nsch and Benjamin Weyers and Jonathan Wendt and Sebastian Freitag and Torsten W. Kuhlen},

Booktitle = {IEEE Symposium on 3D User Interfaces},

Year = {2016},

Pages = {145-148},

Abstract = {Computer-controlled, human-like virtual agents (VAs), are often embedded into immersive virtual environments (IVEs) in order to enliven a scene or to assist users. Certain constraints need to be fulfilled, e.g., a collision avoidance strategy allowing users to maintain

their personal space. Violating this flexible protective zone causes discomfort in real-world situations and in IVEs. However, no studies on collision avoidance for small-scale IVEs have been conducted yet. Our goal is to close this gap by presenting the results of a controlled

user study in a CAVE. 27 participants were immersed in a small-scale office with the task of reaching the office door. Their way was blocked either by a male or female VA, representing their co-worker. The VA showed different behavioral patterns regarding gaze and locomotion.

Our results indicate that participants preferred collaborative collision avoidance: they expect the VA to step aside in order to get more space to pass while being willing to adapt their own walking paths.}

}

Automatic Speed Adjustment for Travel through Immersive Virtual Environments based on Viewpoint Quality

When traveling virtually through large scenes, long distances and different detail densities render fixed movement speeds impractical. However, to manually adjust the travel speed, users have to control an additional parameter, which may be uncomfortable and requires cognitive effort. Although automatic speed adjustment techniques exist, many of them can be problematic in indoor scenes. Therefore, we propose to automatically adjust travel speed based on viewpoint quality, originally a measure of the informativeness of a viewpoint. In a user study, we show that our technique is easy to use, allowing users to reach targets faster and use fewer cognitive resources than when choosing their speed manually.

Best Technote!

@INPROCEEDINGS{freitag2016b,

author={S. Freitag and B. Weyers and T. W. Kuhlen},

booktitle={2016 IEEE Symposium on 3D User Interfaces (3DUI)},

title={{Automatic Speed Adjustment for Travel through Immersive Virtual Environments based on Viewpoint Quality}},

year={2016},

pages={67-70},

doi={10.1109/3DUI.2016.7460033},

month={March},

}

Evaluation of Hands-Free HMD-Based Navigation Techniques for Immersive Data Analysis

To use the full potential of immersive data analysis when wearing a head-mounted display, users have to be able to navigate through the spatial data. We collected, developed and evaluated 5 different hands-free navigation methods that are usable while seated in the analyst’s usual workplace. All methods meet the requirements of being easy to learn and inexpensive to integrate into existing workplaces. We conducted a user study with 23 participants which showed that a body leaning metaphor and an accelerometer pedal metaphor performed best. In the given task the participants had to determine the shortest path between various pairs of vertices in a large 3D graph.

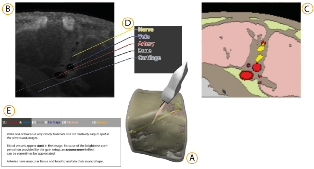

Experiences on Validation of Multi-Component System Simulations for Medical Training Applications

In the simulation of multi-component systems, we often encounter a problem with a lack of ground-truth data. This situation makes the validation of our simulation methods and models a difficult task. In this work, we present a guideline to design validation methodologies that can be applied to the validation of multi-component simulations that lack ground-truth data. Additionally, we present an example applied to an Ultrasound Image Simulation for medical training and give an overview of the considerations made and the results for each of the validation methods. With these guidelines, we expect to obtain more comparable and reproducible validation results from which other similar work can benefit.

@InProceedings{eurorv3.20161113,

author = {Law, Yuen C. and Weyers, Benjamin and Kuhlen, Torsten W.},

title = {{Experiences on Validation of Multi-Component System Simulations for Medical Training Applications}},

booktitle = {EuroVis Workshop on Reproducibility, Verification, and Validation in Visualization (EuroRV3)},

year = {2016},

editor = {Kai Lawonn and Mario Hlawitschka and Paul Rosenthal},

publisher = {The Eurographics Association},

doi = {10.2312/eurorv3.20161113},

isbn = {978-3-03868-017-8},

pages = {29--33}

}

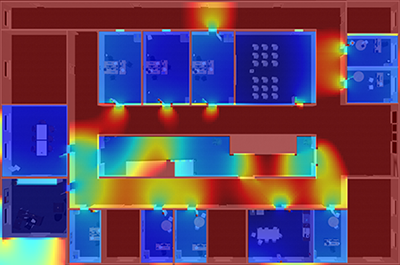

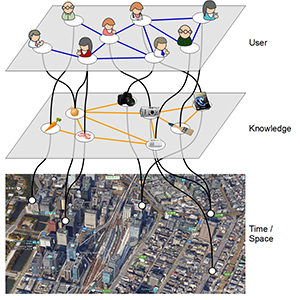

Web-based Interactive and Visual Data Analysis for Ubiquitous Learning Analytics

Interactive visual data analysis is a well-established class of methods to gather knowledge from raw and complex data. A broad variety of examples can be found in literature presenting its applicability in various ways and different scientific domains. However, fully fledged solutions for visual analysis addressing learning analytics are still rare. Therefore, this paper will discuss visual and interactive data analysis for learning analytics by presenting best practices followed by a discussion of a general architecture combining interactive visualization employing the Information Seeking Mantra in conjunction with the paradigm of coordinated multiple views. Finally, by presenting a use case for ubiquitous learning analytics its applicability will be demonstrated with the focus on temporal and spatial relation of learning data. The data is gathered from a ubiquitous learning scenario offering information for students to identify learning partners and provides information to teachers enabling the adaption of their learning material.

@InProceedings{weyers2016a,

Title = {Web-based Interactive and Visual Data Analysis for Ubiquitous Learning Analytics},

Author = {Benjamin Weyers and Christian Nowke and Torsten Wolfgang Kuhlen and Mouri Kousuke and Hiroaki Ogata},

Booktitle = {First International Workshop on Learning Analytics Across Physical and Digital Spaces co-located with 6th International Conference on Learning Analytics \& Knowledge (LAK 2016)},

Year = {2016},

Pages = {65--69},

Editor = {Roberto Martinez-Maldonado and Davinia Hernandez-Leo},

Volume = {1601},

Abstract = {Interactive visual data analysis is a well-established class of methods to gather knowledge from raw and complex data. A broad variety of examples can be found in literature presenting its applicability in various ways and different scientific domains. However, fully fledged solutions for visual analysis addressing learning analytics are still rare. Therefore, this paper will discuss visual and interactive data analysis for learning analytics by presenting best practices followed by a discussion of a general architecture combining interactive visualization employing the Information Seeking Mantra in conjunction with the paradigm of coordinated multiple views. Finally, by presenting a use case for ubiquitous learning analytics its applicability will be demonstrated with the focus on temporal and spatial relation of learning data. The data is gathered from a ubiquitous learning scenario offering information

for students to identify learning partners and provides information to teachers enabling the adaption of their learning material.},

Keywords = {interactive analysis, web-based visualization, learning analytics},

Url = {http://ceur-ws.org/Vol-1601/}

}

Poster: Evaluation of Hands-Free HMD-Based Navigation Techniques for Immersive Data Analysis

To use the full potential of immersive data analysis when wearing a head-mounted display, the user has to be able to navigate through the spatial data. We collected, developed and evaluated 5 different hands-free navigation methods that are usable while seated in the analyst’s usual workplace. All methods meet the requirements of being easy to learn and inexpensive to integrate into existing workplaces. We conducted a user study with 23 participants which showed that a body leaning metaphor and an accelerometer pedal metaphor performed best within the given task.

Integrating Visualizations into Modeling NEST Simulations

Modeling large-scale spiking neural networks showing realistic biological behavior in their dynamics is a complex and tedious task. Since these networks consist of millions of interconnected neurons, their simulation produces an immense amount of data. In recent years it has become possible to simulate even larger networks. However, solutions to assist researchers in understanding the simulation's complex emergent behavior by means of visualization are still lacking. While developing tools to partially fill this gap, we encountered the challenge of integrating these tools easily into the neuroscientists' daily workflow. To understand what makes this so challenging, we looked into the workflows of our collaborators and analyzed how they use the visualizations to solve their daily problems. We identified two major issues: first, the analysis process can rapidly change focus, which requires switching to the visualization tool that assists in the current problem domain. Second, because of the heterogeneous data that results from simulations, researchers want to relate different data to investigate them effectively. A monolithic application model that processes and visualizes all data modalities and reflects all combinations of possible workflows in a holistic way is most likely impossible to develop and to maintain. A software architecture that offers specialized visualization tools that run simultaneously and can be linked together to reflect the current workflow is a more feasible approach. To this end, we have developed a software architecture that allows neuroscientists to integrate visualization tools more closely into the modeling tasks. In addition, it forms the basis for semantic linking of different visualizations to reflect the current workflow. In this paper, we present this architecture and substantiate the usefulness of our approach by common use cases we encountered in our collaborative work.

Proceedings of the Workshop on Formal Methods in Human Computer Interaction (FoMHCI)

Conference Proceedings

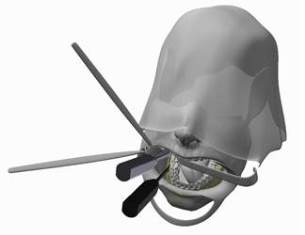

Simulation-based Ultrasound Training Supported by Annotations, Haptics and Linked Multimodal Views

When learning ultrasound (US) imaging, trainees must learn how to recognize structures, interpret textures and shapes, and simultaneously register the 2D ultrasound images to their 3D anatomical mental models. Alleviating the cognitive load imposed by these tasks should free the cognitive resources and thereby improve the learning process. We argue that the amount of cognitive load that is required to mentally rotate the models to match the images to them is too large and therefore negatively impacts the learning process. We present a 3D visualization tool that allows the user to naturally move a 2D slice and navigate around a 3D anatomical model. The slice is displayed in-place to facilitate the registration of the 2D slice in its 3D context. Two duplicates are also shown externally to the model; the first is a simple rendered image showing the outlines of the structures and the second is a simulated ultrasound image. Haptic cues are also provided to the users to help them maneuver around the 3D model in the virtual space. With the additional display of annotations and information of the most important structures, the tool is expected to complement the available didactic material used in the training of ultrasound procedures.

Comparison and Evaluation of Viewpoint Quality Estimation Algorithms for Immersive Virtual Environments

The knowledge of which places in a virtual environment are interesting or informative can be used to improve user interfaces and to create virtual tours. Viewpoint Quality Estimation algorithms approximate this information by calculating quality scores for viewpoints. However, even though several such algorithms exist and have also been used, e.g., in virtual tour generation, they have never been comparatively evaluated on virtual scenes. In this work, we introduce three new Viewpoint Quality Estimation algorithms, and compare them against each other and six existing metrics, by applying them to two different virtual scenes. Furthermore, we conducted a user study to obtain a quantitative evaluation of viewpoint quality. The results reveal strengths and limitations of the metrics on actual scenes, and provide recommendations on which algorithms to use for real applications.

@InProceedings{Freitag2015,

Title = {{Comparison and Evaluation of Viewpoint Quality Estimation Algorithms for Immersive Virtual Environments}},

Author = {Freitag, Sebastian and Weyers, Benjamin and B\"{o}nsch, Andrea and Kuhlen, Torsten W.},

Booktitle = {ICAT-EGVE 2015 - International Conference on Artificial Reality and Telexistence and Eurographics Symposium on Virtual Environments},

Year = {2015},

Pages = {53-60},

Doi = {10.2312/egve.20151310}

}

Haptic 3D Surface Representation of Table-Based Data for People With Visual Impairments

The UN Convention on the Rights of Persons with Disabilities Article 24 states that “States Parties shall ensure inclusive education at all levels of education and life long learning.” This article focuses on the inclusion of people with visual impairments in learning processes including complex table-based data. Gaining insight into and understanding of complex data is a highly demanding task for people with visual impairments. Especially in the case of table-based data, the classic approaches of braille-based output devices and printing concepts are limited. Haptic perception requires sequential information processing rather than the parallel processing used by the visual system, which makes it harder to gain a fast overview of and deeper insight into the data through touch. Nevertheless, neuroscientific research has identified great dependencies between haptic perception and the cognitive processing of visual sensing. Based on these findings, we developed a haptic 3D surface representation of classic diagrams and charts, such as bar graphs and pie charts. In a qualitative evaluation study, we identified certain advantages of our relief-type 3D chart approach. Finally, we present an education model for German schools that includes a 3D printing approach to help integrate students with visual impairments.

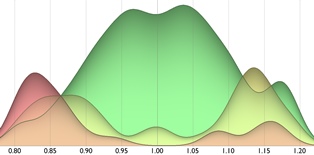

Verified Stochastic Methods in Geographic Information System Applications With Uncertainty

Modern localization techniques are based on the Global Positioning System (GPS). In general, the accuracy of the measurement depends on various uncertain parameters. In addition, despite its relevance, a number of localization approaches fail to consider the modeling of uncertainty in geographic information system (GIS) applications. This paper describes a new verified method for uncertain (GPS) localization for use in GPS and GIS application scenarios based on Dempster-Shafer theory (DST), with two-dimensional and interval-valued basic probability assignments. The main benefit our approach offers for GIS applications is a workflow concept using DST-based models that are embedded into an ontology-based semantic querying mechanism accompanied by 3D visualization techniques. This workflow provides interactive means of querying uncertain GIS models semantically and provides visual feedback.

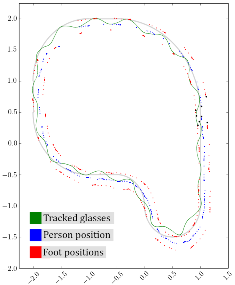

Low-Cost Vision-Based Multi-Person Foot Tracking for CAVE Systems with Under-Floor Projection

In this work, we present an approach for tracking the feet of multiple users in CAVE-like systems with under-floor projection. It is based on low-cost consumer cameras, does not require users to wear additional equipment, and can be installed without modifying existing components. If the brightness of the floor projection does not contain too much variation, the feet of several people can be successfully and precisely tracked and assigned to individuals. The tracking data can be used to enable or enhance user interfaces like Walking-in-Place or torso-directed steering, provide audio feedback for footsteps, and improve the immersive experience for multiple users.

BlowClick: A Non-Verbal Vocal Input Metaphor for Clicking

In contrast to the wide-spread use of 6-DOF pointing devices, freehand user interfaces in Immersive Virtual Environments (IVE) are non-intrusive. However, for gesture interfaces, the definition of trigger signals is challenging. Mechanical devices, dedicated trigger gestures, and speech recognition are commonly used options, but each comes with its own drawbacks. In this paper, we present an alternative approach that triggers events precisely and with low latency using microphone input. In contrast to speech recognition, the user only blows into the microphone. The audio signature of such blow events can be recognized quickly and precisely. The results of a user study show that the proposed method allows users to successfully complete a standard selection task and performs better than expected against a standard interaction device, the Flystick.

Gaze Guiding zur Unterstützung der Bedienung technischer Systeme (Gaze Guiding to Support the Operation of Technical Systems)

Avoiding operating errors is of central importance, especially in safety-critical systems. To make it easier to recall previously learned skills for operating and controlling technical systems, and thereby avoid errors, so-called refresher interventions are used. So far, at least, these have been elaborate simulation training sessions that refresh previously learned skills through repeated execution, so that they can be recalled correctly in rarely occurring critical situations. This work shows how the goal of recall can also be achieved without a refresher, in the form of a gaze guiding component that is embedded in a visual user interface for operating the technical process and supports skill retrieval through targeted, context-dependent overlays and fade-ins. The effect of this concept is currently being investigated in a larger DFG-funded study.

An Integrative Tool Chain for Collaborative Virtual Museums in Immersive Virtual Environments

Various conceptual approaches for the creation and presentation of virtual museums can be found. However, less work exists that concentrates on collaboration in virtual museums. The support of collaboration in virtual museums provides various benefits for the visit as well as the preparation and creation of virtual exhibits. This paper addresses one major problem of collaboration in virtual museums: the awareness of visitors. We use a Cave Automated Virtual Environment (CAVE) for the visualization of generated virtual museums to offer simple awareness through co-location. Furthermore, the use of smartphones during the visit enables the visitors to create comments or to access exhibit related metadata. Thus, the main contribution of this ongoing work is the presentation of a workflow that enables an integrated deployment of generic virtual museums into a CAVE, which will be demonstrated by deploying the virtual Leopold Fleischhacker Museum.

Crowdsourcing and Knowledge Co-creation in Virtual Museums

This paper gives an overview on crowdsourcing practices in virtual museums. Engaged nonprofessionals and specialists support curators in creating digital 2D or 3D exhibits, exhibitions and tour planning and enhancement of metadata using the Virtual Museum and Cultural Object Exchange Format (ViMCOX). ViMCOX provides the semantic structure of exhibitions and complete museums and includes new features, such as room and outdoor design, interactions with artwork, path planning and dissemination and presentation of contents. Application examples show the impact of crowdsourcing in the Museo de Arte Contemporaneo in Santiago de Chile and in the virtual museum depicting the life and work of the Jewish sculptor Leopold Fleischhacker. A further use case is devoted to crowd-based support for restoration of high-quality 3D shapes.

"Workshop on Formal Methods in Human Computer Interaction."

This workshop aims to gather active researchers and practitioners in the field of formal methods in the context of user interfaces, interaction techniques, and interactive systems. The main objectives are to look at the evolutions of the definition and use of formal methods for interactive systems since the last book on the field nearly 20 years ago and also to identify important themes for the next decade of research. The goals of this workshop are to offer an exchange platform for scientists who are interested in the formal modeling and description of interaction, user interfaces, and interactive systems and to discuss existing formal modeling methods in this area of conflict. Participants will be asked to present their perspectives, concepts, and techniques for formal modeling along one of two case studies – the control of a nuclear power plant and an air traffic management arrival manager.

FILL: Formal Description of Executable and Reconfigurable Models of Interactive Systems

This paper presents the Formal Interaction Logic Language (FILL) as a modeling approach for the description of user interfaces in an executable way. In the context of the workshop on Formal Methods in Human Computer Interaction, this work presents FILL by first introducing its architectural structure, its visual representation, and its transformation to reference nets, a special type of Petri nets, and finally discussing FILL in the context of two use cases proposed by the workshop. In doing so, this work shows how FILL can be used to model automation as part of the user interface model as well as how formal reconfiguration can be used to implement user-based automation given a formal user interface model.

3DUIdol - 6th Annual 3DUI Contest

The 6th annual IEEE 3DUI contest focuses on Virtual Music Instruments (VMIs), and on 3D user interfaces for playing them. The Contest is part of the IEEE 2015 3DUI Symposium held in Arles, France. The contest is open to anyone interested in 3D User Interfaces (3DUIs), from researchers to students, enthusiasts, and professionals. The purpose of the contest is to stimulate innovative and creative solutions to challenging 3DUI problems. Due to the recent explosion of affordable and portable 3D devices, this year's contest will be judged live at 3DUI. Selected 3DUI experts will judge the entries during on-site presentations at the conference. Therefore, contestants are required to bring their systems for live judging and for attendees to experience them.

Poster: Effects and Applicability of Rotation Gain in CAVE-like Environments

In this work, we report on a pilot study we conducted, and on a study design, to examine the effects and applicability of rotation gain in CAVE-like virtual environments. The results of the study will give recommendations for the maximum levels of rotation gain that are reasonable in algorithms for enlarging the virtual field of regard or redirected walking.

Innovative Mensch-Maschine Schnittstelle für Prüf- und Diagnosesysteme (Innovative Human-Machine Interface for Testing and Diagnostic Systems)

To date, the human-machine interface (HMI) of testing and diagnostic software for the automotive industry has been created by third-party vendors for specific testing processes according to inconsistent and unsystematic guidelines, and marketed together with the corresponding hardware. A preliminary study conducted in 2012 at an automobile manufacturer showed that these guidelines unfortunately rarely meet the desired requirements for human-centered and user-friendly design. In this work, a known method is used to investigate new requirements for the HMI in diagnosis and testing on the assembly line with regard to usability and human factors.

A 3D Collaborative Virtual Environment to Integrate Immersive Virtual Reality into Factory Planning Processes